- Feb 14, 2004

The robots are coming for you:

https://www.engadget.com/2018/10/24/autonomous-vehicles-ethics-mit-study/

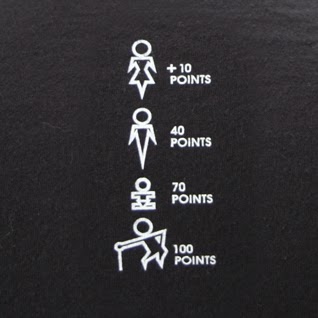

This is where China's social credit scoring system could really pay off. Phones can be equipped to constantly broadcast one's social credit and cars can be programmed to swerve accordingly.

Those 'moral' decisions are such a red herring when talking about self-driving car ethics.

1) It assumes perfect knowledge. Real life is messy. Even knowing that there is a solid object there is uncertain, since things like mist can generate solid returns, let alone telling the age and state of health of everyone involved.

2) Decisions will be based on the law and legal liability, not ethics. If the choice is between staying on the road and braking hard, hitting a family of 5, versus going onto the sidewalk and killing a crippled 90-year-old, the car will hit the family of 5 every time. The manufacturer is likely on the hook for these choices, so lawyers will be making them, and the choice will always be whichever creates the least legal risk.

Interesting thought experiments on the relative value of human life (which, BTW, insurance apparently has metrics for). I know that, as a car buyer, I'd want it to prioritize my life over anyone else's, though.

> This is where China's social credit scoring system could really pay off. Phones can be equipped to constantly broadcast one's social credit and cars can be programmed to swerve accordingly.

You should also get extra points for big tits. We gotta save them titties.

> This is where China's social credit scoring system could really pay off. Phones can be equipped to constantly broadcast one's social credit and cars can be programmed to swerve accordingly.

Seems to me that the free market could be used here. Folks could opt to buy higher preference ratings to avoid being hit.

Their best bet is to just make it a configurable parameter. Then, whatever the outcome, blame the driver for how it was configured, not the manufacturer. I could see that happening, TBH. Either way, I think the driver will always be held at fault, since you will still be expected to have both hands on the wheel and be fully alert and ready to take over at any time. Even if the AI could handle a driving situation better, if the AI does fail, the driver will still be expected to have overridden it and done better. It's all in the language of the EULA that will definitely ship with the car and that you'll have to agree to when you first use it. Heck, it might even make you agree to it each time you start the car. It could be something as simple as a popup on an LCD that asks whether you have read it and agree to it.