S/A: AMD kills Kaveri, Steamroller, Excavator

On a given node, transistor density is inversely proportional to clockspeed because transistor density is directly proportional to Idrive (transistor width being the transistor density driver for a given node).

All those low-clocked high-density GPU-dedicated xtors on Llano and trinity are what drive up their aggregate xtor count for the IC in toto.

And that kids, is your solid state physics lesson for the day 😛

If AMD were to take a page out of Intel's history book, AMD would go back to the shrunk stars core they created for Llano as a starting point for a reboot of their microarchitecture line targeting 20nm at TSMC with plans to leverage the 16nm finfet xtor substitution.

Go back to what was sorta working, same as Intel did in reaching backwards to the P3 lineage as a replacement for Netburst, and leverage the advantage of a node shrink or two.

Wouldn't it make more sense to use the Bobcat/Jaguar line instead?

I'm the last guy to ask what would make sense, beats me which is better.

My armchair analysis is this - if AMD wanted to build a silly high core-count CPU (requires small die-size per core) with less than stellar single-threaded IPC (i.e. bulldozer) then they would have been better off creating bulldozer as a bunch of bobcat or jaguar cores bolted together (sea-of-jaguars) because at least then it would have had the low-power aspects built-in from the beginning.

But the problem with selling netburst was IPC and power/performance. And the problem with bulldozer was IPC and power/performance.

Core 2 Duo addressed both of Netburst's weaknesses in one stroke. So if we extend the analogy to AMD and bulldozer then we wouldn't look to Jaguar because Jaguar represents an even further step back in IPC compared to steamroller.

What AMD has that bests the IPC of bulldozer and piledriver is the Llano cpu core. So the analogy for Intel's recipe of success as extended to AMD is for them to revisit Llano's core and update its ISA to keep it relevant.

ViRGE

Elite Member, Moderator Emeritus

So let me ask you this IDC: why is it that power consumption always spikes above 4GHz? What's so special about that frequency that Intel and co can hold power consumption relatively low in the 3GHz range, but that gives way at 4GHz? BD/PD is a power pig, Intel's processors only touch 4GHz with one core active (implying it's consuming around as much power as 3-4 cores at 3.4GHz or so), and overclocking SNB/IVB past 4GHz comes with a major power penalty.

This can't just be a design issue, can it?

AnandThenMan

Diamond Member

4GHz is just the point where the fabric of space/time starts to break down.

I would like an actual answer as well.

cbn

Lifer

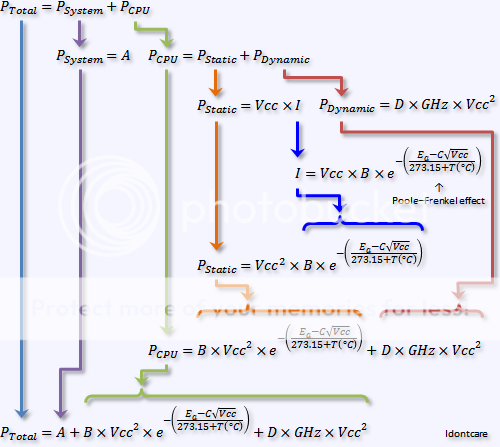

Some more information to add ( I believe this agrees with Abwx):

http://forums.anandtech.com/showpost.php?p=30918873&postcount=4

Idontcare said: CPU heat is proportional to CPU temperature is proportional to CPU power-consumption.

Power-consumption is proportional to the cube of the operating voltage and linearly with the clockspeed.

http://forums.anandtech.com/showthread.php?p=27459192#post27459192

Idontcare said: Here you go, Vcc versus power consumption at the wall for my system.

http://i272.photobucket.com/al...usPowerConsumption.gif

Note that the coefficients for both the squared and linear terms are practically zero (10^-9 and 10^-6). The y-intercepts represent system power consumption in the absence of the CPU (so chipset, ram, hdrives, vidcard, fans, PSU efficiency, etc) - comes in the vicinity of 105-120W which is reasonable for my components.

If I force the fit to be quadratic I get non-physical y-intercepts, 700W in one case and 14W in the other, neither of which could possibly be my system-level power consumption, so this counts as a second piece of evidence that quadratic power scaling for my system is not correct.

So in conclusion, for a 65nm Kentsfield quadcore chip, my results corroborate the lost circuits claims that power consumption scales as the cube of cpu voltage, not the square.

P.S. Thanks for all the experiments you share IDC :thumbsup: (May the wind be always at your back and good luck always with you.)

This is the system consumption, not that of the CPU.

Actually, the TDP vs. voltage graph follows a square law, not a cubic law as mentioned in IDC's post.

We can see clearly that from 1V to 1.5V the power increases by close to 2.25 = 1.5^2, from about 160 to 320W.

JumpingJack

Member

With all due respect to IDC, Abwx is correct: in the limit that Pdynamic >> Pstatic and Pdynamic >> Psc, the power is quadratic with respect to voltage and linear with respect to frequency.

http://bwrc.eecs.berkeley.edu/php/pubs/pubs.php/418/paper.fm.pdf (see p. 3)

If those assumptions above do not hold, it is still quadratic wrt voltage and linear wrt frequency, and Ptotal = Pdynamic + Pstatic + Psc.

IDC fitted the data with an empirical polynomial, and the fit occurred in a linear portion of the curve. In essence, a linear, quadratic, cubic, or even quartic polynomial would have given a good R^2. Added to the complexity is the fact that it is system-level power, with the inefficiency of the VRM, PSU, and busses all convoluted into the result.
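To make the "any polynomial fits a narrow range" point concrete, here is a minimal sketch. It generates purely quadratic CPU power on top of a constant system baseline and fits a straight line to it over a small voltage window. The baseline, coefficient, and voltage window are made-up values for illustration, not IDC's measurements.

```python
# Hypothetical illustration: none of these numbers are IDC's measurements.
BASELINE_W = 110.0   # assumed non-CPU system power
K_W_PER_V2 = 60.0    # assumed coefficient so that CPU power = K * V^2


def system_power(vcc):
    """Purely quadratic CPU power on top of a constant system baseline."""
    return BASELINE_W + K_W_PER_V2 * vcc ** 2


def linear_fit_r2(xs, ys):
    """Ordinary least-squares straight line; returns (slope, intercept, R^2)."""
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    sxx = sum((x - mean_x) ** 2 for x in xs)
    sxy = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    slope = sxy / sxx
    intercept = mean_y - slope * mean_x
    ss_res = sum((y - (slope * x + intercept)) ** 2 for x, y in zip(xs, ys))
    ss_tot = sum((y - mean_y) ** 2 for y in ys)
    return slope, intercept, 1.0 - ss_res / ss_tot


voltages = [1.20 + 0.02 * i for i in range(11)]   # 1.20 V .. 1.40 V
powers = [system_power(v) for v in voltages]
slope, intercept, r2 = linear_fit_r2(voltages, powers)

print(f"R^2 of a straight line through quadratic data: {r2:.5f}")
print(f"fitted intercept: {intercept:.1f} W (true baseline: {BASELINE_W} W)")
```

Under these assumptions the straight line scores an R^2 above 0.99 even though the underlying data is exactly quadratic, and its intercept lands well below the true baseline. So a good R^2 over a narrow window says little about the true exponent, and the fitted intercept is only as trustworthy as the functional form behind it.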

On a given node, transistor density is inversely proportional to clockspeed because transistor density is directly proportional to Idrive (transistor width being the transistor density driver for a given node).

All those low-clocked high-density GPU-dedicated xtors on Llano and trinity are what drive up their aggregate xtor count for the IC in toto.

The device sizing is a second-order effect, and the sizing is less-directly related to clock speed than you might expect (for example, relatively low-clocked (as compared to x86) ARM designs tend to use big "12-track" libraries). You can see the white space at the Bulldozer SOC level - it's absurd...it looks like there's easily room for a whole extra bank of L3 cache even without allowing for the funny shapes that the 45nm six-core designs used. Then within the cores, you can see little blobs of logic with wasted space around them. Then within each hand-designed piece you can see wasted space around each column of gates. SRAM is densest; large-scale random logic implementation by hand is often least dense. Automated design falls in between. These are sweeping generalizations, of course.

cbn

Lifer

Yes, that is system power consumption. (Notice in post #208 of this thread the IDC quote says the y-intercept corresponds to the power consumption of his system sans CPU).

The y-intercepts represent system power consumption in the absence of the CPU (so chipset, ram, hdrives, vidcard, fans, PSU efficiency, etc) - comes in the vicinity of 105-120W which is reasonable for my components.

(In very simple terms and as I understand the situation) When IDC isolates the CPU power increase from the baseline system power consumption he gets the cube rather than the square for increasing voltage.
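A rough way to see why the baseline matters so much: take the two approximate wall readings quoted above (about 160W at 1V and about 320W at 1.5V) and ask what exponent n in P_cpu ~ V^n they imply once a constant baseline is subtracted. This is only a two-point back-of-envelope sketch, not IDC's fitting method, and it ignores PSU efficiency and temperature. The 105W and 120W baselines are the y-intercept range from IDC's quoted post.

```python
import math

# Approximate readings quoted in the thread (read off IDC's graph by eye).
V_LOW, V_HIGH = 1.0, 1.5          # volts
P_LOW, P_HIGH = 160.0, 320.0      # watts at the wall, approximate


def implied_exponent(baseline_w):
    """Exponent n in P_cpu ~ V^n after subtracting a constant baseline."""
    ratio = (P_HIGH - baseline_w) / (P_LOW - baseline_w)
    return math.log(ratio) / math.log(V_HIGH / V_LOW)


for baseline in (0.0, 105.0, 120.0):
    print(f"baseline {baseline:5.1f} W -> exponent {implied_exponent(baseline):.2f}")
```

With no baseline subtracted the implied exponent is a bit under 2, and with the 105-120W baseline removed it comes out above 3. The same two data points can therefore look square-law or cube-law depending on whether you are talking about system power or CPU-only power.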

A post from IDC later on in the thread:

http://forums.anandtech.com/showpost.php?p=27461231&postcount=22

Idontcare said: I would have never believed my CPU exhibits x^3 power consumption as a function of Vcc unless I had seen it with my own eyes. I was amazed to see the results too.

P.S. For those wanting to read the thread from the beginning here is the opening post --> http://forums.anandtech.com/showthread.php?t=268297

AtenRa

Lifer

Still don't have a clue, do you?

The first 1156 chip wasn't i3 Clarkdale but i7 and i5 Lynnfield, which arrived in Q309. Also 32nm 1156, which arrived in Q110, comprised the entire lineup, from Celeron to i7.

I said the first 32nm was the Core i3 5xx series launched in Q1 2010. Socket 1156 Core i5 and Core i7 were 45nm, launched in September 2009. One year later, in Q3 2010, Socket 775 had 60-70% of Intel's shipments. Only the Core i3 5xx series was 32nm at that time. The majority of the Intel lineup was still 45nm.

What's the source of your 60-70% LGA775 for Q111? Because in Q111 Intel had three fabs producing 32nm chips and only two producing 45nm, and not only westmere was in production, but SNB would have the fastest ramp up of all times.

I have said 60-70% in Q3 2010, but even in Q1 2011 Socket 775 had a large percentage of Intel's shipments.

In Q1 2011, Intel was expecting 20% of the shipments to be Sandy Bridge (Core i5, i7). Even then 45nm processors were the majority of Intel's shipments.

Intel and AMD keep using the previous litho process for a long time, even one or two years after the next process has been released. TSMC does the same, as does every other foundry in the world.

So still selling Athlon X2s (a high-volume, low-price product) is not unheard of, but as I have said before, Llano and Trinity shipments were below what they should have been.

eternalone

Golden Member

AMD has issued a denial.

I have said 60-70% in Q3 2010, but even in Q1 2011 Socket 775 had a large percentage of Intel's shipments

Source?

beginner99

Diamond Member

BD architecture was designed for the APU first and for Servers.

True, but I mean the actual chip and its Opteron version, not the architecture.

The results Computer Bottleneck linked to are unfortunately quite dated and reflect a time period in which, for characterizing the end-user's observation of power consumption, it was adequate to lump it all together as a cubic polynomial.

The reason it sorta worked, which also happened to be the same reason it had to be done that way, was because of temperature and the fact that none of the experiments being done (by me and by the third-party reviews) properly accounted for the operating temperature of the CPU.

In later works (which themselves are now becoming dated as well) I addressed this gap in the models and correctly accounted for the impact/effect of the CPU's operating temperature on the power-consumption of the IC:

Effect of Temperature on Power-Consumption with the i7-2600K

i7-3770K vs. i7-2600K: Temperature, Voltage, GHz and Power-Consumption Analysis

So no worries, the correct equations are available and are employed in all current analyses of power-consumption, but temperature must be captured alongside the system power consumption.

If you don't capture the temperature data then what you will find is that the power usage of the IC increases as a cubic because of what essentially becomes a dominant term in the Taylor series expansion of the thermally activated portion (the Arrhenius equation portion) of the static power consumption.

Cerb

Elite Member

I thought "pulling a Pentium M" meant dropping your current inefficient architecture, going back to what you had before, and die-shrinking it. In Intel's case, they dropped P4 and went back to PIII. In AMD's case, that would mean dropping Bulldozer and going back to...K10. They already die-shrunk it to 32nm and it seemed to work well, after all.

But, they never really improved it. The Pentium-M wasn't just a Pentium III. It was a Pentium III with many small improvements--it was superior to the Pentium III. Stars may have worked out OK, but if they were going to take that route, they missed their chance ages ago: re-implement it with newer tools, and iteratively improve upon it, towards some long-term design goals (such as a high-bandwidth shared front-end).

As of now, BD's descendants are already better than Stars, so that wouldn't be a good move. They've got to take their lemons, and make some bitter moldy lemonade with them 🙂.

The results Computer Bottleneck linked to are unfortunately quite dated and reflected a time period in which characterizing the end-users observation of power consumption was adequate to lump it all together as a cubic polynomial.

Cubic law is perfectly in line with end users gladly cranking up voltages to get more GHz.....😉

In later works (which themselves are now becoming dated as well) I addressed this gap in the models and correctly accounted for the

If you don't capture the temperature data then what you will find is that the power usage of the IC increases as a cubic because of what essentially becomes a dominant term in the taylor series expansion of the thermally activated portion (the arrhenius equation portion) of the static power consumption.

Great work indeed, even too technical to be published in an official AT or TR review..

JumpingJack

Member

Indeed, the take home message is the same whether it is the old IDC data set cubic fit or the theoretical quadratic functionality, voltage is the largest power modulator knob.

In practice it actually tends to be a 50/50 sort of deal for us enthusiasts who are willing to invest in decent 3rd party cooling (or more extravagant lapping and delidding methods); allow me to show what I mean by way of answering ViRGE's question below.

(btw, always a pleasure to see you posting, you wouldn't know this but I am a longtime fan of yours from your posts over on XS :wub:, I never post there though, just a lurker for years now)

So let me ask you this IDC: why is it that power consumption always spikes above 4GHz? What's so special about that frequency that Intel and co can hold power consumption relatively low in the 3GHz range, but that gives way at 4GHz? BD/PD is a power pig, Intel's processors only touch 4GHz with one core active (implying it's consuming around as much power as 3-4 cores at 3.4GHz or so), and overclocking SNB/IVB past 4GHz comes with a major power penalty.

This can't just be a design issue, can it?

There is a short answer, a long practical answer, and the verrrrry long academic answer 😉 I'll only bore you with the former two and spare you of the latter 😀

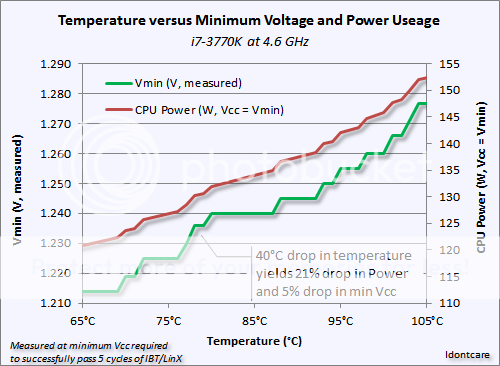

The short answer is that power consumption markedly spikes upwards once you get above 4GHz because the CPU's operating temperature is also increasing in a way that is insidiously problematic.

The temperature increases, which increases leakage so power goes up for that alone...but the increase in leakage raises the temperature, which raises the power consumption even more, which raises the temperature even more...this is referred to as the "thermal runaway effect".

Eventually this foot-race between thermal runaway and peak operating temperature reaches a steady-state that depends entirely on our cooling efficacy. The TIM, the HSF, the ambient temps, etc.
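The feedback loop and its cooling-dependent steady state can be sketched as a simple fixed-point iteration. Everything in this model is an assumption made for illustration: leakage that doubles every 20°C, a single lumped thermal resistance for the cooler, and the specific wattages. None of it comes from measured 3770k data.

```python
def steady_state(p_dynamic_w, r_thermal_c_per_w, t_ambient_c=25.0,
                 leak_ref_w=10.0, t_ref_c=65.0, leak_doubling_c=20.0,
                 t_limit_c=105.0, max_iters=1000):
    """Iterate temperature -> power -> temperature until it settles.

    Returns (temperature_c, power_w) at steady state, or None if the
    feedback pushes the die past t_limit_c. All default parameters are
    made-up illustrative values, not measurements.
    """
    temp = t_ambient_c
    power = p_dynamic_w
    for _ in range(max_iters):
        # Leakage grows exponentially with temperature (assumed doubling rule).
        leakage = leak_ref_w * 2.0 ** ((temp - t_ref_c) / leak_doubling_c)
        power = p_dynamic_w + leakage
        # Lumped cooler model: temperature rise is proportional to power.
        new_temp = t_ambient_c + r_thermal_c_per_w * power
        if new_temp > t_limit_c:
            return None
        if abs(new_temp - temp) < 1e-6:
            return new_temp, power
        temp = new_temp
    return temp, power


good_cooler = steady_state(p_dynamic_w=100.0, r_thermal_c_per_w=0.3)
weak_cooler = steady_state(p_dynamic_w=100.0, r_thermal_c_per_w=0.6)
print("good cooler:", good_cooler)
print("weak cooler:", weak_cooler)
```

With the better (lower thermal resistance) cooler the loop settles at a steady temperature and power. With the weaker one, the same dynamic load has no steady state below the limit in this toy model and the function returns None. Note the sketch holds voltage fixed, so it leaves out the second half of the story, where rising temperature also raises the minimum stable voltage.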

But that is only the half of it, the reason I labeled it "insidiously problematic" is that as the operating temperature increases so too does the minimum voltage necessary for the CPU's circuits to operate in a stable and reliable fashion.

I am now getting into the longer answer I alluded to above, sans the more boring academic stuff I promise 😉, and so I will bring some data into the conversation to speak to:

These data were generated with my 3770k, and what they show is that the minimum voltage needed to stabilize the circuits (signal-to-noise) such that the CPU can reliably pass the rigors of LinX rises with temperature (which is expected, but the data give numbers to speak to).

In this test the CPU is held steady at 4.6GHz, and if we use a fancy expensive H100 that is lapped and so forth then we can clock this 3770k at 4.6GHz with a mere 1.214V and the peak operating temperature is 65°C. (the green line, which is the CPU voltage, goes with the left-side axis)

As the temperature rises, which would happen if I had a lower quality cooling solution or if my ambient temperature rose, that 1.214V is no longer sufficient to keep the CPU stable.

So I must raise the CPU's voltage, solely because of temperature, and in doing so the voltage increase results in an increase in power consumption. (the red line, which is the CPU's power consumption, goes with the right-side axis)

The increase in temperature results in an increase in leakage current, which increases power usage, which increases temperature, which causes a required increase in the minimum voltage necessary for stability, which increases power, which increases temperature, etc etc.

That makes the higher clockspeeds particularly problematic because the clockspeed is what drives the voltage requirement to first-order, but voltage drives the power consumption as a quadratic, and the power consumption feeds back and drives the temperature as quadratic (thermal dissipation goes to the 4th power of the difference between hot and cold points in the system), and the temperature feeds back into an exponential function that drives the power consumption even higher.

In this case, a real-world test case, my 3770k requires 1.214V (measured with a voltmeter) to be stable at 4.6GHz if the temperature is kept below 65°C, but as the temperature rises up to the point of TJMax (105°C), the voltage required to maintain stable operation increases from 1.214V to 1.277V, an increase of 5.2% in the voltage alone.

And the resulting increase in the CPU's power consumption (isolated from the rest of the system) rises from 121W to 152W (31.6W w/o the rounding) as the temperature climbs from 65°C to 105°C.

That is a 26% increase in power usage (31.6/121) just because we had to deal with the rising temperatures causing an increase in static leakage as well as requiring us to increase the operating voltage for stability purposes.

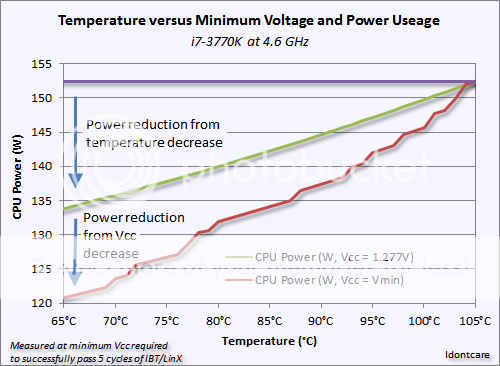

Now let's look at this from a different perspective, Intel's perspective, and touch on JumpingJack's comment a bit in the process.

Intel must ensure their CPU's are set to have enough voltage that they can reliably operate all the way up to TJMax at any given clockspeed within the spec. So while we enthusiast OC'ers have within our control the ability to optimize the Vcc to a value lower than that required for TJMax stability, Intel does not have that luxury.

So which pays more dividends in practice? Lowering the temperature or lowering the voltage? (naturally, and ideally, we'd find ourselves investing our time and dollars attempting to optimally lower both, of course)

Well that is what the graph above analyzes. If we took the Intel approach and determined the max voltage necessary for stable operation at say 4.6GHz when the chip hits TJMax (which it will and does if you are using the stock HSF) then you need 1.277V for my 3770k. Intel would of course set the VID value to a number that was much higher than this, but let's say for the sake of argument they use the minimum allowed value as I have done.

Now as enthusiasts we spend money buying 3rd party HSF coolers to lower our temperatures, increase CPU longevity, lower power usage, etc. So what happens if we do that but we don't bother to re-optimize the Vcc?

In the case of my 3770k the temperature decreases from 105°C to 65°C and the power consumption falls from 152.5W to 134W, a decrease of nearly 19W or 12.2% less power usage. This is a decrease in power usage solely due to less static power losses from lower leakage current because we invested in better cooling and reduced our temperatures without touching the voltage parameter. (the purple to olive-green line in the graph above)

If we further optimize the system to lower the voltage needed for stable operation then we can lower the power usage even more, from 134W to 121W at 65°C by reducing the voltage from 1.277V to 1.214V...this is an additional 8.5% reduction in power consumption simply from being able to lower the voltage because the signal-to-noise ratio has improved because the temperature has been reduced.

Of course lowering the Vcc results in a lower temperature which results in a lower power consumption which results in a lower temperature which results in a lower required Vcc, etc, as the thermal runaway effect goes into reverse and the feedback results in a substantially lower power consumption.

And so we see that it is roughly 50/50 for us enthusiasts in terms of controlling power-consumption by way of controlling the voltage necessary for stable operation at a given clockspeed versus controlling the operating temperature by way of improving the cooling of the CPU.
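The "roughly 50/50" split can be checked from the rounded figures quoted above. This is just arithmetic on the posted numbers; the variable names are mine.

```python
# Rounded figures quoted above for the 3770k at 4.6GHz.
P_HOT_HIGH_V = 152.5    # W at 105°C, 1.277V
P_COOL_HIGH_V = 134.0   # W at 65°C, 1.277V
P_COOL_LOW_V = 121.0    # W at 65°C, 1.214V

saved_by_cooling = P_HOT_HIGH_V - P_COOL_HIGH_V    # temperature alone
saved_by_voltage = P_COOL_HIGH_V - P_COOL_LOW_V    # voltage re-optimization
total_saved = saved_by_cooling + saved_by_voltage

# Both percentages are taken against the 152.5W starting point.
cooling_pct = 100 * saved_by_cooling / P_HOT_HIGH_V
voltage_pct = 100 * saved_by_voltage / P_HOT_HIGH_V

# Share of the total saving attributable to each knob.
cooling_share = 100 * saved_by_cooling / total_saved
voltage_share = 100 * saved_by_voltage / total_saved

print(f"cooling: {saved_by_cooling:.1f} W ({cooling_pct:.1f}% of starting power)")
print(f"voltage: {saved_by_voltage:.1f} W ({voltage_pct:.1f}% of starting power)")
print(f"split of total saving: {cooling_share:.0f}% cooling / {voltage_share:.0f}% voltage")
```

The quoted 8.5% for the voltage step appears to be measured against the 152.5W starting point rather than against 134W. With these rounded inputs the cooling share comes out somewhat larger than the voltage share, which is still in the neighborhood of the 50/50 described above.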

Ideally we'd spend our time and money doing both, but if we had to choose one to go after it would not really make much of a practical difference at the outlet whether we had simply endeavored to lower the operating temperature with better cooling or if we had endeavored to lower the CPU's voltage (while remaining stable).

But in the end the reason why we see both Intel's and AMD's chips spiral upwards in power-consumption as you get near the high end of their clockspeed band is because the clockspeed drives the voltage requirement which in turn drives the temperature upwards which in turn drives the leakage upwards as well as the required voltage all the more, and we end up operating on the hairy edge of a thermal runaway situation.

cbn

Lifer

Thanks for the explanation IDC!

So assuming core temps do not change, power consumption is squared (not cubed) with increases in voltage. But in real world conditions power consumption can increase at a rate greater than the square (due to higher xtor leakage from increased temperatures adding to the power consumption).

P.S. I am still reading through all those great experiment SB/IV links you provided.

Of course, as I do this, it is really interesting to think where xtor design and material science will go as these xtors get closer together (and thus potentially hotter).

Of course, as I do this, it is really interesting to think where xtor design and material science will go as these xtors get closer together (and thus potentially hotter).

That is what impressed me the most about Intel's 22nm versus their 32nm, they managed to get a die shrink which makes all the active components closer to one another (meaning the isolation dielectrics are even thinner) and yet they also managed to improve the dielectric leakage parameters such that for the same voltage and temperature the static leakage is practically the same on 22nm as it is on 32nm.

That is unheard of in shrinking; you always take a leakage hit on shrinking (well, always until now), and it's not all attributable to the transition to FinFET because even the leakage in BEOL would have increased at the M1 pitch if Intel had not done something awesome with, or to, the dielectric material used.
