Stuka87

Diamond Member

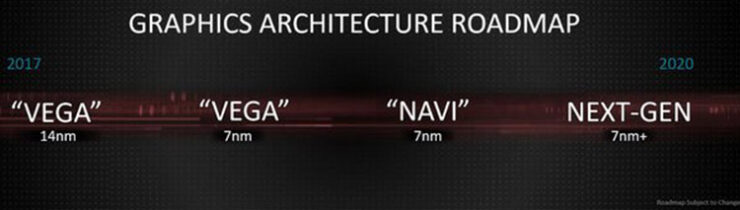

Why wouldn't AMD bin and name the chips themselves? Vega VII / Vega VII XT or whatever for the better performing chips.

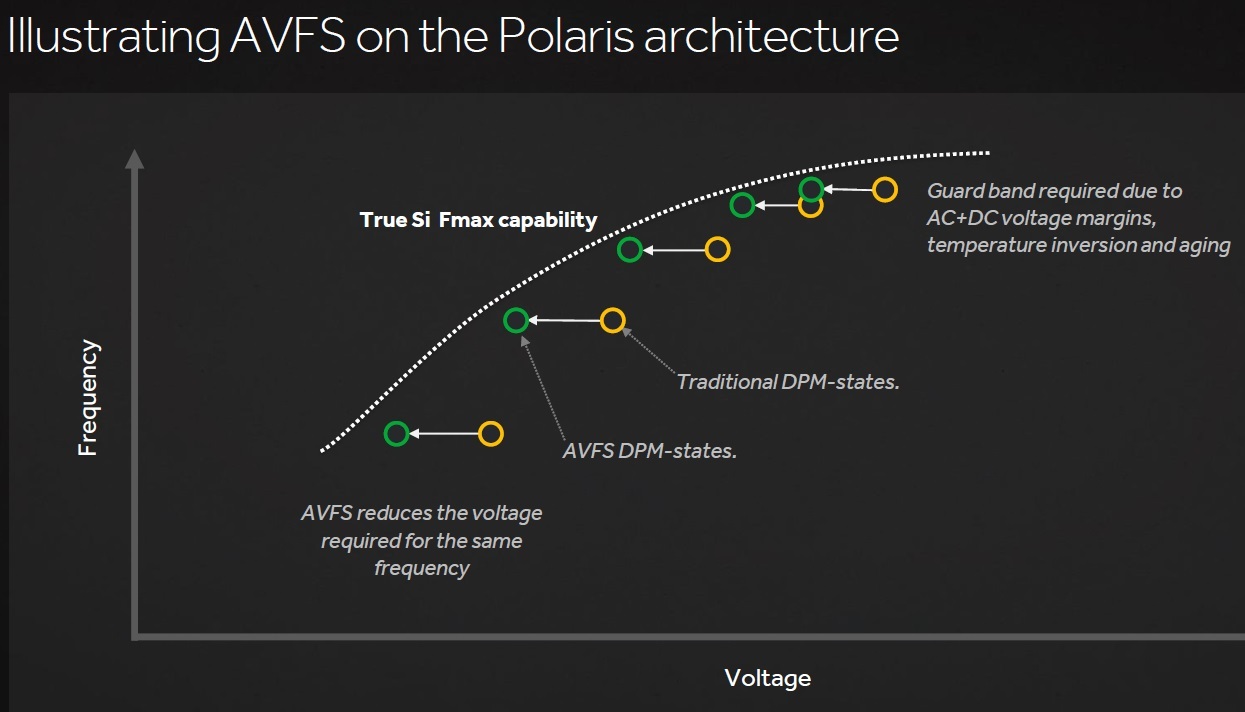

Cost. Binning every chip gets expensive, and a tighter spec means some chips won't pass it. Those that don't pass have to be either tossed or relegated to some other product. By setting the stock voltage higher, they know essentially every chip will work, so they lose less money to chips that would otherwise be thrown away.
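To put rough numbers on that trade-off, here is a minimal sketch. The voltages are made up for illustration (they are not AMD data), and `passing` and `yield_fraction` are hypothetical helper names. It assumes each chip has some minimum voltage at which it is stable, and compares one generous voltage for every chip against a tighter spec for a "better" bin.

```python
# Hypothetical minimum stable voltages (in volts) for ten chips.
# These values are invented for illustration, not measured data.
min_stable_v = [0.95, 0.98, 1.00, 1.02, 1.03, 1.05, 1.06, 1.08, 1.10, 1.12]


def passing(chips, set_voltage):
    """Return the chips that are stable at or below the given voltage."""
    return [v for v in chips if v <= set_voltage]


def yield_fraction(chips, set_voltage):
    """Fraction of chips that pass when all are run at set_voltage."""
    return len(passing(chips, set_voltage)) / len(chips)


# One generous voltage for every chip: nothing gets rejected.
conservative = yield_fraction(min_stable_v, 1.15)

# A tighter spec for a premium bin: only the best chips qualify.
aggressive = yield_fraction(min_stable_v, 1.03)

print(f"yield at 1.15 V: {conservative:.0%}")
print(f"yield at 1.03 V: {aggressive:.0%}")
```

With these invented numbers, every chip passes at the higher voltage, while only half would qualify for the tighter bin. The rest would need to be sold as something else or scrapped, on top of the cost of testing each chip.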