Man, RDNA5 just keeps getting wilder and wilder. I'm just baffled by the incredibly low L2 capacities.

AT2 with 36 Gbps GDDR7 still falls short of the 5070 Ti's memory bandwidth and halves the L2 (24 MB vs 48 MB).

Despite that, it could easily bury the 4090 (+37-40% 9070XT raster) if AMD pushes clocks.

Full AT0 matching GB202's spec with half the L2 is impressive, especially with AT0's extrapolated perf at ~50-60% ahead of the 5090.

And the low L2 for the LPDDR-based cards is even more impressive, but then again 384-bit LPDDR6-12000 = 570ish GB/s, about halfway between the 4070 Ti and 9070 XT.
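For anyone who wants to check the bandwidth figures, here is a rough Python sketch of the arithmetic. It assumes the simple theoretical-peak formula (bus width in bytes times per-pin data rate) and ignores any protocol or LPDDR6 packet overhead. The AT2/AT3 entries are the rumored specs from this post, the shipping-card entries are the commonly quoted public specs, and the function and dictionary names are just made up for illustration.

```python
def peak_bandwidth_gbs(bus_width_bits: int, data_rate_gbps: float) -> float:
    """Theoretical peak bandwidth in GB/s: bus width in bytes * per-pin rate in Gbps."""
    return bus_width_bits / 8 * data_rate_gbps


# (bus width in bits, per-pin data rate in Gbps)
configs = {
    "AT2 (rumored, GDDR7)": (192, 36.0),
    "RTX 5070 Ti": (256, 28.0),
    "AT3 (rumored, LPDDR6-12000)": (384, 12.0),
    "RTX 4070 Ti": (192, 21.0),
    "RX 9070 XT": (256, 20.0),
}

bandwidth = {name: peak_bandwidth_gbs(*cfg) for name, cfg in configs.items()}

for name, gbs in bandwidth.items():
    print(f"{name}: {gbs:.0f} GB/s")

# Midpoint between the 4070 Ti and 9070 XT, to compare against the AT3 figure.
midpoint = (bandwidth["RTX 4070 Ti"] + bandwidth["RX 9070 XT"]) / 2
print(f"4070 Ti / 9070 XT midpoint: {midpoint:.0f} GB/s")
```

Under those assumptions AT2 lands a bit under the 5070 Ti, and the 384-bit LPDDR6 config sits within a few GB/s of the 4070 Ti / 9070 XT midpoint.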

Is this low L2 a result of the new decentralized SE-level scheduling paradigm, @Kepler_L2?

That is the only explanation I can think of that could have such a drastic impact. ADC and WGS within SEs plus local launchers mean that all caches effectively move up one tier: L0 now acts as both L1 and L0 (WGP self-launch), L1 acts as L2 (ADC + WGS), and L2 is now nothing more than a work item victim cache.

Performing all scheduling and dispatch at the Shader Engine level, instead of orchestrating it through a central command processor, means that, with the exception of occasional scratch buffer spillovers into L2, only work item data resides in L2.

Please correct me if this observation is incorrect.

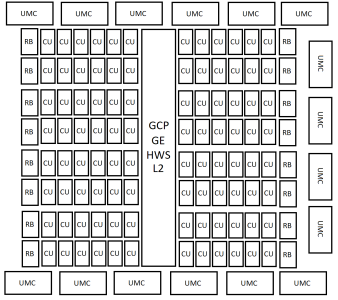

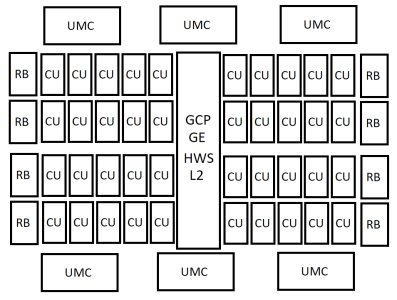

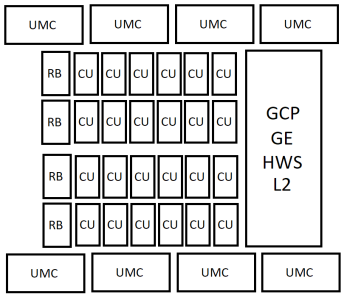

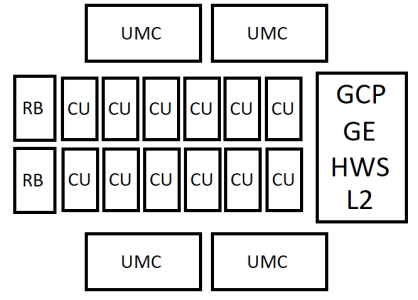

SKU spec table

| SKU | CUs (new/old) | L2 (MB) | PHY type | Mem bus (bit) | SE/SA | CU/SE (new/old) |
| --- | --- | --- | --- | --- | --- | --- |
| AT0 | 96/192 | 64 | GDDR7 | 512 | 8/16 | 12/24 |
| AT2 | 40/80 | 24 | GDDR7 | 192 | 4/8 | 10/20 |
| AT3 | 24/48 | 32 | LPDDR5X/6 | 256/384 | 2/4 | 12/24 |
| AT4 | 12/24 | 16 | LPDDR5X/?6 | 128/?192 | 1/2 | 12/24 |
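
To put the L2 numbers in the table on a per-CU basis, here is a small Python sketch. It only uses the rumored figures from the table (the "new" CU counting, L2 in MB, and SE count). The dictionary layout and the helper name are hypothetical, and none of this is confirmed hardware data.

```python
# name: (CUs in the "new" counting, L2 in MB, shader engines), all from the table
skus = {
    "AT0": (96, 64, 8),
    "AT2": (40, 24, 4),
    "AT3": (24, 32, 2),
    "AT4": (12, 16, 1),
}


def per_se_and_l2(cus: int, l2_mb: int, shader_engines: int) -> tuple[float, float]:
    """Return (CUs per shader engine, MB of L2 per CU) for one SKU."""
    return cus / shader_engines, l2_mb / cus


for name, spec in skus.items():
    cu_per_se, l2_per_cu = per_se_and_l2(*spec)
    print(f"{name}: {cu_per_se:.0f} CU/SE, {l2_per_cu:.2f} MB L2 per CU")
```

The CU/SE results agree with the last column of the table. On the rumored numbers, the GDDR7 parts come out around 0.6-0.7 MB of L2 per new-style CU, while the LPDDR parts come out around 1.3 MB.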