Those want membw that LPDDR can never offer.

BTW, have you seen the picture of MI450, with HBM, and the base die of each HBM stack also having a memory controller for 2x LPDDR channels? Looks insane...

> BTW, have you seen the picture of MI450, with HBM, and the base die of each HBM stack also having a memory controller for 2x LPDDR channels? Looks insane...

Where's that posted?

> Those want membw that LPDDR can never offer.

For bulk inferencing where multiple models may be resident, a MUCH bigger pool of LPDDR per card will mean lower costs, better component availability, less memory thrashing, and lower cooling requirements and failure rates over the lifetime of ownership.

DC GPUs have their FF GFX h/w long excavated.

Thanks. 🙂

> For bulk inferencing where multiple models may be resident, a MUCH bigger pool of LPDDR per card will mean lower costs, better component availability, less memory thrashing, and lower cooling requirements and failure rates over the lifetime of ownership.

Tons of words to say tokens/sec ratio will be bad.

> BTW, have you seen the picture of MI450, with HBM, and the base die of each HBM stack also having a memory controller for 2x LPDDR channels? Looks insane...

Looks normal.

Buncha trolls here 😛 Wake me up when y'all want to talk about inferencing that doesn't melt a hole in the earth and require multiple nuclear plants as a power supply...

> Tons of words to say tokens/sec ratio will be bad.

Tokens / Joule and Tokens / $ matter.
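For anyone who wants to put numbers on this argument, here is a rough sketch of the three metrics being thrown around. It assumes the usual back-of-envelope model for batch-1 decode (every generated token streams all the weights from memory once, so tokens/sec is capped at bandwidth divided by model size). The function names and every figure below are made-up placeholders, not specs or measurements for any real card.

```python
def decode_tokens_per_sec(mem_bw_gb_s: float, model_gb: float) -> float:
    """Upper bound on batch-1 decode rate, assuming each token reads all weights once."""
    return mem_bw_gb_s / model_gb


def tokens_per_joule(tokens_per_sec: float, watts: float) -> float:
    """Energy efficiency: tokens/sec divided by joules/sec (watts)."""
    return tokens_per_sec / watts


def tokens_per_dollar(tokens_per_sec: float, card_price_usd: float,
                      lifetime_hours: float) -> float:
    """Tokens produced over the card's service life per dollar of purchase price.

    Ignores electricity, cooling, and hosting costs (a deliberate simplification).
    """
    return tokens_per_sec * lifetime_hours * 3600 / card_price_usd


if __name__ == "__main__":
    # Hypothetical placeholder cards; none of these numbers come from a datasheet.
    cards = {
        "card_a": {"bw_gb_s": 4000.0, "watts": 700.0, "price": 30000.0},
        "card_b": {"bw_gb_s": 500.0, "watts": 150.0, "price": 8000.0},
    }
    model_gb = 70.0  # assumed size of the resident weights

    for name, c in cards.items():
        tps = decode_tokens_per_sec(c["bw_gb_s"], model_gb)
        print(name,
              f"tok/s<={tps:.1f}",
              f"tok/J={tokens_per_joule(tps, c['watts']):.4f}",
              f"tok/$={tokens_per_dollar(tps, c['price'], 3 * 8760):.0f}")
```

The bound only covers the bandwidth-limited case; batching, KV-cache traffic, and compute limits all move the real numbers, which is why measured benchmarks with power attached would settle this better than any sketch.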

> Wake me up when y'all want to talk about inferencing

Wake me up when Qualcomm wants to talk about inference. The joke I posted above only works because QC did not offer any meaningful metric that presents their hardware as efficient. No performance numbers, no power usage attached to that performance.

> Buncha trolls here 😛

SemiAnalysis make their living out of taking both the industry in general and AI in particular VERY seriously. So sleep on this: if QC had a truly valuable product in their hands, we'd be swimming in benchmarks and efficiency claims.

> Wake me up when Qualcomm wants to talk about inference. The joke I posted above only works because QC did not offer any meaningful metric that presents their hardware as efficient. No performance numbers, no power usage attached to that performance.

> SemiAnalysis make their living out of taking both the industry in general and AI in particular VERY seriously. So sleep on this: if QC had a truly valuable product in their hands, we'd be swimming in benchmarks and efficiency claims.

I'm not convinced by someone just because he takes something seriously; you still have to know what you're talking about, and not have some narrative agenda in mind before writing. Not sure I can take an appeal to authority, or a false-causation claim about the lack of benchmarks, seriously either.

They didn't just enter the AI market; they have had success in DCs with their AI 100s, released in 2019, and have dominated mobile AI in both hardware and software for a decade (until MediaTek's strong entry this year...). They pioneered model quantization techniques and have a unique perception stack that uses gauge equivariant CNNs. Along with nVidia's DLSS, their modem/RF systems are among the few pieces of consumer tech that use non-trivial neural nets fruitfully, wholly at the client level.

Given their low-power pedigree, I expect the parts to be very efficient, and their direct addressing of memory-movement power overhead with a near-compute architecture looks right on the money to me. I expect the parts to be a popular choice for inferencing, since being easier to cool can improve compute density per rack.

> How much success have they had in the DC AI market

None.

> I know the WoA market isn't exactly blowing up right now but I still thought I should post this anyway...

So... Windows on ARM is starting to gain more adopters, even if slowly?

Rust 1.91 Promotes Windows On 64-bit ARM To Tier-1 Status - Phoronix

www.phoronix.com

> So... Windows on ARM is starting to gain more adopters, even if slowly?

Just means that on the Rust language side of things WoA is at least being given the same maintenance and feature priority as other tier 1 platforms.

All things considered, that's not a great result for the X2 Elites vs the R9. 12-core vs 12-core, the Snapdragon CPU is ~6% faster at 50W, ~6% at 40W, ~9% at 30W, and ~15% at 20W.

> All things considered, that's not a great result for the X2 Elites vs the R9. 12-core vs 12-core, the Snapdragon CPU is ~6% faster at 50W, ~6% at 40W, ~9% at 30W, and ~15% at 20W.

The M4 Max achieves 2000+ points with a power consumption of 57-60 watts.

The 18-core looks very impressive though.

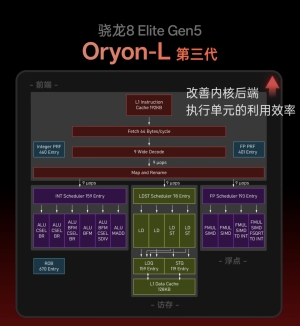

A lot of strange stuff in the slide deck.