igor_kavinski


I'm a bit confused.

Are pipeline stages == clock cycles?

That's what DavidC1 seems to be implying with that post.


No, but adding pipeline stages allows designers to increase clock speed.
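
A minimal sketch of why extra stages raise the clock, under an idealized model that is an assumption here: a fixed amount of logic delay is split evenly across N stages, and every stage also pays a fixed latch/flip-flop overhead. The delay figures (2000 ps of logic, 50 ps per latch) are made up for illustration; real designs never split the work perfectly evenly.

```python
# Idealized pipeline timing model (hypothetical numbers, not from any real CPU).

def cycle_time_ps(total_logic_ps: float, stages: int, latch_overhead_ps: float) -> float:
    """Clock period when the logic is split evenly across `stages`,
    plus a fixed per-stage latch overhead."""
    return total_logic_ps / stages + latch_overhead_ps


def clock_ghz(period_ps: float) -> float:
    """Convert a clock period in picoseconds to a frequency in GHz."""
    return 1000.0 / period_ps


def instruction_latency_ps(total_logic_ps: float, stages: int, latch_overhead_ps: float) -> float:
    """Time for one instruction to traverse the whole pipeline."""
    return stages * cycle_time_ps(total_logic_ps, stages, latch_overhead_ps)


if __name__ == "__main__":
    LOGIC_PS = 2000.0  # hypothetical total logic delay
    LATCH_PS = 50.0    # hypothetical per-stage overhead
    for n in (10, 20, 30):
        period = cycle_time_ps(LOGIC_PS, n, LATCH_PS)
        print(f"{n:2d} stages: {clock_ghz(period):.2f} GHz, "
              f"latency {instruction_latency_ps(LOGIC_PS, n, LATCH_PS):.0f} ps")
```

With these numbers, 10 stages give 4.00 GHz and 20 stages give 6.67 GHz, but the time for one instruction to get through grows from 2500 ps to 3000 ps. Each stage is still one (shorter) clock cycle; more stages buy clock speed, not lower latency.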


One pipeline stage is usually one clock cycle, but that’s not always the case. IIRC the P4 ran its ALUs at double clock speed (where is Sarah when you need her?).
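
To make the P4 aside concrete, here is a toy model (an assumption, not Intel's documented timing): if a simple ALU runs on a clock that is `alu_clock_multiplier` times the core clock, a chain of dependent single-cycle ALU ops finishes in fewer core cycles than it has ops. It deliberately ignores scheduling, bypass networks and everything else that made real NetBurst timing messy.

```python
from fractions import Fraction


def dependent_chain_core_cycles(num_ops: int, alu_cycles_per_op: int,
                                alu_clock_multiplier: int) -> Fraction:
    """Core-clock cycles for `num_ops` back-to-back dependent ALU ops.

    Each op occupies `alu_cycles_per_op` cycles of the ALU's own clock,
    and that clock runs `alu_clock_multiplier` times faster than the core clock.
    """
    if num_ops < 0 or alu_cycles_per_op < 1 or alu_clock_multiplier < 1:
        raise ValueError("invalid parameters")
    return Fraction(num_ops * alu_cycles_per_op, alu_clock_multiplier)


if __name__ == "__main__":
    # Four dependent adds, one ALU cycle each.
    print(dependent_chain_core_cycles(4, 1, 1))  # normal ALU: 4 core cycles
    print(dependent_chain_core_cycles(4, 1, 2))  # double-pumped ALU: 2 core cycles
```

In this model a single op on the double-pumped ALU takes half a core cycle, so "one stage" and "one core clock cycle" stop lining up.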

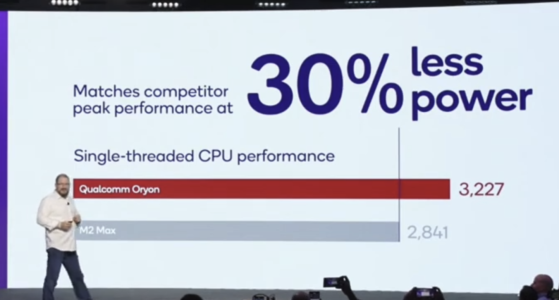

“We expect PC to be the next biggest driver of diversification for the company,” says Amon. Business will be “slow and steady as the market transitions,” he admitted, but we already see some good signs coming out of the woodwork. Amon said that some Snapdragon X PCs have already sold out, while Geekbench posted on X that 6.5% of Geekbench 6 benchmarks from June 15 to July 15, 2024, were run on Snapdragon X devices. Those are good signs for Qualcomm, especially as the platform had launched less than a month before that.

Should Qualcomm create a minimum laptop specification standard, like Intel Evo?

They don't have the volume to enforce anything.

Some speculated this is due to the bad power efficiency of the 8G4.

It is the return of the SD810, yes, but that's unrelated to the battery capacity bumps.

And now you understand why GPU-driven games such as Rainbow Six Siege run very poorly on Qualcomm Snapdragon X. The same goes for Nanite.

Other Android GPU vendors have similar bottlenecks; this is not just Qualcomm. These GPUs are optimized for traditional vertex shader + pixel shader (VS+PS) workloads. All large data (matrices, materials) should be loaded from uniform buffers at fixed addresses, because that hits the fast paths.
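
A rough way to picture the fixed-address fast path, under a simplified model that is an assumption here and not a description of Adreno's actual hardware: when every lane of a wave reads the same uniform-buffer address, the hardware can fetch once and broadcast; when each lane computes its own index into a raw buffer (typical of GPU-driven rendering, where each instance looks up its own material), the load fans out over many cache lines. The 64-lane wave, 64-byte cache line and 256-byte material stride are hypothetical values.

```python
def distinct_lines(lane_addresses: list[int], line_bytes: int = 64) -> int:
    """Number of distinct cache lines one wave-wide load touches."""
    return len({addr // line_bytes for addr in lane_addresses})


def is_uniform(lane_addresses: list[int]) -> bool:
    """True when all lanes read the same address (broadcast-friendly)."""
    return len(set(lane_addresses)) == 1


if __name__ == "__main__":
    WAVE = 64               # hypothetical lanes per wave
    MATERIAL_STRIDE = 256   # hypothetical bytes per material record

    # Classic VS+PS style: every lane reads the same constant at a fixed offset.
    fixed = [128] * WAVE

    # GPU-driven style: each lane fetches its own material via a per-lane index.
    material_ids = [(lane * 7) % 32 for lane in range(WAVE)]
    indexed = [mid * MATERIAL_STRIDE for mid in material_ids]

    print("fixed:  ", is_uniform(fixed), distinct_lines(fixed))      # True 1
    print("indexed:", is_uniform(indexed), distinct_lines(indexed))  # False 32
```

The fixed-address load touches one line that every lane shares; the indexed load touches 32 separate lines for the same amount of useful data per lane.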

If you want to know how HypeHype’s new renderer optimizes around these bottlenecks, check our new SIGGRAPH 2024 presentation (in Moving Mobile Graphics track). Slide deck will be public next week.

Most people don’t know that the biggest improvement in Turing wasn’t ray tracing or tensor cores. It was the 28-cycle L1$ latency (vs. 85 cycles) and 2x L1$ bandwidth (source: CUDA paper). This is great for GPU-driven rendering + V-buffer, and great for ray tracing too.
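
Why L1 latency matters so much here: GPU-driven rendering and V-buffers chase indirections, and each hop is a dependent load that cannot start until the previous one returns. A back-of-the-envelope sketch using the two latencies quoted above; the three-hop chain is a hypothetical example, and the model assumes every hop hits in L1.

```python
def chain_latency_cycles(hops: int, l1_latency_cycles: int) -> int:
    """Cycles for `hops` dependent loads that all hit in L1.

    Each load's address depends on the previous load's result,
    so the latencies add up instead of overlapping.
    """
    return hops * l1_latency_cycles


if __name__ == "__main__":
    HOPS = 3  # hypothetical: instance ID -> instance data -> material record
    before = chain_latency_cycles(HOPS, 85)
    turing = chain_latency_cycles(HOPS, 28)
    print(before, turing, round(before / turing, 2))  # 255 84 3.04
```

Switching to other warps can hide some of that latency, but only if enough independent warps are in flight; a shorter dependent chain needs fewer of them.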

Nvidia wasn’t great at GPU compute before Turing. They also lacked async compute, and Kepler emulated LDS atomics. Turing was a massive enabler for Nvidia, which is now a $3 trillion company. But Pascal’s geometry processing was so fast that gamers didn’t notice GCN’s compute advantage.

Back in the Pascal days, Nvidia made a series of blog posts advising devs to use uniform buffers for their new deferred lighting shaders. IIRC Pascal took a 30% hit on modern raw-buffer compute code. Now Qualcomm is in the same position when you run modern workloads.


Case in point: CDNAx is still based on Vega/GFX9.

Very little of that is left outside of the overall 1 scheduler/4 SIMD arrangement.

Snapdragon 8 Gen 4 might match the single-core performance of Apple A18 in Geekbench 5, where SME does not add to the score.

I find that claim questionable.

Do we know the X Elite GB5 score? I can’t find anything on it. That’d be a decent proxy.

Here you go: https://browser.geekbench.com/v5/cpu/search?utf8=✓&q=X1e84100

Would have to get around 2500-2600 to challenge.

Oh no. Apple is stronger in GB5 than GB6?

| Single-core | GB5 | GB6 | CB2024 |
|---|---|---|---|
| X1E-84 | 1950 | 2950 | 131 |
| M3 Max | 2350 | 3150 | 144 |
| Delta | +20.5% | +6.7% | +9.9% |
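
The Delta row is the relative difference (M3 Max / X1E-84 − 1). A quick check of that arithmetic using the rounded scores from the table:

```python
# Single-core scores copied from the table: (X1E-84, M3 Max).
SCORES = {
    "GB5": (1950, 2350),
    "GB6": (2950, 3150),
    "CB2024": (131, 144),
}


def delta_pct(base: float, other: float) -> float:
    """Percentage by which `other` exceeds `base`."""
    return (other / base - 1.0) * 100.0


if __name__ == "__main__":
    for bench, (x1e, m3max) in SCORES.items():
        print(f"{bench}: +{delta_pct(x1e, m3max):.1f}%")
```

With these rounded scores GB6 works out to about +6.8% rather than +6.7%, a small difference that likely comes from rounding the underlying scores; GB5 and CB2024 match the table.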

4.2 GHz is a massive jump in frequency; that plus a minor IPC improvement makes it possible for the 8 Gen 4 to reach ~3500 in GB6, which is A18 level.

Awesome, thank you. Yeah, so based on that, the X1E-84-100 scores around 1940 (median of 5 results). The A17 Pro is already at ~2130 on average.

The A18 Pro will probably be around 2400 (edited). It doesn’t seem possible that the Snapdragon 8 Gen 4 will be anywhere near that. I don’t even know if we can expect it to reach 2000.

The rumour says 4.37 GHz ST boost for the 8G4.
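
A naive projection behind numbers like ~3500, resting on two loudly labeled assumptions: the single-core score scales linearly with clock speed (it usually doesn't quite, since memory latency doesn't scale with core clock), and the X1E-84 GB6 score of ~2950 from the table was achieved at the X1E-84-100's 4.2 GHz boost clock.

```python
def scaled_score(base_score: float, base_ghz: float, new_ghz: float) -> float:
    """Project a score to a new clock, assuming perfectly linear scaling."""
    return base_score * new_ghz / base_ghz


def required_ipc_gain(projected: float, target: float) -> float:
    """Fractional per-clock improvement needed to go from `projected` to `target`."""
    return target / projected - 1.0


if __name__ == "__main__":
    BASE_SCORE = 2950    # X1E-84 GB6 single-core, from the table
    BASE_GHZ = 4.2       # assumed clock for that result
    RUMOURED_GHZ = 4.37  # rumoured 8G4 single-core boost
    TARGET = 3500        # "A18 level" figure from the thread

    projected = scaled_score(BASE_SCORE, BASE_GHZ, RUMOURED_GHZ)
    gain = required_ipc_gain(projected, TARGET)
    print(f"clock alone: ~{projected:.0f}, IPC gain still needed: {gain:.1%}")
```

Under these assumptions the clock bump alone lands around 3070, so reaching ~3500 would also need roughly a 14% per-clock gain over the X Elite's cores.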

Tbh I’d be very surprised if a mobile derivative of the X Elite performed as well as their laptop part at its highest wattage.

Keep in mind their GB6 score of ~2965 was for the 80W reference part. Their 23W reference design achieved ~2765.

If I had to guess, it’d be under 3000.

3250 seems too high. That’s almost 10% faster than the 80W reference.

Why wouldn’t they want to use that same performance core in their flagship laptops?

A single core is definitely not guzzling 80W.

Surely not. But the 23W design is more representative of a mobile SKU.

Also that 3200 is for Linux. In Windows it does 3000 (for reasons that I cannot explain -_-)