So I ran into an interesting piece of info on the Octane Render forums: one of the users benched both the GTX 1060 and the 1080 in OctaneBench, and the results are surprising, to say the least. Usually, when it comes to Octane, the most important stats performance-wise are the number of CUDA cores and the clock frequency. Obviously, higher means better/faster in both cases.

Knowing this, comparing different GPUs is fairly easy, at least within the same generation, where there are no architectural changes that could sway the results.

One example for all: the GTX 970 has an average score of 79. It has 1664 CUDA cores to the 980 Ti's 2816, which is 1.69x more in favor of the 980 Ti. 79 x 1.69 ≈ 134. That's slightly above what's listed as the average score of the 980 Ti, 126. The gap could perhaps be explained by some frequency differences, or the app simply does not scale 100 percent. Still, it's pretty close.
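For anyone who wants to redo that estimate with other cards, here is a rough Python sketch of the same arithmetic. It assumes the score scales linearly with CUDA core count and ignores clock speed entirely; the function name `scaled_score` is just something I made up for illustration.

```python
def scaled_score(base_score, base_cores, target_cores):
    """Estimate a score, assuming it scales linearly with CUDA core count.

    This ignores clock frequency and any architectural differences.
    """
    return base_score * target_cores / base_cores


# GTX 970: average score 79, 1664 CUDA cores. GTX 980 Ti: 2816 CUDA cores.
core_ratio = 2816 / 1664
estimate = scaled_score(79, 1664, 2816)

print(f"core ratio: {core_ratio:.2f}x")
print(f"estimated 980 Ti score: {estimate:.0f} (listed average: 126)")
```

The estimate overshoots the listed average by a few points, which fits the idea that the app does not scale perfectly with core count.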

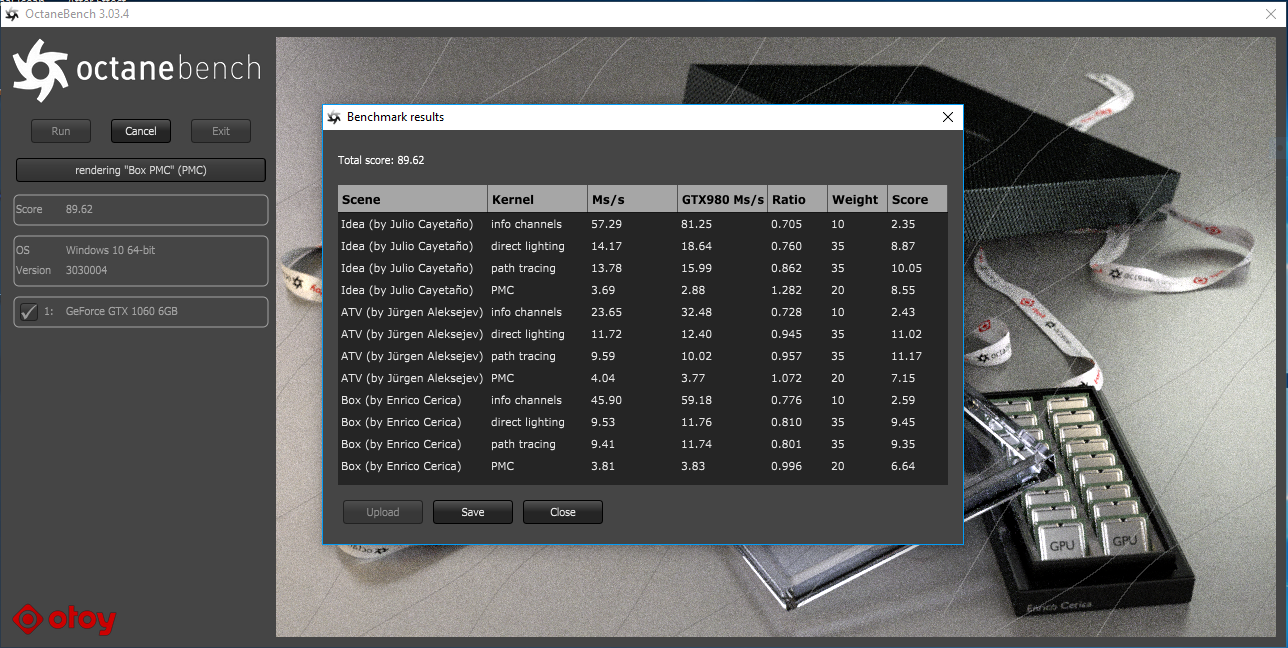

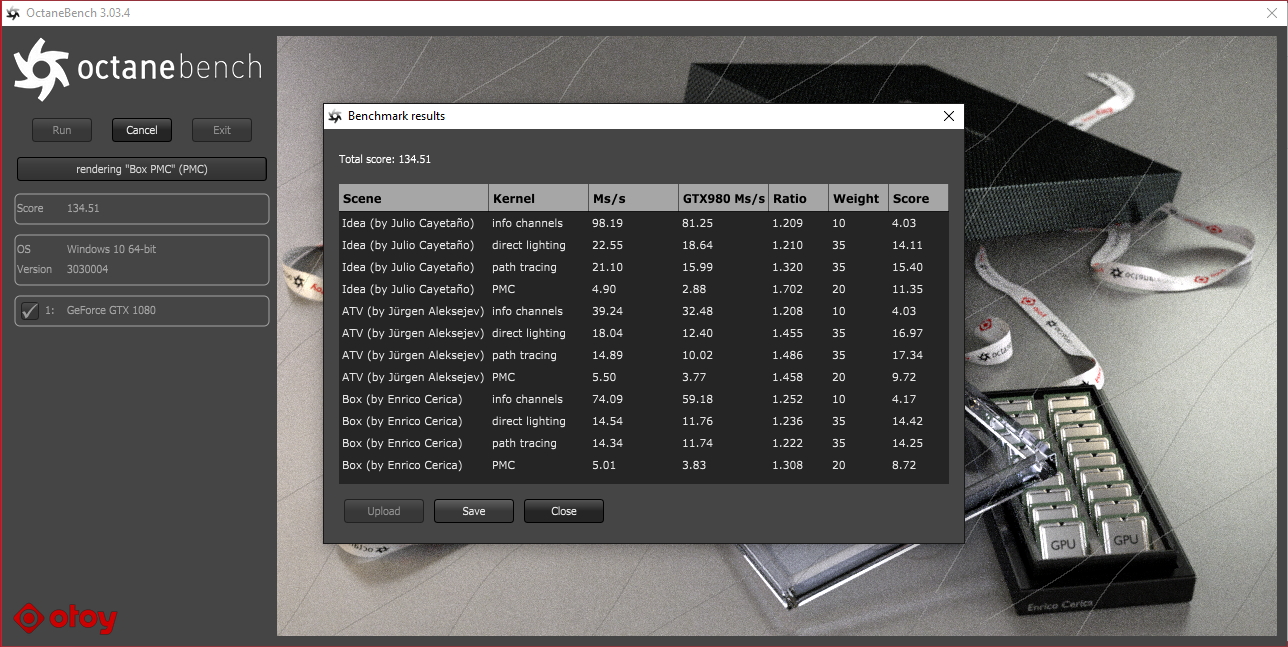

Now let's get to the 1060 and the 1080, both apparently at stock, and see this:

1060 = 89, 1080 = 134. That's 2/3 of the performance, even though the 1060 has only 1/2 of the CUDA cores. Stock frequencies should be more or less the same, I assume.
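To put a number on the mismatch, here is a quick Python sketch comparing the score ratio with the core ratio. The core counts (1280 for the 1060, 2560 for the 1080) are my assumption for the 6 GB 1060 and the stock 1080; they are not from the benchmark thread, and the variable names are just illustrative.

```python
# Scores from the forum post.
score_1060 = 89
score_1080 = 134

# Assumed CUDA core counts (6 GB GTX 1060 and GTX 1080); not from the thread.
cores_1060 = 1280
cores_1080 = 2560

score_ratio = score_1060 / score_1080
core_ratio = cores_1060 / cores_1080

# How much more score per core the 1060 delivers relative to the 1080.
per_core_advantage = (score_1060 / cores_1060) / (score_1080 / cores_1080)

print(f"score ratio 1060/1080: {score_ratio:.2f}")
print(f"core ratio 1060/1080:  {core_ratio:.2f}")
print(f"1060 score per core vs 1080: {per_core_advantage:.2f}x")
```

If those core counts are right, the 1060 gets roughly a third more score per core than the 1080, which is the part that does not fit simple linear scaling.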

Any idea what's going on?
