BFG10K

Lifer

Written:

www.techpowerup.com

www.computerbase.de

hothardware.com

www.kitguru.net

www.tomshardware.com

www.pcgameshardware.de

NVIDIA GeForce RTX 3080 Founders Edition Review - Must-Have for 4K Gamers

NVIDIA's new GeForce RTX 3080 "Ampere" Founders Edition is a truly impressive graphics card. It not only looks fantastic, performance is also better than even the RTX 2080 Ti. In our RTX 3080 Founders Edition review, we're also taking a close look at the new cooler, which runs quietly without...

Nvidia GeForce RTX 3080 FE Review

The Nvidia GeForce RTX 3080 FE kicks off "gaming Ampere". In the review, its predecessor, the GeForce RTX 2080 Super FE, doesn't stand a chance.

NVIDIA GeForce RTX 3080 Review: Ampere Is A Gaming Monster

The Ampere-based NVIDIA GeForce RTX 3080 is a total BEAST and we've got independent benchmarks, power, acoustics, thermals, and overclocking on tap.

Nvidia RTX 3080 Founders Edition Review - KitGuru

The time has come. Two years on from the launch of Turing and the RTX 20-series GPUs, Nvidia's Amper

www.kitguru.net

www.kitguru.net

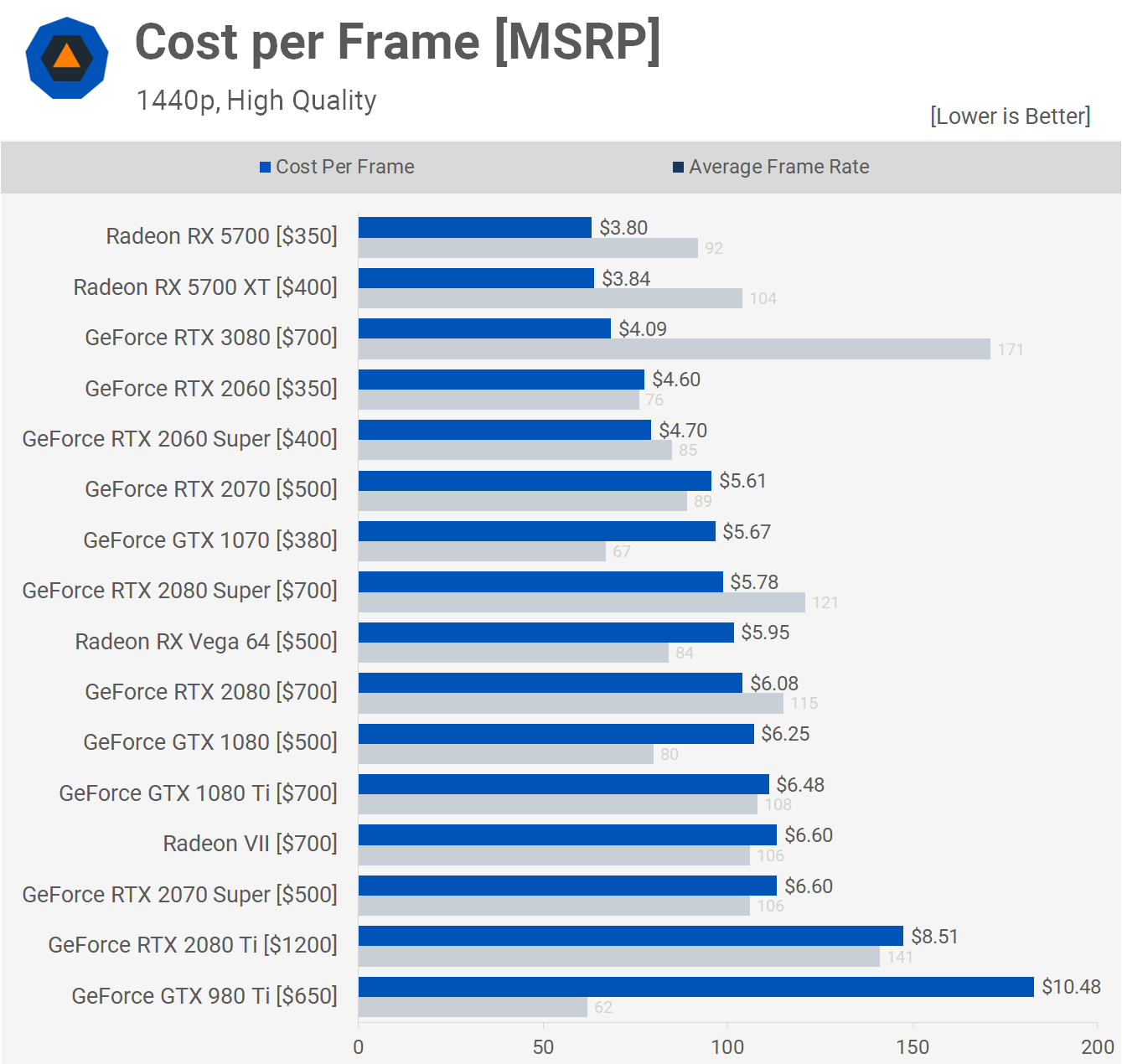

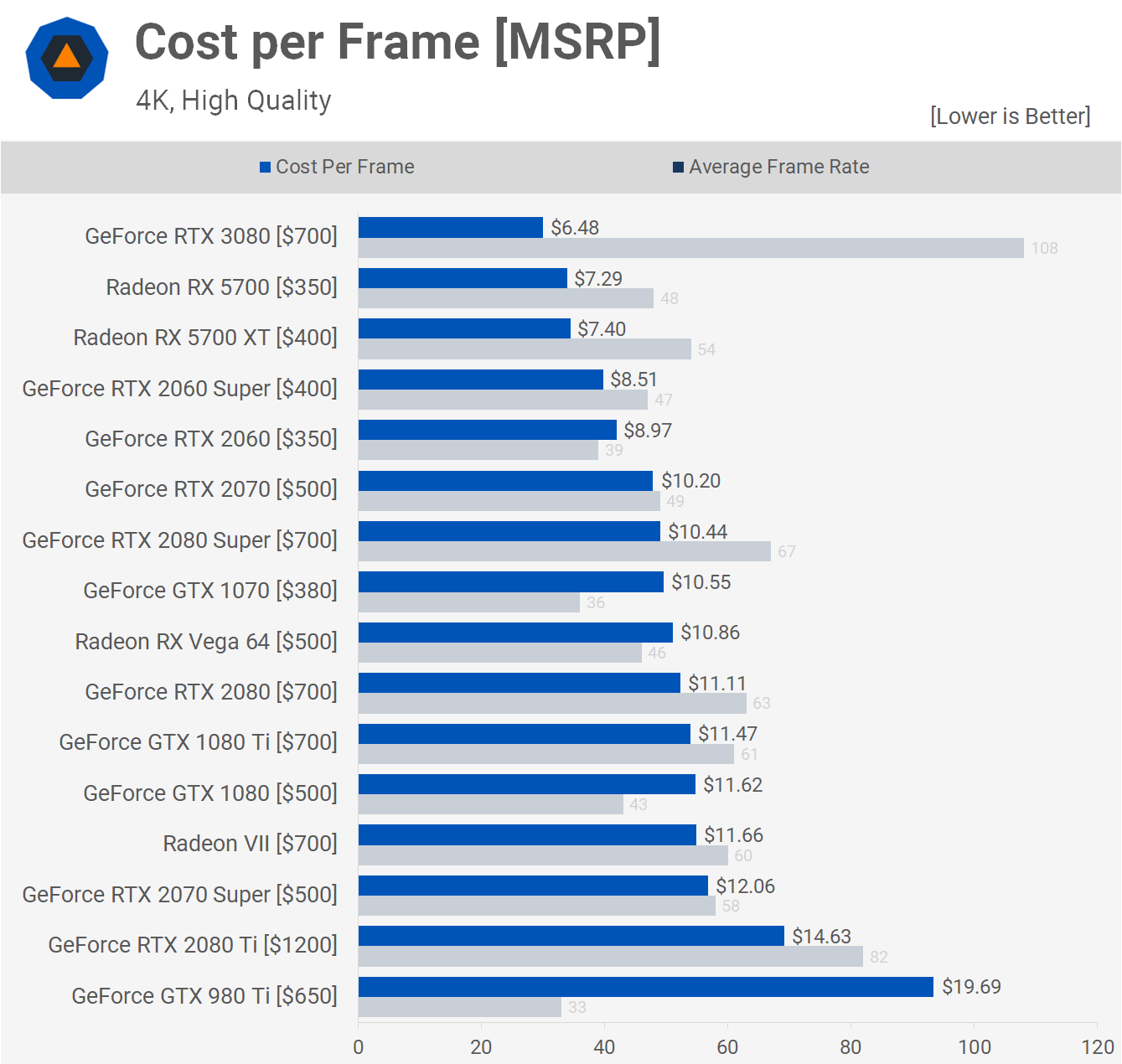

Nvidia GeForce RTX 3080 Founders Edition Review: A Huge Generational Leap in Performance

The GeForce RTX 3080 delivers big gains over Turing and the RTX 2080 Ti, but at a lower price.

Nvidia Geforce RTX 3080 in the XXL Review: Ampere, the Very Light Price Breaker [Update: Sales Launch]

The Geforce RTX 3080 in a gaming test, with numerous benchmarks and a placement in the performance ranking. PCGH used 20 games for the comparison.

Video:

The RTX 3080 Benchmarks... do they even come close to expectations?

The RTX 3080 Benchmarks are in... so do they even come close to what we expected?? Learn more about the Eclipse p500A and p500A D-RGB cases here! Non-RGB - h...

NVIDIA GeForce RTX 3080 Founders Edition Review: Gaming, Thermals, Noise, & Power Benchmarks

This is our review of the NVIDIA RTX 3080 video card, looking at the new Ampere GPU and its Founders cooler vs. the GTX 1080 Ti, RTX 2080 Ti, RTX 2080, 2070,...

A DIFFERENT RTX 3080 Review - Should You Upgrade NOW?

The RTX 3080 performance review with benchmarks is finally here! But this review is DIFFERENT. We talk about the impact of an RTX 3080 upgrade for people who...
