psolord


Now let’s move on to the quick Core i5-8600k with the 970. This is essentially a preview, since I will do more testing later on with the 1070 installed, in order to better highlight any differences in performance.

Again the SSD tests come first, with the system also at stock. Please note that all post kb4056892 patch benchmarks on the 8600k were done with the 1.40 ASRock Z370 Extreme4 BIOS, which included microcode fixes for the cpu regarding the Spectre and Meltdown vulnerabilities. It is therefore more solidly patched compared to the i7-860.

Ashampoo cpu check report verifies the system to be ok.

I have two SSDs: a Samsung 850 EVO 500GB and a SanDisk Extreme Pro 240GB. Needless to say, the screenshots with the lower performance are the ones of the patched system. I have the Windows version captured on those.

Unfortunately, there is a substantial and directly measurable performance drop on both SSDs, which reaches 1/3 of the performance on the smaller file sizes. On the bigger file sizes things are much better of course. I made a mistake and used different ATTO versions for the Samsung and SanDisk drives, but the performance drop has been recorded correctly for both anyway, since each drive was tested with the same version before and after.
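For anyone who wants to put a number on a drop like this from their own ATTO runs, here is a minimal sketch. The throughput values are made-up placeholders, not the measurements from the screenshots, and `percent_drop` is just a helper name chosen for this example.

```python
def percent_drop(before: float, after: float) -> float:
    """Return the performance loss from `before` to `after` as a percentage."""
    if before <= 0:
        raise ValueError("before must be positive")
    return (before - after) / before * 100.0


# Placeholder throughput in MB/s per transfer size.
# These are NOT the measured results, only illustrative numbers.
pre_patch = {"4 KB": 300.0, "64 KB": 500.0, "1 MB": 520.0}
post_patch = {"4 KB": 200.0, "64 KB": 450.0, "1 MB": 510.0}

drops = {size: percent_drop(pre_patch[size], post_patch[size]) for size in pre_patch}
for size, drop in drops.items():
    print(f"{size}: {drop:.1f}% slower after the patch")
```

Comparing per transfer size rather than one overall figure matters here, because the loss is concentrated in the small sizes.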

As for pure cpu tests, I didn’t do much. Just cpuz and cinebench.

Cinebench didn’t show a significant difference, but cpuz showed a drop on the multicore result. I then realized that I had used version 1.81 on the pre patch test and version 1.82 on the post patch test. I am not sure if this would affect things. Still, I trust cinebench more, since it’s a much heavier test.

Ok then, gaming benchmark time. i5-8600k @5GHz, GTX 970 @1.5GHz.

The pattern is the same as above.

Assassin's Creed Origins 1920X1080 Ultra

Gears of War 4 1920X1080 Ultra

World of Tanks Encore 1920X1080 Ultra

Grand Theft Auto V 1920X1080 Very High

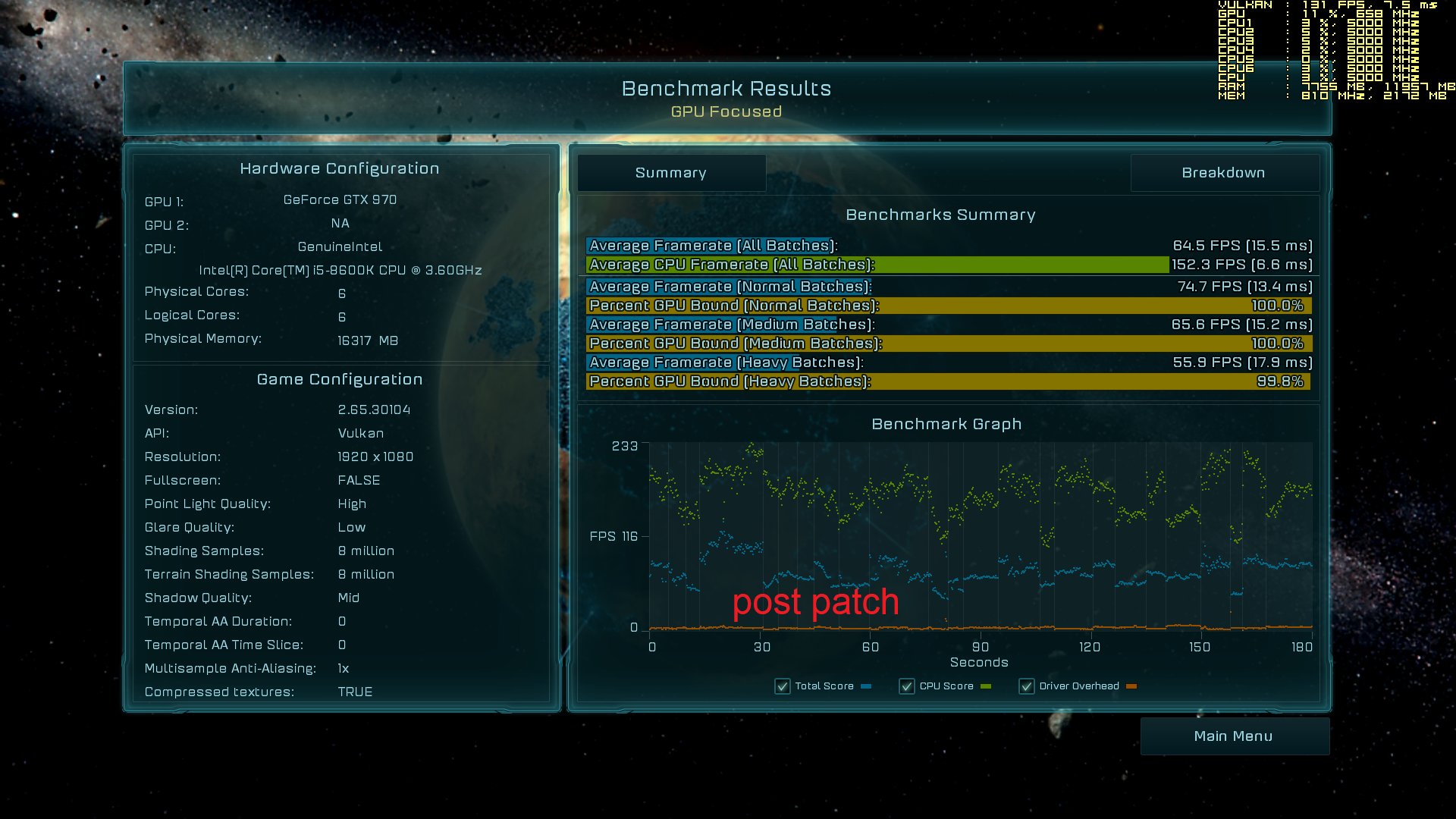

And I left Ashes of the Singularity for the end, because I only have post patch measurements, but there’s a reason I am including those too.

Again, as you can see, we have a measurable drop on Gears of War 4. It’s probable that with the 1070 the difference will be higher. That is not the only point however.

Let’s do a comparison on the above numbers. Take GTA V for example on the i7-860+1070. You will see that it has the same benchmarking result of 75fps average as the 8600k with the much slower 970. However, the 8600k’s 0.1% and 1% lows are considerably better. You can feel it while playing. This is a direct result of how cpu limited this game is. For reference, the 1070 with the 8600k gave me 115fps average, but this is a discussion for another time.
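To show how two runs can share an average yet differ in their lows, here is a rough sketch that derives the average, 1% low and 0.1% low from a list of frame times. It assumes one common definition (the average fps over the slowest 1% or 0.1% of frames). Benchmark tools differ on this, so it may not match the tool used for the results above. The frame times are synthetic, and `fps_summary` is a name made up for the example.

```python
def fps_summary(frame_times_ms: list[float]) -> dict[str, float]:
    """Average fps plus 1% and 0.1% lows from per-frame render times in ms."""
    if not frame_times_ms:
        raise ValueError("need at least one frame time")
    slowest_first = sorted(frame_times_ms, reverse=True)
    total = len(slowest_first)

    def low(fraction: float) -> float:
        # Assumption: a "low" is the mean fps over the slowest `fraction` of frames.
        count = max(1, int(total * fraction))
        worst = slowest_first[:count]
        return 1000.0 / (sum(worst) / len(worst))

    average_fps = 1000.0 * total / sum(frame_times_ms)
    return {"average": average_fps, "1% low": low(0.01), "0.1% low": low(0.001)}


# Synthetic frame times: both runs take 10 ms per frame on average.
steady = fps_summary([10.0] * 1000)
stuttery = fps_summary([9.0] * 900 + [19.0] * 100)

print(steady)
print(stuttery)
```

Both synthetic runs report the same average, but the second one spends a tenth of its frames at 19 ms, which only shows up in the lows.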

After that, you can take a look at the Gears of War 4 post patch cpu scores for both systems: 364fps for the 8600k, 199fps for the 860.

And of course we cannot ignore the king of cpu limits, Ashes of the Singularity. In the Vulkan test, which was the best for both systems, it gave us post patch an average cpu framerate of 152fps for the 8600k and 74fps for the i7-860.
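As a quick piece of arithmetic on the post patch cpu framerates quoted above, the sketch below works out how far ahead the 8600k still is. The numbers are the ones from this post. The dictionary layout and the `speedup` helper are only for illustration.

```python
# Post patch cpu framerates quoted in this post, in fps.
results = {
    "Gears of War 4": {"i5-8600k": 364.0, "i7-860": 199.0},
    "Ashes of the Singularity (Vulkan)": {"i5-8600k": 152.0, "i7-860": 74.0},
}


def speedup(newer_fps: float, older_fps: float) -> float:
    """How many times faster the newer cpu is than the older one."""
    return newer_fps / older_fps


ratios = {
    game: speedup(fps["i5-8600k"], fps["i7-860"]) for game, fps in results.items()
}
for game, ratio in ratios.items():
    print(f"{game}: the 8600k is {ratio:.2f}x the i7-860")
```

That works out to roughly 1.83x in Gears of War 4 and 2.05x in Ashes of the Singularity, with both systems patched and overclocked as described.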

Why am I saying all that and why am I comparing first and eighth generation cpus? Because as you can see even from these few tests, the i5-8600k continues to perform as an 8th gen cpu. It did not suddenly turn into a Lynnfield or something. Also the Lynnfield stayed a Lynnfield and did not become a Yorkfield or whatever.

I generally observe a severe doom and gloom attitude, and the consensus that our systems are only fit for the trash seems to have taken hold in some users’ minds. This is not what I am seeing however. Always talking from a home user perspective.

I am not trying to diminish the importance of the issue. It’s very serious and it is sad that it has broken out the way it did. However, I do see some seriousness from all affected parties. I mean, ASRock brought out the BIOS in what, less than a week or something.

Now regarding the professional markets, I can understand that things will be much worse, especially with the very real IO performance degradation. Even some home users with fast SSDs will be rightfully annoyed. In these situations I believe some form of compensation should take place, or maybe some hefty discounts for future products. Heck, I know that I would be furious if I had seen a severe degradation in the gaming graphics department, which is my main focus.

Of course testing will continue. I have a good 1070+8600k pre patch gaming benchmarks database already, which I will compare with some select post patch benchmarks. If I find anything weird I will repost.

For reference, here are my pre patch benchmarking videos, from which the above pre patch results came. I did no recordings for the post patch runs.

Take care.

Assassin's Creed Origins 1920X1080 ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Tom Clancy's Rainbow Six Siege 1920X1080 Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Forza Motorsport 7 1920X1080 Ultra 4xAA GTX 1070 @2Ghz CORE i7-860 @4GHz

Ashes of the Singularity 1920X1080 High DX11+DX12+Vulkan GTX 1070 @2Ghz CORE i7-860 @4GHz

Gears of War 4 1920X1080 Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Gears of War Ultimate 1920X1080 maxed GTX 1070 @2Ghz CORE i7-860 @4GHz

Prey 1920X1080 very high GTX 1070 @2Ghz CORE i7-860 @4GHz

Total War Warhammer 2 1920X1080 Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Unigine Valley 1920X1080 Extreme HD GTX 1070 @2Ghz CORE i7-860 @4GHz

Shadow of War 1920X1080 Ultra+V.High GTX 1070 @2Ghz CORE i7-860 @4GHz

World of Tanks Encore 1920X1080 Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

The Evil Within 2 1920X1080 Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Road Redemption 1920X1080 fantastic GTX 1070 @2Ghz CORE i7-860 @4GHz

Dirt 4 1920X1080 4xAA Ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

F1 2017 1920X1080 ultra + high GTX 1070 @2Ghz CORE i7-860 @4GHz

Dead Rising 4 1920X1080 V.High GTX 1070 @2Ghz CORE i7-860 @4GHz

ELEX 1920X1080 maxed GTX 1070 @2Ghz CORE i7-860 @4GHz

Project Cars 2 1920X1080 ultra GTX 1070 @2Ghz CORE i7-860 @4GHz

Grand Theft Auto V 1920X1080 V.High GTX 1070 @2Ghz CORE i7-860 @4GHz

i5-8600k + 970

World of Tanks Encore 1920x1080 Ultra GTX 970 @1.5Ghz Core i5-8600k @5GHz

Grand Theft Auto V 1920x1080 V.High outdoors GTX 970 @1.5Ghz Core i5-8600k @5GHz

Gears of War 4 1920x1080 Ultra GTX 970 @1.5Ghz Core i5-8600k @5GHz

Assassin's Creed Origins 1920x1080 Ultra GTX 970 @1.5Ghz Core i5-8600k @5GHz
