> Zen7 on AM5?

No, I think kepler means that Zen 7 is 5 years away /s 🙂

Info LPDDR6 @ Q3-2025: Mother of All CPU Upgrades

Page 13 - Seeking answers? Join the AnandTech community: where nearly half-a-million members share solutions and discuss the latest tech.

You are still getting a third more B/W at the same frequencies.

For example, standard dual-channel LPDDR5X @ 10667 MT/s will get ~170 GB/s,

standard dual-channel LPDDR6 @ 10667 MT/s will get ~227.5 GB/s.

But yeah, LPDDR6 is still quite some time away from being available and then becoming mainstream. DDR6 seems even further away.
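A quick sanity check of the two figures above, as a minimal sketch. It assumes "dual channel" means a 128-bit LPDDR5X bus versus a 192-bit LPDDR6 bus, and that only 256 of every 288 LPDDR6 bits carry data (the 256/288 factor quoted later in this thread). `peak_bandwidth_gbs` is a hypothetical helper name, not part of any library.

```python
# Assumption: LPDDR6 carries 256 data bits per 288 transferred bits.
LPDDR6_PAYLOAD = 256 / 288


def peak_bandwidth_gbs(bus_width_bits, data_rate_mts, payload_fraction=1.0):
    """Theoretical peak bandwidth in decimal GB/s."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * data_rate_mts * payload_fraction / 1000


# Assumed widths: 128-bit LPDDR5X vs 192-bit LPDDR6, both at 10667 MT/s.
lp5x = peak_bandwidth_gbs(128, 10667)
lp6 = peak_bandwidth_gbs(192, 10667, LPDDR6_PAYLOAD)

print(f"LPDDR5X 128-bit: {lp5x:.1f} GB/s")
print(f"LPDDR6  192-bit: {lp6:.1f} GB/s")
print(f"ratio: {lp6 / lp5x:.3f}")
```

Under those assumptions the ratio works out to 4/3, which is where the "a third more" comes from: 1.5x the width times the 8/9 payload factor.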

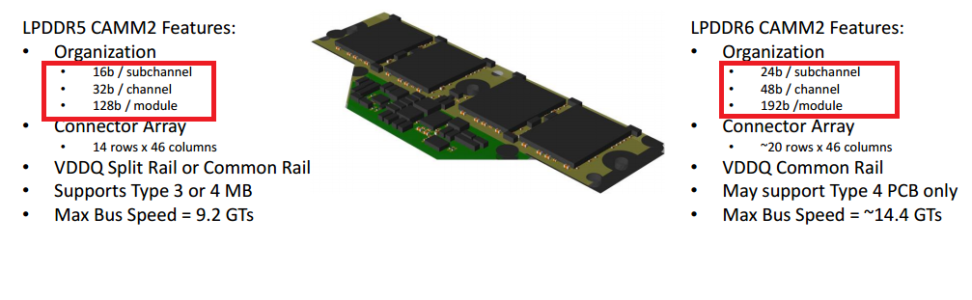

That's because an LPDDR6 controller is 24 bits wide instead of LPDDR5X's 16, meaning an LPDDR6 controller will be physically larger and require more shoreline and pins. If you devote more area/shoreline/pins to LPDDR5X you can get more bandwidth too. LPDDR5X-10666 actually offers slightly more bandwidth than LPDDR6-10666 (assuming the same total width of memory bus) due to LPDDR6's extra bits for ECC/tagging.

johnsonwax

Senior member

> Zen7 on AM5?

You should open a Zen7 Speculation Thread.

marees

Platinum Member

Here is the Zen 7 SPECULATION thread:

1.4nm

33 cores ccd

264 core processor

AMD's next-gen Zen 7 chips with X3D teased, new 3D cores, and more in fresh rumors

AMD's future-gen Zen 7 CPU architecture rumored with '3D cores' that would usher in 'absurdly high' performance increases for gaming.

Read more: https://www.tweaktown.com/news/1052...-3d-cores-and-more-in-fresh-rumors/index.html

AMD Zen 7 will pull further ahead of Intel with dedicated 3D core, says leak

Though its release is still far in the future, veteran hardware leaker MLID says Zen 7 CPUs could vastly improve performance with 3D cores.

www.pcguide.com

Yeah, that part is known, but does it matter much that frequency alone doesn't move much initially, when we can get a B/W gain from the increased width and then further gains from the frequency improvements that follow?

For the last few years we have mostly gotten improvements of ~1066 MT/s per generation in LPDDR5X (the improvement cadence).

Let's take a mainstream platform. For example:

MTL-H (7467 MT/s) --> Lunar Lake/ARL-H (8533 MT/s) --> PTL-H (9600 MT/s)

In a dual-channel config we have been getting ~17 GB/s more per gen (cadence).

Let's say hypothetically that with NVL-H we get LPDDR5X at 10667 MT/s (another bump of the same size), but in 2028 we get even just base-spec LPDDR6 (10667 MT/s). We are still getting a lot: ~57 GB/s more in dual channel, or a one-third B/W gain over the previous gen. That's still a fairly decent gain while moving to a new standard, and subsequent gains then follow from increased frequencies.
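The cadence numbers above can be reproduced with a short sketch. It assumes a 128-bit dual-channel LPDDR5X bus for every platform listed, a 192-bit bus for base-spec LPDDR6, and the 256/288 LPDDR6 payload factor that comes up later in the thread. The function and variable names are made up for illustration.

```python
def dual_channel_lpddr5x_gbs(rate_mts, width_bits=128):
    """Peak GB/s for an LPDDR5X bus (assumed 128-bit dual channel)."""
    return width_bits / 8 * rate_mts / 1000


# Data rates as listed in the post; NVL-H is the post's hypothetical.
platform_rates = [
    ("MTL-H", 7467),
    ("Lunar Lake/ARL-H", 8533),
    ("PTL-H", 9600),
    ("NVL-H (hypothetical)", 10667),
]

bandwidths = [dual_channel_lpddr5x_gbs(rate) for _, rate in platform_rates]
steps = [b - a for a, b in zip(bandwidths, bandwidths[1:])]

# Assumption: base-spec LPDDR6 on a 192-bit bus with a 256/288 payload factor.
lpddr6_base = 192 / 8 * 10667 * (256 / 288) / 1000
gain = lpddr6_base - bandwidths[-1]

for (name, _), bw in zip(platform_rates, bandwidths):
    print(f"{name}: {bw:.1f} GB/s")
print("per-gen steps:", [round(s, 1) for s in steps])
print(f"LPDDR6 gain over LPDDR5X-10667: {gain:.1f} GB/s")
```

With these assumptions each LPDDR5X step is about 17 GB/s, while the move to 192-bit LPDDR6 at the same data rate is worth roughly three such steps at once.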

johnsonwax

Senior member

Oh, you should start a Zen 8 Speculation Thread then. 7GHz?

NTMBK

Lifer

sigh I'm ready to say the line

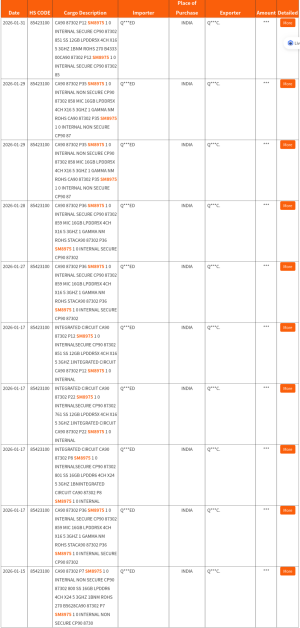

You seem to be relying on the information found in this graphic from the first post of this thread:

Do you not notice that jump from "x64" to "x96"? That is not free, and it is something any OEM could choose to do TODAY with LPDDR5X for roughly the same cost, and get MORE bandwidth than LPDDR6.

The ONLY advantages to LPDDR6-10666 are Samsung's reported 20% power savings, and the ability to use those extra bits for ECC or tagging. The disadvantage is that you get 114.1 GB/s, whereas LPDDR5X-10666 at the same x96 width would provide 128.4 GB/s. There's also the downside that LPDDR6 is going to cost a LOT more initially, and it will take probably two years before it is at price parity with LPDDR5X.

Smartphones today have 64 bit wide memory busses, with four LPDDR5X controllers. Do you really believe they are going to go to a 96 bit wide bus when they transition to LPDDR6, because "4" memory controllers is some kinda magic number? Most likely they'll go to 72, which would give them EXACTLY the same 85.6 GB/s that a 64 bit wide LPDDR5X bus provides.

Ditto with PCs, they aren't going to go from 128 bit to 192 bit simply because "8" memory controllers is a magic number.
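The width argument can be checked with a few lines. This is only a sketch: it assumes the 256/288 LPDDR6 payload factor mentioned later in the thread, and a data rate rounded to 10.7 GT/s, which is what the 85.6 / 114.1 / 128.4 GB/s figures above appear to be based on. The helper names are hypothetical.

```python
from fractions import Fraction

# Assumption: 256 of every 288 LPDDR6 bits are data (the rest is ECC/tagging).
LPDDR6_PAYLOAD = Fraction(256, 288)
# Assumption: "-10666" rounded to 10.7 GT/s, inferred from the quoted figures.
RATE_GTS = 10.7


def matching_lpddr6_width(lpddr5x_width_bits):
    """LPDDR6 bus width that delivers the same data bits per transfer."""
    return Fraction(lpddr5x_width_bits) / LPDDR6_PAYLOAD


def peak_gbs(width_bits, payload=Fraction(1)):
    """Theoretical peak bandwidth in GB/s at RATE_GTS."""
    return float(width_bits) / 8 * RATE_GTS * float(payload)


for width in (64, 128):
    print(f"{width}-bit LPDDR5X ~ {matching_lpddr6_width(width)}-bit LPDDR6")

print(f"64-bit LPDDR5X: {peak_gbs(64):.1f} GB/s")
print(f"72-bit LPDDR6:  {peak_gbs(72, LPDDR6_PAYLOAD):.1f} GB/s")
print(f"96-bit LPDDR6:  {peak_gbs(96, LPDDR6_PAYLOAD):.1f} GB/s")
print(f"96-bit LPDDR5X: {peak_gbs(96):.1f} GB/s")
```

The break-even widths come out as 72 and 144 bits, both multiples of the 24-bit LPDDR6 channel, so they are at least buildable from whole channels.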

SteinFG

Senior member

Yeah, phone makers will go for more expensive memory, in order to *checks notes* get no benefit whatsoever. Brilliant!

johnsonwax

Senior member

Will note that the iPhone has routinely gotten benefits from features like this ahead of the Mac. If Apple wanted to take 120fps video off of all of their pro phone cameras simultaneously to do some computational video, that might not fit in 85 GB/s. I don't think Apple is planning to do that, but smartphone cameras generally consume resources faster than anything done on a desktop PC. I've noted the rumor of putting AI compute in RAM which would be precisely the application for that kind of thing.

As far as standard modules and channel width are concerned, we can take a hint from LPDDR6 CAMM2. This (192-bit LP6 LPCAMM2) is supposed to be a delivery vehicle for LPDDR6 other than soldered packages, and is supposed to be more widely used than the previous CAMM2 for DDR5 and LPDDR5X has been so far.

You seem to overlook the mainstream notebook platform examples, and even in the hypothetical scenario we were talking about 2028+ for LPDDR6, when it starts to become more common on mainstream notebook platforms (it's kinda known that NVL-H doesn't support LPDDR6, and Medusa Point SKUs also don't, at least in the initial versions). EVEN IF Razor Lake notebook SKUs support LPDDR6, their broad availability would be in 2028, although for smartphones it would be earlier.

Are you implying that by the timelines of 2028(+), LPDDR6 won't likely have significant B/W gains from either channel width or frequency, i.e. that neither x96/net 192-bit will be standard for mainstream notebooks nor will there be any significant frequency uplift beyond 10667 MT/s by that time?

Are you saying that LP6 in LPCAMM2 will use x96/192-bit but other soldered LP6 packages won't be using x96/192-bit for mainstream notebook platforms?

What do you think would be the standard bus width for future successors of mainstream platforms like Intel's -H series or AMD's "Point" series that have LPDDR6 support? 144-bit?

Choosing x72/144-bit vs x96/192-bit means memory config capacity goes down as well.

Yeah, this is kinda expected though, similar to new standard adoption in the past. Also, for some of the same reasons, flagship smartphone SoCs are likely to adopt LPDDR6 before mainstream notebooks.

All I referred to was the mainstream notebook platform; I didn't refer to smartphones at all. As far as LPDDR6 in notebooks is concerned, there is no need for any "magic number" here: they are going to benefit from LP6 LPCAMM2 (192-bit) or any better standard down the line, other categories will likely get soldered LPDDR6 packages, and some others will use older standards (LPDDR5X or DDR5) for some time.

DDR6 (far from finalization) is likely going to use 16-bit sub-channels (not the 24-bit of LPDDR6); we will get to know its final specs with time.

The Synopsys LPDDR6 PHY results validated on N2P were most likely for a 48-bit bus AFAIK, and using 2*48 (net 96-bit) will be a thing eventually in flagship smartphone SoCs. So it won't be a surprise if 96-bit becomes fairly common for flagship smartphone SoCs eventually.

Well considering that LPDDR5 CAMMs are nearly nonexistent, "more widely used" is a given. It is unclear how widely CAMMs will be adopted by PC OEMs, and if 192 bits will become the standard width as a result. The fact that LPDDR6 will remain the "high end" option for several years obviously helps there, and they can figure out if they want to split the low end / value segment off at a lower width (where LPCAMM2 may not be used anyway) and the CPUs that support that would have a narrower bus.

OK, so you're talking 2028. I wasn't talking 2-3 years in the future; all I said was that the first generation of LPDDR6, which Samsung announced will enter mass production by the end of THIS year, is pointless because it isn't any faster than LPDDR5X. If you stick it in a form factor that requires 192 bits like LPCAMM then yeah, it is "more bandwidth", but that's accompanied by more cost. A LOT more cost, as an early adopter not only for LPDDR6 but also for LPCAMM2!

By 2028 the cost penalty will be fairly moderate, but LPDDR6 will have moved on to higher bins so it'll be faster than LPDDR5X on its merits not because OEMs are being forced (if they want to support LPCAMM2) to go wider.

I've speculated here previously that I think DDR6 will use the same 24 bit channel width as LPDDR6, because supporting ECC on 16 bit subchannels is extremely wasteful (50% bit overhead) but the only "confirmation" of that I'd seen is a wccftech article, where they stated the 24 bit subchannels as an objective fact leading me to believe they got that from me (whether directly from one of my posts, or via an AI that scraped it and regurgitated it back to a wccftech "journalist" as fact, who knows)

But recently I've seen two other articles implying 24 bit channels / 96 bit width as a likelihood for DDR6 so I wouldn't count that out.

Anyway, I just think it is disingenuous to compare 128-bit LPDDR5X with 192-bit LPDDR6, as anything that might drive platforms to widen with LPDDR6 would be an external factor like the defined width of LPCAMM2 modules, or Synopsys PHYs (and if those are implemented as 48-bit-wide "pairs" of controllers, that could be driven by them wanting to design combo LPDDR5X/LPDDR6 controllers without wasting area when used with LPDDR5X). LPDDR6 will eventually be faster than LPDDR5X, which isn't likely to get any faster than -12800. It just won't happen soon.

RnR_au

Diamond Member

Just be aware that JEDEC is planning/working on another version of LPCAMM2 that will make it tool-less. At the moment there are screws involved and they will go away. Until this happens I can't see it becoming mainstream. I think there is only one laptop manufacturer atm that is using it, in one SKU.

I kinda like the screw in m.2 since it seems more secure. It isn't as if you swap out your SSD or DRAM all that often, so the only reason I can see why they'd do this is to save a few seconds and a few cents for OEM assembly. 🙄

NTMBK

Lifer

I imagine that the screw might also affect form factor? Needing space for the screw might potentially add a fraction of a mm onto Z height.

RnR_au

Diamond Member

Less screws are better. A mate of mine recently received a Strix Halo boxen from Hong Kong. He heard something rattling. Yup, a tiny little screw was loose.

Without that screw it could be the LPCAMM3 module that's been rattling around all the way from Hong Kong. Which is a bigger problem? 😂

With LPCAMM2 they want to replace SO-DIMM. There has been a concerted effort for CAMM to be made universal starting with LPDDR6 (and even for client DDR6 with CAMM2?). Maybe it (or some other form factor in future) can achieve that. Getting the tool-less implementation could be the big step. But we will have to see how well adoption progresses in the coming generations (LPDDR6 and DDR6?). SOCAMM 2 (Nvidia) looks OK apart from its length (might not be suitable for many laptop designs).

Soldered LPDDR6 packages would likely be the main thing, esp. for most thin-and-lights.

Samsung has a record of announcing things way earlier than shipping(?) wrt their memory products. But still, a direct comparison of LP6 with LP5X on B/W that only takes the clock into account while ignoring bus width doesn't look OK. But I do get your point on early availability.

For things like what becomes standard or which channel width becomes common, it will get confirmed with time.

Flush screws exist and aren't all that uncommon. I'll be interested to see what they end up with for a "compression attached" memory module that doesn't need a screw. Probably some sort of retaining clip(s) like DIMMs, which will be a bigger issue for Z height than even a non flush screw.

I doubt the Z height matters though, since the CAMM module is going to be on the same board as the CPU. Other than perhaps a passively cooled laptop like Macbook Air, the CPU will always be your limiter as far as Z height.

SK hynix Develops 1c LPDDR6, 6th-Generation 10nm-Class DRAM

SK hynix announced Mar. 10 that it has successfully developed a 16Gb LPDDR6 DRAM based on the sixth-generation 10nm-class (1c) process technology.

news.skhynix.com

SK hynix plans to complete preparations for mass production within the first half of the year and begin supplying the product in the second half

Those are effectively the same speed as LPDDR5X-9600. 20% better power is nice but you'll be paying a lot more per GB to get it (and in a time when memory is already super expensive)

I imagine a few Android OEMs will adopt it for their low volume flagships, since they are forced to compete with one another mainly on specs and "LPDDR6" will sound impressive to some who don't realize they aren't actually getting much from it.

Until there are faster grades than -10666 there will be very little momentum behind its adoption. Even when those higher speeds arrive the price premium may keep a lot of OEMs away until supply and demand in the memory market reaches some semblance of balance. You can eat a 50% price premium a lot easier when that premium comes out to $20 rather than $80.

Hmm, practically LPDDR5X-9600 MT/s and LPDDR6-10667 MT/s are quite different.

Next-Gen flagship smartphone SoCs from Qualcomm would have options of using 96-bit LPDDR6-10667MT/s or 64-bit LPDDR5X-10667MT/s, there's about one-third more bandwidth with the former which is significant.

No, there is the 256/288 factor in the LPDDR6 bandwidth calculation, i.e. you have to multiply bus width * memory speed * 256/288 (= 8/9). So a 96-bit LPDDR6 bus is roughly (96/8)*(8/9)*10667; cancel the 8s and you get 10667*96/9 ≈ 113.8 GB/s. A regular 96-bit LPDDR5X bus (96*10667/8) would be roughly 128 GB/s.
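For anyone who wants to plug in other widths, here is that arithmetic as a small sketch. It takes the 256/288 payload factor from the post at face value; the two function names are made up for illustration. It also prints the 96-bit LPDDR6 vs 64-bit LPDDR5X case, since that is the comparison the earlier post was making.

```python
def lpddr5x_gbs(width_bits, rate_mts):
    """Peak GB/s: every transferred bit is data."""
    return width_bits / 8 * rate_mts / 1000


def lpddr6_gbs(width_bits, rate_mts):
    """Peak GB/s with the 256/288 (= 8/9) payload factor from the post."""
    return width_bits / 8 * rate_mts * (256 / 288) / 1000


lp6_96 = lpddr6_gbs(96, 10667)
lp5x_96 = lpddr5x_gbs(96, 10667)
lp5x_64 = lpddr5x_gbs(64, 10667)

print(f"96-bit LPDDR6:  {lp6_96:.1f} GB/s")
print(f"96-bit LPDDR5X: {lp5x_96:.1f} GB/s")
print(f"64-bit LPDDR5X: {lp5x_64:.1f} GB/s")
print(f"same width, LP6 / LP5X:      {lp6_96 / lp5x_96:.3f}")
print(f"96-bit LP6 / 64-bit LP5X:    {lp6_96 / lp5x_64:.3f}")
```

At equal width LPDDR6 lands at 8/9 of LPDDR5X, while 96-bit LPDDR6 against 64-bit LPDDR5X lands at 4/3, so which claim holds depends entirely on which widths are being compared.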

Read again please. My calculation of one-third more bandwidth is for 96-bit LP6-10667 compared to 64-bit LP5X-10667, and it is correct.

Present flagship SoCs(from Qualcomm and MediaTek) use/support up to 64-bit LP5X-10667MT/s. Upcoming Snapdragon flagship SoC(s) is/are being tested with 96-bit LP6-10667MT/s and 64-bit LP5X-10667MT/s.

^^ SM8975 supposedly is Qualcomm's next flagship. "MIC" and "SS" are probably Micron and Samsung Semiconductor ? Can infer specs from "LPDDR5X 4CH X16 5.3GHz" and "LPDDR6 4CH X24 5.3GHz".

Practically, 96-bit LP5X doesn't matter much here because it's not being used, and 96-bit LP5X (that is, 6CH X16 LP5X vs 4CH X16 LP5X and 4CH X24 LP6) could mean more memory capacity (and maybe one more memory PHY), making cost go up.

Another thing to consider is SoCs/platforms using combo PHYs to maintain compatibility with both LPDDR6 and LPDDR5X. The two combo PHYs revealed so far work by keeping the channel count constant, not the bit width. Paraphrased from the datasheet of a combo PHY, it's either "Two channel LPDDR5X/5 only support with 32 DQ bits" or "Two channel LPDDR6 support with 48 DQ bits", so the max bit width supported on platforms with those combo PHYs will be a multiple of those numbers for LPDDR5X and LPDDR6 configs respectively.
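To make the combo-PHY point concrete, here is a rough sketch of how bus width scales with PHY count. The 32 and 48 DQ-bit figures come from the datasheet paraphrase above; the PHY counts, the 10667 MT/s data rate, the 256/288 LPDDR6 payload factor, and all names in the code are assumptions for illustration.

```python
# DQ bits per two-channel combo PHY, per the datasheet paraphrase above.
DQ_BITS_PER_COMBO_PHY = {"LPDDR5X": 32, "LPDDR6": 48}
# Assumption: only LPDDR6 spends bits on ECC/tagging (256 of 288 are data).
PAYLOAD = {"LPDDR5X": 1.0, "LPDDR6": 256 / 288}


def bus_width_bits(standard, phy_count):
    """Total DQ width when every combo PHY runs the same standard."""
    return DQ_BITS_PER_COMBO_PHY[standard] * phy_count


def peak_gbs(standard, phy_count, rate_mts=10667):
    """Theoretical peak GB/s for a given standard and PHY count."""
    width = bus_width_bits(standard, phy_count)
    return width / 8 * rate_mts * PAYLOAD[standard] / 1000


# Hypothetical PHY counts: 2 (phone-class) and 4 (notebook-class).
for phys in (2, 4):
    for standard in ("LPDDR5X", "LPDDR6"):
        print(
            f"{phys} PHYs, {standard}: "
            f"{bus_width_bits(standard, phys)}-bit, "
            f"{peak_gbs(standard, phys):.1f} GB/s"
        )
```

With two PHYs this reproduces the 64-bit LP5X / 96-bit LP6 pairing from the test listing, and four PHYs would give 128-bit / 192-bit, so a design built around such PHYs gets the wider LPDDR6 bus without choosing a width separately.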
