Charles Kozierok

Elite Member

Where did you get the SOI idea?

Just from people discussing it here (not from the Otellini quote; sorry if I caused confusion).

If smaller processes keep bringing greater heat problems, as 22nm did, I am in no rush for more shrinks.

What are the heat problems you're referring to? You mean in terms of overclocking?

For everyone else, I thought IB (Ivy Bridge) was a step forward in terms of power consumption.

Actually, I retract my last post: the temp issues all seem to stem from the heat dissipation design, as we've shown when we give it a little help.

Regardless of what part of the CPU design caused it, the bottom line is that moving to 22nm caused higher CPU temps than on 32nm. If it is as simple as fixing the heat dissipation design, then fantastic. My only question is: if it was so simple, why didn't Intel do it for IB?

Because it is also more expensive, and of no benefit to 99.9% (number from my arse!) of their customers. The weakness of the current design is not apparent until you run it out of spec. I don't really expect Joe Q Dellbuyer to have to pay a bit more for his PC to subsidize my hobby.

Agreed, but my original statement about 14nm and beyond still stands. This problem was not a big deal with IB; however, if the issue gets worse going forward, it will affect more people. That was my only point. I am confident that Intel will work to make sure that does not happen.

It's a step forward for everyone with regard to power consumption.

Right, I saw those, so as of May 12th, 2012, they've begun work on it. That's a far cry from people at XS testing a few samples...

... Essentially, it's only a 4.5 GHz+ issue, and a limited one at that.

Tjmax was also increased by 5°C.

What exactly do die shrinks do for CPU performance? Lower voltage and heat?

A smaller process lets you cram more transistors into a square millimeter, so the same number of transistors takes up less die space. Several good things GENERALLY happen during a process shrink:

Physical size gets smaller (save some $$$ there, or put more complexity/performance on the chip)

Devices get faster (higher clock speeds, or you can save more power)

Voltages get lower (save power)
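A rough way to see why those three points go together is the textbook dynamic-power relation P ≈ α·C·V²·f combined with idealized (Dennard) scaling, where one shrink multiplies feature size, capacitance and voltage by a factor k (about 0.7 per full node) and raises frequency by 1/k. This is a sketch under that idealized model only: the normalized values are hypothetical, leakage is ignored, and real processes (22nm included) do not scale voltage this cleanly.

```python
def dynamic_power(alpha: float, cap: float, volts: float, freq: float) -> float:
    """Switching (dynamic) power: P = alpha * C * V^2 * f."""
    return alpha * cap * volts ** 2 * freq


def ideal_shrink(cap: float, volts: float, freq: float, k: float = 0.7):
    """Idealized Dennard scaling by factor k (an assumption, not real 22nm data):
    capacitance and voltage shrink by k, frequency rises by 1/k."""
    return cap * k, volts * k, freq / k


# Hypothetical per-transistor values in normalized units, not measurements.
alpha, cap, volts, freq = 0.1, 1.0, 1.0, 1.0
k = 0.7

p_old = dynamic_power(alpha, cap, volts, freq)
new_cap, new_volts, new_freq = ideal_shrink(cap, volts, freq, k)
p_new = dynamic_power(alpha, new_cap, new_volts, new_freq)

power_ratio = p_new / p_old               # ~k^2: each transistor uses less power
area_ratio = k ** 2                       # each transistor also takes less area
density_ratio = power_ratio / area_ratio  # power per mm^2 stays (ideally) flat

print(f"power per transistor: x{power_ratio:.2f}")
print(f"area per transistor:  x{area_ratio:.2f}")
print(f"power density:        x{density_ratio:.2f}")
```

In practice supply voltage has not kept shrinking at that rate, so power density tends to creep up with each shrink; that is the "bad news" half of the Intel brochure quoted later in the thread.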

On the subject of heat, do we know if IB's heat comes mainly from the CPU part of the die (as opposed to the GPU), or is it spread evenly across the die?

Would it have been better if Intel had put in an iGPU several times bigger than the CPU (in terms of mm²), to get a beefy iGPU while also increasing surface area and thus reducing heat density?

The heat comes almost entirely from the CPU-side logic. The GPU can use a decent amount of power per mm², but I'd imagine most people who care have the IGP off or idling anyway.
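Heat density here is just power divided by area, so the bigger-iGPU idea can be checked with simple arithmetic. This is a minimal sketch with made-up round numbers (not Ivy Bridge measurements), assuming the larger iGPU sits idle and draws the same 10 W as the small one; the `Block` type and its values are hypothetical.

```python
from dataclasses import dataclass


@dataclass
class Block:
    """One region of the die (hypothetical numbers, not Ivy Bridge measurements)."""
    name: str
    watts: float
    area_mm2: float

    @property
    def density(self) -> float:
        return self.watts / self.area_mm2  # W/mm^2


def average_density(blocks: list[Block]) -> float:
    """Whole-die average: total power over total area."""
    total_w = sum(b.watts for b in blocks)
    total_a = sum(b.area_mm2 for b in blocks)
    return total_w / total_a


cpu = Block("cpu cores", watts=60.0, area_mm2=60.0)
small_gpu = Block("igpu", watts=10.0, area_mm2=40.0)
big_gpu = Block("igpu x4", watts=10.0, area_mm2=160.0)  # assumed idle, same 10 W

for gpu in (small_gpu, big_gpu):
    die = [cpu, gpu]
    print(f"{gpu.name}: die avg {average_density(die):.2f} W/mm^2, "
          f"cpu hotspot {cpu.density:.2f} W/mm^2")
```

The whole-die average drops, but the CPU cores, where the heat is concentrated, run at the same W/mm² either way. Whether the extra silicon actually helps depends on lateral heat flow through the die and heatspreader, which this sketch ignores.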

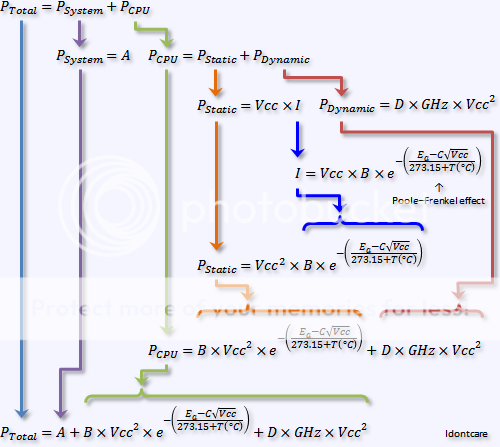

Mostly, it's the decrease in leakage brought by a shrink that gives you lower-power chips (electrical power, not computational), and the faster switching speed of the transistors themselves that gives you higher frequencies.

Is this really the case? My understanding was that switching power goes down with a die shrink, but that leakage actually becomes more of a problem as the feature size goes down, because of thinner insulators and other related effects.

It could be that leakage increases as a percentage of total power loss as you shrink the node.
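It is easy to blur absolute leakage with leakage as a share of total power. The sketch below uses made-up before/after numbers (not data for any real node) in which leakage falls in absolute terms after a shrink, yet its percentage still rises because dynamic power falls faster. Other numbers would give the opposite result; nothing forces either trend.

```python
def leakage_share(dynamic_w: float, leakage_w: float) -> float:
    """Fraction of total chip power lost to leakage."""
    return leakage_w / (dynamic_w + leakage_w)


# Hypothetical before/after numbers for one shrink, purely illustrative.
old = {"dynamic": 80.0, "leakage": 20.0}
new = {"dynamic": 50.0, "leakage": 18.0}  # both fall, dynamic falls faster

for label, p in (("old node", old), ("new node", new)):
    share = leakage_share(p["dynamic"], p["leakage"])
    total = p["dynamic"] + p["leakage"]
    print(f"{label}: total {total:.0f} W, leakage {p['leakage']:.0f} W "
          f"({share:.0%} of total)")
```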

There is no requirement that leakage increases with node shrinks, nor is there a requirement that the percentage of power lost to leakage increase as nodes shrink.

http://download.intel.com/museum/Moores_Law/Printed_Materials/Intel_Silicon_Brochure.pdf

"When it comes to power, making transistors smaller and putting more of them into a small space is a good news, bad news story. The good news: Smaller transistors consume less power, and it takes less voltage to drive them. The bad news: Increasing density of ever faster transistors means the overall chip consumes more power and generates more heat. In addition, power leakage becomes more problematic with shrinking feature sizes, wasting a higher portion of total microprocessor power."