

Discussion Intel Binary Optimization Tool thread

Page 3

gdansk

Diamond Member

> I doubt they are doing it by hand for all the code

Who knows.

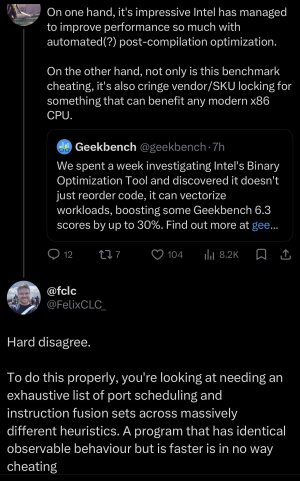

All I can say is that it's proven we cannot trust what Intel marketing says about this. They're being very selective.

igor_kavinski

Lifer

> Who knows.
>
> All I can say is that it's proven we cannot trust what Intel marketing says about this. They're being very selective.

But they got busted really bad. Really should've tried to buy John Poole off 😀

coercitiv

Diamond Member

igor_kavinski

Lifer

Wow. Someone's going to get in trouble at Intel...

coercitiv

Diamond Member

> Wow. Someone's going to get in trouble at Intel...

Yup, I smell a promotion.

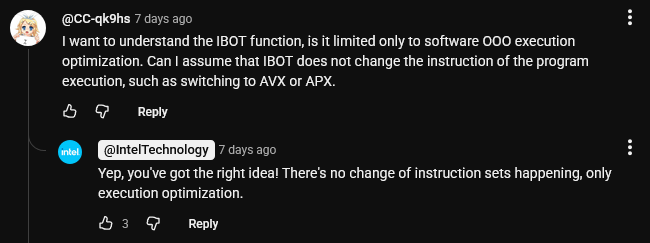

> Yup, I smell a promotion.

Technically they are safe, because there is no change in instruction set. It's not like an AVX2-only processor becomes an AVX-512 one. They avoided it cleverly.

igor_kavinski

Lifer

> they avoided it cleverly.

They are not going to feel very clever if, after seeing how much vectorization is possible, John gets to work and releases GB 6.8 that is massively vectorized and helps AMD even more. He will want to do that to discourage Intel, because he does not want them optimizing every version and him having extra work to do before every GB release. He does not want AMD or websites to stop using his benchmark in reviews because it can be cheated at.

> They are not going to feel very clever if, after seeing how much vectorization is possible, John gets to work and releases GB 6.8 that is massively vectorized and helps AMD even more. He will want to do that to discourage Intel, because he does not want them optimizing every version and him having extra work to do before every GB release.

Well yeah, but I doubt anyone can outdo Intel in x86-64 software optimization. They increased the score this much just with iBOT; imagine how much they could do if they wrote stuff correctly.

gdansk

Diamond Member

> They are not going to feel very clever if, after seeing how much vectorization is possible, John gets to work and releases GB 6.8 that is massively vectorized and helps AMD even more.

He won't do that, or shouldn't. It defeats the point of a cross-platform benchmark. We know that hand-tuned code can get very nice results on wide vector engines. We've known this since Cray. But GB, to be a useful consumer benchmark, should try to reflect real world applications and the optimizations they use. Autovec is reasonable, maybe, but real world applications seldom use it because it often provides small increases while limiting who can run your binary.

But if Intel is distributing a program to replace binaries in place, why won't AMD follow the next time they're behind? And since AMD has a very nice (possibly the best?) FPU in consumer parts they actually stand to gain more doing this.

igor_kavinski

Lifer

> He won't do that, or shouldn't. It defeats the point of a cross-platform benchmark.

He could change the scoring method and split the scores into INT and FP ones, while noting that the FP ones could be massively optimized by a vendor and may not reflect real world optimizations in applications. But seriously, Intel has opened a whole can of worms. They need to make iBOT vendor agnostic and apply the optimizations indiscriminately to whatever CPU if they want the worms to disappear.

Nothingness

Diamond Member

> Well yeah, but I doubt anyone can outdo Intel in x86-64 software optimization. They increased the score this much just with iBOT; imagine how much they could do if they wrote stuff correctly.

At this point, we can assume Intel is cheating until proven otherwise.

Nothingness

Diamond Member

> He already invalidates iBOT runs, which is fine and the way it should be.

I think the upcoming 6.7 release is only there to get around iBOT.

> I think the upcoming 6.7 release is only there to get around iBOT.

Yeah

> They suggest it's converting scalar code into vector code. I assume Intel didn't have someone do that by hand (what a waste that would be).

I'm willing to bet there is some hand tuning involved in some cases, depending on your definition of "tuning". Because if I had to guess it is probably AI doing it, but there's no way they'd let that run wild without some human oversight - and occasional human "correction", so there 100% are humans in the loop.

That's the reason it has to hit the cloud. The AI model they're using can't be run on a typical PC, and even if it could they wouldn't trust it unattended like that.

What I don't understand are the two second lags. You need some sort of lag the first time you run, so it can compute the checksum, check whether Intel has an optimized binary, and download it if it's there. But once you've done the checksum, you can check that your stored checksum has a more recent modification time than the executable and know the executable hasn't changed. Having the service enabled could handle "push" type situations where they didn't have an optimized version the first time you ran it but later develop one. It gets "pushed" to your PC and it'll use that the next time. There's no reason for a two second or even two millisecond delay; that's just stupidity on their part.
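A minimal sketch of that caching idea, in Python. This is purely illustrative and says nothing about how iBOT actually behaves; `ChecksumCache` and `checksum_for` are made-up names. It is also a slight variation on the description above: instead of comparing the timestamp of a stored checksum file against the executable, it remembers the executable's modification time and size alongside the checksum and only rehashes when either changes.

```python
import hashlib
import os


def sha256_of(path):
    """Hash the file contents in chunks so large executables are not read at once."""
    digest = hashlib.sha256()
    with open(path, "rb") as f:
        for chunk in iter(lambda: f.read(1 << 20), b""):
            digest.update(chunk)
    return digest.hexdigest()


class ChecksumCache:
    """Hypothetical cache: one checksum per path, valid while mtime and size match."""

    def __init__(self):
        self._entries = {}   # path -> (mtime_ns, size, checksum)
        self.hash_count = 0  # how many times a file was actually hashed

    def checksum_for(self, path):
        st = os.stat(path)
        key = (st.st_mtime_ns, st.st_size)
        cached = self._entries.get(path)
        if cached is not None and cached[:2] == key:
            # File looks unchanged since the last hash: skip the expensive read.
            return cached[2]
        checksum = sha256_of(path)
        self.hash_count += 1
        self._entries[path] = (key[0], key[1], checksum)
        return checksum
```

With something like this, only the first launch (or a launch after the executable changes) pays for hashing; every other launch is a single `os.stat` call.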

gdansk

Diamond Member

I think the steps are actually something like this:

1. Lift x64 binaries to LLVM IR with something like remill.

2. Use llc to recompile this using PGO, autovec, -march for the target architecture. Store the checksum and corresponding new binary on Intel's benchmark busting servers for that chip family

3. iBOT client has a white list of known binary checksums for which it should fetch replacement binaries on start on supported chip family

4. Replace the original binary. It's actually hard for me to understand the additional start up time even after the initial download of new binaries. It shouldn't take two seconds to load an alternate GB binary but maybe they're doing something clever - like applying this entire process only to certain functions.

But Intel doesn't want to say what they're actually doing. I wonder why. If I'm right, all the tools to do this are open source and very general, but combined in a new way here. This would work on any chip not running natively tuned builds for which LLVM has reasonable architectural optimizations. But you would probably need to review the results of step 1, and step 2 takes a long time, which explains the whitelist and server concept.
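To make the guessed step 3 concrete, here is a rough Python sketch of a whitelist lookup keyed on chip family and checksum. Everything in it is an assumption for illustration: the function names, the whitelist layout, and the family labels are invented, and none of it is known to match what iBOT does.

```python
import hashlib
from typing import Dict, Optional, Tuple

# Hypothetical layout: (chip family, SHA-256 of the original binary) -> replacement name.
Whitelist = Dict[Tuple[str, str], str]


def checksum(binary: bytes) -> str:
    """SHA-256 of the binary contents, as a hex string."""
    return hashlib.sha256(binary).hexdigest()


def find_replacement(binary: bytes, chip_family: str, whitelist: Whitelist) -> Optional[str]:
    """Return the replacement name for a known binary, or None to run the original."""
    return whitelist.get((chip_family, checksum(binary)))


# Usage with invented data.
original = b"pretend this is a benchmark executable"
whitelist = {("family-a", checksum(original)): "benchmark-retuned-for-family-a"}

print(find_replacement(original, "family-a", whitelist))         # known binary, supported family
print(find_replacement(original, "family-b", whitelist))         # unsupported family: None
print(find_replacement(original + b"!", "family-a", whitelist))  # any byte changed: None
```

The last call shows why a new benchmark release would sidestep a scheme like this: any change to the binary changes the checksum, so the lookup misses until a new entry is published.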


> thoughts?

Currently the lack of transparency makes it hard to say how clever this is. Is this a breakthrough that will allow us to speed up legacy software en masse, or a cheap trick that will fade away once they have a CPU that can compete without this hassle?

From a pragmatic point of view, I will take whatever makes my production binaries faster without breaking them (faster compile times, more fps in games, whatever).

But for benchmarks it's basically cheating at this stage. It makes comparing CPUs harder. We also don't know how fast they will add support beyond these initial apps. And if the support is not widespread then it is only skewing perception that the CPU is better than it actually is.

Saylick

Diamond Member

This is outside of my wheelhouse, but would an analogy for IBOT be like GPU drivers, where a vendor releases a new set of drivers that are optimized for their latest generation of GPUs? If so, then I don't think there's an issue with it. All GPU vendors run DirectX, but each vendor is allowed to make tweaks and optimizations for their specific architectures rather than using a generic GPU driver. Does it now mean everyone needs to make custom drivers to make their product have the best competitive edge? Yes. But is it unfair? Likely not?

> I think the steps are actually something like this:
>
> 1. Lift x64 binaries to LLVM IR with something like remill.
>
> 2. Use llc to recompile this using PGO, autovec, -march for the target architecture. Store the checksum and corresponding new binary on Intel's benchmark busting servers for that chip family
>
> 3. iBOT client has a white list of known binary checksums for which it should fetch replacement binaries on start on supported chip family
>
> 4. Replace the original binary. It's actually hard for me to understand the additional start up time even after the initial download of new binaries. It shouldn't take two seconds to load an alternate GB binary but maybe they're doing something clever - like applying this entire process only to certain functions.
>
> But Intel doesn't want to say what they're actually doing. I wonder why. If I'm right all the tools to do this are open source and very general but combined in a new way here. This would work on any chip not running natively tuned builds for which llvm has reasonable architectural optimizations. But you would probably need to review the results of steps 1/2 which explains the whitelist.

If that's all they're doing there's no reason it couldn't be done locally since it only has to be done once. It has to be something more than that.

poke01

Diamond Member

> custom drivers to make their product have the best competitive edge? Yes. But is it unfair? Likely not?

In real world apps, not unfair. It’s good.

In benchmarks, bad

gdansk

Diamond Member

> If that's all they're doing there's no reason it couldn't be done locally since it only has to be done once. It has to be something more than that.

Step 1 can go wrong. They can fix it up. I think that's all it is.

adroc_thurston

Diamond Member

> thoughts?

Load of horse since they can always contribute optimizations to Geekbench directly.

Saylick

Diamond Member

> In real world apps, not unfair. It’s good.
>
> In benchmarks, bad

So using the GPU driver analogy, if a GPU driver makes a GPU look better in a gaming benchmark, is that bad?

Again, this is not my wheelhouse whatsoever, so if the analogy of a GPU driver is not anywhere near representative, then I stand corrected but would like to understand how IBOT is different.
