Discussion Intel Binary Optimization Tool thread

poke01

Diamond Member

> I'm pretty excited about Intel violating the trust of other prominent software developers.

As long as they don’t touch benchmarking software, I don’t care what Intel does to get themselves a win.

But it’s a messy way of gaining performance.

gdansk

Diamond Member

> RPL Clocks sky high and the uncore is really fast in Raptor Lake not to mention lower latency than any other modern processor

Sure and in that manner quite unlike Zen 2. Yet it chews through code that Intel marketing says is "console optimized". A curious line they spin.

> Sure and in that manner quite unlike Zen 2. Yet it chews through code that Intel marketing says is "console optimized". A curious line they spin.

That's only true for a handful of games 🤣 Better not to take marketing at face value. Also, from a uArch perspective Lion Cove is rather different from Skylake: the ports have changed in LNC (they are no longer unified), and the cache hierarchy is now L0/L1/L2/L3. Golden Cove, by contrast, is not much changed in terms of cache hierarchy and ports (I don't mean the capacity, obviously).

> But it’s a messy way of gaining performance.

What I don't like is that they have probably made LLVM's BOLT (OSS) work on Windows but do not want to share the results with the rest of the community. (I am guessing, since their marketing does not want to give us real answers, so take it with a grain of salt.) Their current compiler is downstream of LLVM, which just makes it even more likely.

While I understand some bits of HWPGO might not work for others (the profile gathering), the other part of the implementation should be universal. I mean, it would then be AMD's/Qualcomm's/nVidia's problem to gather profiles for their CPUs. But that would also empower normal people to run it on their own, for their own software. The gains could be weak in that case, but at least this pseudo-secrecy black magic vibe from marketing would be gone.

Once again, I am not saying this is what they are exactly doing, just guessing based on what I understand from marketing messages. I can be wrong.

igor_kavinski

Lifer

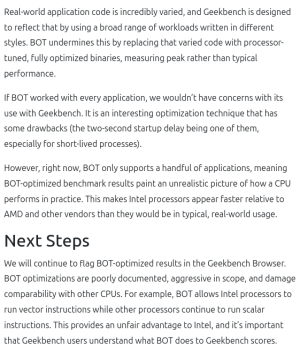

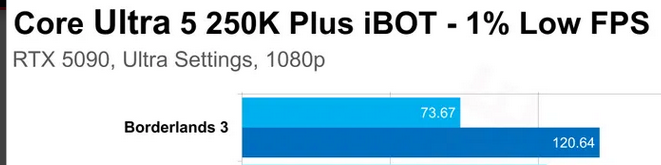

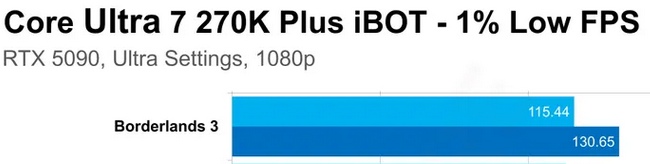

Decent investigation with lots of data.

My takeaways:

250K is generally seeing more improvement from iBOT supported games than 270K. I think the 250K suffers due to only 6 P-cores and iBOT either makes those P-cores more performant or it makes the Skymonts performant enough to make up for the absence of two additional P-cores vs. 270K. This could've been tested by disabling E-cores but unfortunately, it's Tom's Hardware. Anand Lal Shimpi would definitely have delved deeper.

Also,

See the massive improvement 250K is experiencing with iBOT? I think that's the Skymont IPC improvement working its magic. Again, this should've been investigated.

The temps and power use also increase significantly in some cases, meaning the cores are working harder and spending less time idling. That doesn't always translate to a proportional increase in performance, though. The clocks are also dropping a bit in a few cases. This could be due to rising temperatures and/or AVX2 codepaths causing localized hotspots which then need to be mitigated with downclocking. Just a guess.

Also, NOTICE the temps for 250K rising up to 5C in Far Cry and Cyberpunk. We know that the Skymonts can be harder to cool than the P-cores due to being denser silicon. So this lends credence to my theory that their IPC is getting boosted by iBOT trickery.

Nothingness

Diamond Member

Too bad it's missing results of subtests of Geekbench.

> Too bad it's missing results of subtests of Geekbench.

Here, have this.

Nothingness

Diamond Member

> here have this

Thanks a lot! So it's not only large code bases that benefit, interesting.

Hulk

Diamond Member

Let's look at one possible action IBOT may be performing and the ramifications.

Let's say IBOT determines that certain data is currently being ejected from cache and that it would be more beneficial for this data to remain in cache for a particular piece of software.

Now it would seem logical that this specific optimization would pay larger dividends in systems with smaller cache because that data would be less likely to be ejected from systems with larger cache.

Furthermore, this would imply that these optimizations would be more beneficial to Lion Cove rather than AMD's X3D parts with v-cache.

Is this basic logic reasonable?
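One way to sanity-check this reasoning is a toy simulation. The sketch below is only an illustration under loud assumptions: it models a fully associative LRU cache (real CPU caches are set-associative and not pure LRU), the access pattern is made up, and the "optimization" is a hypothetical stand-in in which one-shot streaming data simply bypasses the cache. Nothing here reflects what IBOT actually does. The names `lru_hit_rate`, `make_trace`, and `is_stream` are invented for the example.

```python
from collections import OrderedDict


def lru_hit_rate(accesses, capacity, bypass=lambda key: False):
    """Replay an access trace through an LRU cache and return the hit rate.

    Keys for which bypass(key) is true are never inserted on a miss, which
    models a hypothetical optimization that keeps them from evicting hot data.
    """
    cache = OrderedDict()
    hits = 0
    for key in accesses:
        if key in cache:
            hits += 1
            cache.move_to_end(key)          # mark as most recently used
        elif not bypass(key):
            cache[key] = True
            if len(cache) > capacity:
                cache.popitem(last=False)   # evict least recently used
    return hits / len(accesses)


def make_trace(hot_lines, stream_lines, rounds):
    """Each round reuses the same hot lines, then touches fresh streaming lines."""
    trace = []
    next_stream = 0
    for _ in range(rounds):
        trace.extend(("hot", i) for i in range(hot_lines))
        trace.extend(("stream", next_stream + i) for i in range(stream_lines))
        next_stream += stream_lines
    return trace


def is_stream(key):
    return key[0] == "stream"


# Made-up workload: 64 hot lines and 192 streaming lines per round.
trace = make_trace(hot_lines=64, stream_lines=192, rounds=50)

# Hit-rate gain from the hypothetical optimization at two cache sizes.
small_gain = lru_hit_rate(trace, 128, is_stream) - lru_hit_rate(trace, 128)
large_gain = lru_hit_rate(trace, 512, is_stream) - lru_hit_rate(trace, 512)

print(f"gain with 128-line cache: {small_gain:.3f}")
print(f"gain with 512-line cache: {large_gain:.3f}")
```

In this toy model the smaller cache gains more, because the larger cache was already retaining the hot lines without help. That is consistent with the logic above, but it says nothing about real workloads, where the outcome depends on the working-set size relative to each cache level.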

LightningZ71

Platinum Member

In general, in many games, there are things that the E-cores can be doing that don't require extremely low-latency response. Offloading to those cores can reduce the context switches needed on the P-cores, making them more efficient for throughput. The performance difference in the above charts is almost exactly in line with the P-core max boost frequency difference between the two SKUs, meaning that having only 6 P-cores isn't really a holdup if there are sufficient E-cores to carry the background load. Games typically only have 1-2 threads/processes that are latency critical with respect to performance. They are starting to get more secondary threads that have a lot of general work to do, so they need a good core to complete that in a timely manner so their output is available to the latency-critical threads, so there is a need for performant secondary cores as well. Then, there are the various housekeeping and pre-work threads that can all be run efficiently on the E-cores. It looks like the software is doing its job with respect to getting threads where they are supposed to be.

I have to wonder if the uncore fixes/improvements/improved timings are playing a crucial part in getting this software to work right? If the uncore was much slower, moving those other threads and their data around would probably be more painful.

dttprofessor

Member

> In general, in many games, there are things that the E-cores can be doing that don't require extremely low-latency response. [...]

It's the work of APO.

igor_kavinski

Lifer

> Offloading to those cores can reduce the context switches needed on the P-cores, making them more efficient for throughput.

That's a good point, and it could be tested by launching a game with higher-than-normal process priority on the 250K to see if that improves performance, since the higher priority will minimize context switches on the P-cores.

Schmide

Diamond Member

This one is good. The others are a little dated. IMO

CouncilorIrissa

Senior member

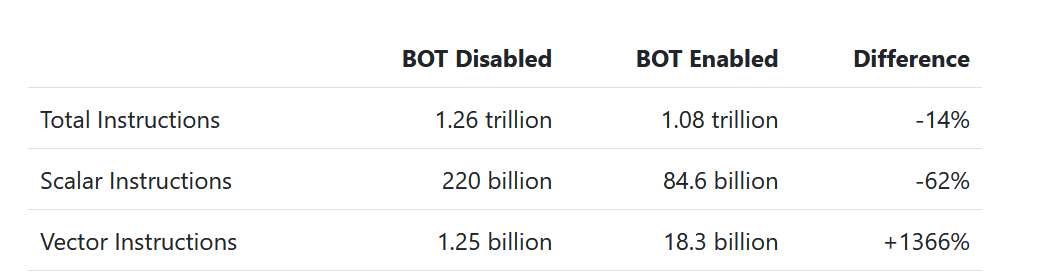

Analyzing Geekbench 6 under Intel's BOT - Geekbench Blog

gdansk

Diamond Member

> Analyzing Geekbench 6 under Intel's BOT - Geekbench Blog (www.geekbench.com)

Wow, I didn't think they'd include autovectorization.

If AMD follows along in these shenanigans autovec may not even be a net win for Intel.

dttprofessor

Member

apx ready!

> Wow, I didn't think they'd include autovectorization.
>
> If AMD follows along in these shenanigans autovec may not even be a net win for Intel.

It would be interesting to know whether they hand-tune the code or have some sort of algorithmic solution to the problem. Autovec usually leaves a lot of perf on the table.

> Analyzing Geekbench 6 under Intel's BOT - Geekbench Blog (www.geekbench.com)

A pity they focused only on the most shocking example. I was really curious what is behind the clang improvements 😉

LightningZ71

Platinum Member

AutoVec is TYPICALLY better than no vectorization at all. For CPUs with a full rate implementation of AVX-512, even those suboptimal gains could be substantial.

I haven't dug into the details yet. I wonder if it's even taking older pre-AVX2 code and vectorizing as much of that as it can to AVX2?

gdansk

Diamond Member

> I haven't dug into the details yet. I wonder if it's even taking older pre-AVX2 code and vectorizing as much of that as it can to AVX2?

They suggest it's converting scalar code into vector code. I assume Intel didn't have someone do that by hand (what a waste that would be).
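For anyone unsure what "converting scalar code into vector code" means in practice, here is a rough pure-Python sketch of the shape of the transformation. It is illustrative only: the lane count of 8 is an assumption (eight 32-bit lanes, as in a 256-bit AVX2 register), the function names are made up, and it is not a claim about what Intel's tool actually emits.

```python
LANES = 8  # assumption: eight 32-bit lanes, as in a 256-bit AVX2 register


def scalar_sum_of_squares(values):
    """Original scalar loop: one element per iteration."""
    total = 0
    for v in values:
        total += v * v
    return total


def vectorized_sum_of_squares(values):
    """Same computation restructured the way a vectorizer would do it."""
    acc = [0] * LANES                        # one partial sum per lane
    full = len(values) - len(values) % LANES
    for base in range(0, full, LANES):
        # On real hardware this inner loop would be a single SIMD
        # multiply-add across all lanes.
        for lane in range(LANES):
            v = values[base + lane]
            acc[lane] += v * v
    total = sum(acc)                         # horizontal reduction of the lanes
    for v in values[full:]:                  # scalar tail for leftover elements
        total += v * v
    return total


data = list(range(1, 20))  # 19 elements: two full vectors plus a tail of 3
print(scalar_sum_of_squares(data), vectorized_sum_of_squares(data))
```

With integers both versions give the same result. With floating point, the per-lane partial sums change the order of additions, which is one reason compilers are conservative about vectorizing reductions unless told that reassociation is allowed.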

igor_kavinski

Lifer

They've been doing that since GB 6.3 so almost two years they've been at it. I suppose that's when they got ARL Refresh silicon back from the fab or even NVL silicon!
