You have been offering the Application Optimization tool (APO) to improve the performance of apps and games since the 14th Gen Core. How does iBOT differ from APO?

Hallock: "First, let's talk about APO: that is a technology that works at the OS level. It controls how many threads a game uses and what priority they are given. Many games determine this themselves via a so-called affinity mask, but the underlying assumptions are not always correct. For example, a CPU with eight physical cores and eight virtual threads is then seen as a CPU with sixteen physical cores.

Because of that incorrect assumption, a lot of unnecessary work is started that the circuits in that CPU cannot process efficiently at all. Managing the overhead of all those threads subsequently causes the game to actually slow down. APO enables us to identify such cases and make the CPU respond better to the game.

However, APO cannot fix errors in the code at the architecture level. You need a different tool for that. Our Binary Optimization Tool really works at the execution level of the microarchitecture."
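The thread-count problem Hallock describes can be illustrated from the application side. The sketch below is not how APO works internally (APO acts at the OS level, and Intel has not published its logic here). It only shows the difference between sizing a worker pool by logical CPUs and sizing it by physical cores. The function name `pick_worker_count` and the `threads_per_core` parameter are hypothetical, and the code assumes every core exposes the same number of hardware threads, which is not true on every CPU.

```python
import os


def pick_worker_count(logical_cpus: int, threads_per_core: int = 2) -> int:
    """Size a worker pool by physical cores instead of logical CPUs.

    Assumption: every physical core exposes `threads_per_core` hardware
    threads. Real software should query the actual topology instead.
    """
    if logical_cpus < 1 or threads_per_core < 1:
        raise ValueError("counts must be positive")
    # Never return fewer than one worker.
    return max(1, logical_cpus // threads_per_core)


# os.cpu_count() reports logical CPUs, so a chip with eight cores and
# two threads per core is reported as 16.
logical = os.cpu_count() or 1
print("logical CPUs reported by the OS:", logical)
print("workers if sized by physical cores:", pick_worker_count(logical))
```

A game that starts sixteen workers on the CPU from Hallock's example creates twice as many busy threads as there are physical cores. Sizing by physical cores would start eight.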

Intel Application Optimization was introduced together with the 14th Gen Core processors and is enabled by default today.

Chynoweth: "To add something to what Rob said: I always say that APO optimizes hardware resources for the software and iBOT optimizes the software for the hardware. And the interesting thing is that a kind of gray area arises between those two, which, to be honest, we hadn't entirely foreseen when we started this. All sorts of interesting interactions emerge in that intermediate area."

Hallock: "So, APO and iBOT are different tools for different problems, on different layers of the software stack. Without those tools, gamers would end up in situations where somewhere between 5 and 25 percent of potential performance is locked behind an optimization choice that might not be right for the CPU the user actually has. We want to give that performance back to the user with APO and iBOT."

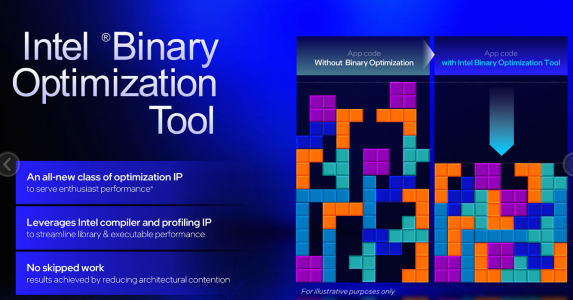

So how does Binary Optimization Technology optimize the software for the hardware?

Hallock: "The basic idea is that developers have to make choices when optimizing their software. This often happens based on a specific architecture or processor series; only well-funded developers can look at a lot of different CPUs in their lab. The architecture for which a program or game is optimized (which can sometimes even be console hardware or an Arm CPU) influences what kind of code and which instructions are used. Even if code is optimized for, say, a different x86 architecture, we can still run it, but that does have a major impact on performance.

With iBOT, Intel now has a tool to 'open' a compiled workload. We cannot extract anything from it, but we can streamline or reorder things so that it aligns better with the execution pipeline of modern Intel CPUs, for example, so that the machine code fills the cache better or utilizes the branch predictor better. With iBOT, we look at features within it that run slower than they could, to replace them on-the-fly with code that works better on Intel's x86 architecture."

Perhaps it would be good to briefly define exactly what 'machine code' is.

Hallock: "Machine code is an instruction at a very deep level. You are no longer looking at a programming language with context and logic, but at a kind of manual that tells the CPU what to do. For example: jump to memory address 0x00010 to retrieve a piece of data there."

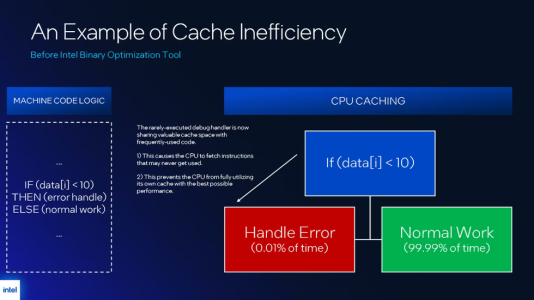

Can you give a practical example of how iBOT can optimize that machine code?

Hallock: "Let's take a hypothetical example of a program that caches something. On the left (in the image below, ed.) you see a simple 'if-then' construct. If this small piece of data has a value lower than 10, the next line of code goes to an error handler. And if that is not the case, you do the normal work instead. The 'then' is therefore the exception.

The only problem is that many algorithms for caching and branch prediction simply take the next line of code, that then-statement, as a starting point. But if that code is used in only 1 percent of cases, or even in 0.01 percent of cases, then the CPU caches something and jumps to something that is almost never used. That is a waste of valuable cache space and precious processor time."

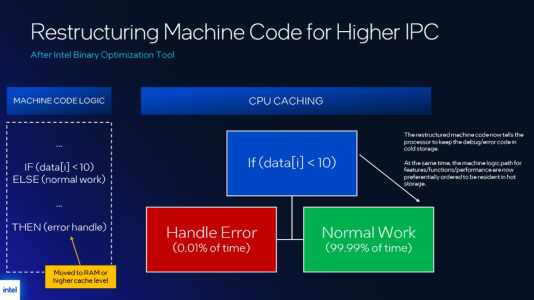

Hallock: "An example of how we can reorganize that with iBOT is to put the then statement in cold storage. You can move that to the L3 cache or even main memory, so that the actual working path is taken by default in the machine code.

So, the following happens: instead of first running the error handler, which fills the cache with things you probably won't use, you fill the cache with useful data that you *do* need. You then no longer discard important cache lines for a very exceptional scenario.

There are all kinds of examples like this in the field of caching, code hotspots, and branch prediction. But the idea is that we can restructure where and when the least used path ends up in the machine code, so that the processor can make better decisions as it runs through the code."
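The reordering Hallock describes can be sketched as a toy model. This is not Intel's implementation, which has not been published. It is a simplified stand-in for the general idea of moving rarely executed blocks out of the hot path based on profile counts. The function name `split_hot_cold`, the block names, and the 1 percent threshold are all assumptions chosen to mirror the example in the interview.

```python
def split_hot_cold(block_counts, cold_fraction=0.01):
    """Split code blocks into a hot section and a cold section.

    block_counts maps block name -> execution count, in current layout
    order. A block is treated as cold when it runs less often than
    `cold_fraction` of the most frequently executed block.
    """
    if not block_counts:
        return [], []
    peak = max(block_counts.values())
    hot, cold = [], []
    for name, count in block_counts.items():
        if peak > 0 and count / peak < cold_fraction:
            cold.append(name)  # rarely used: place it away from the hot path
        else:
            hot.append(name)   # keep original relative order
    return hot, cold


# Original layout: the error handler sits directly after the check,
# although the profile shows it runs once in 10,000 iterations.
profile = {"check": 10_000, "error_handler": 1, "normal_work": 9_999}
hot, cold = split_hot_cold(profile)
print("hot section: ", hot)
print("cold section:", cold)
```

In the resulting layout, `normal_work` directly follows `check`, so the code fetched next is the code that almost always runs, and the error handler no longer occupies cache lines next to the hot path.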

If you look purely at the machine code, you simply don't know whether a turn is taken almost always or almost never in practice. So, you have to run and analyze the code to know what is happening, it seems to me.

Chynoweth: "Yes, there is a lot involved. We record every jump taken in the code (a branch in jargon, ed.) in what we call the last branch records. There we see which branches are taken, where they jump from and to, and whether that branch was accurately predicted by the branch predictor."
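How such records could be turned into a profile can be sketched as follows. The record layout is an assumption based only on what Chynoweth lists (source address, target address, and whether the prediction was correct). The names `BranchRecord` and `summarize` are hypothetical and do not describe Intel's tooling.

```python
from collections import defaultdict
from typing import NamedTuple


class BranchRecord(NamedTuple):
    from_addr: int      # address of the branch instruction
    to_addr: int        # address the branch jumped to
    mispredicted: bool  # True if the branch predictor guessed wrong


def summarize(records):
    """Aggregate records per (from, to) edge.

    Returns a dict mapping each edge to a tuple of
    (times taken, times mispredicted, misprediction rate).
    """
    stats = defaultdict(lambda: [0, 0])
    for rec in records:
        entry = stats[(rec.from_addr, rec.to_addr)]
        entry[0] += 1
        entry[1] += int(rec.mispredicted)
    return {
        edge: (taken, missed, missed / taken)
        for edge, (taken, missed) in stats.items()
    }


# Made-up sample data, for illustration only.
sample = [
    BranchRecord(0x1000, 0x2000, False),
    BranchRecord(0x1000, 0x2000, True),
    BranchRecord(0x1000, 0x2000, False),
    BranchRecord(0x1040, 0x3000, False),
]
for (src, dst), (taken, missed, rate) in summarize(sample).items():
    print(f"{src:#x} -> {dst:#x}: taken {taken}, mispredicted {missed} ({rate:.0%})")
```

Edges that are taken often and mispredicted often are the candidates worth looking at first. Edges that are almost never taken point to code that could be moved out of the hot path.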

So, do you run a program on a sort of simulated processor, or on a real chip like the ones consumers have in their PCs?

Hallock: "That is exactly where our advantage lies: we use a real processor with a real workload. Everyone does code profiling, but generic tools for that don't give you specific details about what happens *inside* the processor. For certain aspects of the microarchitecture, you are simply blind.

The in-house developed tool we use, Intel Hwpgo, uses information from a performance monitoring unit in the hardware. It provides information about specific events and gives, for example, the address information and those last branch records. This allows us to see exactly where the bottleneck is in the processor. We originally devised this method for monitoring performance to help developers debug, but with iBOT, we have also found a great application for it for users."

Chynoweth: "What is important is that with this hardware-based profiling, you can be absolutely certain that your analysis is correct. Previously, developers often used an instrumented binary with all sorts of extra logging. However, that slows down execution by at least a factor of three; I have even seen a factor of ten. How do you then know whether the results of your profiling are caused by your own debug features or by real inefficiencies in the code?

Our measurements have less than one percent overhead. Hwpgo is without a doubt a superior method of profiling. It costs hardly any performance while you are doing it, and you can apply it to real binaries in a production environment."

What kinds of optimizations can you do with all that information?

Hallock: "The technology consists of various modules, which are best viewed as a family of tools that each intervene in architectural aspects of software in their own way – think of branching, caching, or code hotspots.

These modules are remarkably generic, because the problems they address recur throughout the code. Which module is deployed depends heavily on the context: which compiler was used, with which compiler flags, how long ago the code was built, what it was intended for at the time, and even which processor the developer had on their desk back then.

Exactly which modules we use differs per workload, but they all come from the same toolbox and have the same goal: identifying and resolving architectural bottlenecks in the code."

I noticed that while the provisional list of supported games includes some recent titles, such as Cyberpunk 2077, it still consists mainly of older games. That is apparently where the most potential lies. Is that because developers have started programming better, or because compilers are improving and have started using newer x86 features?

Chynoweth: "A bit of everything, actually. For iBOT, we primarily look at games that are popular but run inefficiently. We also apply it mainly to titles where we do not have the opportunity to collaborate with the developers. For Cyberpunk 2077, we previously worked with the developers on a major patch that yielded a performance gain of about 30 percent. But take Shadow of the Tomb Raider, a game that is no longer actively being developed. Yet, many people still play that game, and we know there are specific bottlenecks that stand in the way of better performance. In such cases, we deploy the Intel Binary Optimization Tool to improve that efficiency and achieve better frame times."

Furthermore, the focus so far has been almost exclusively on games. Is there also potential for iBOT in productivity software?

Hallock: "In theory, every category of software is eligible for binary optimization. But that immediately raises some more fundamental questions: is it really worth the effort? Is the performance gain significant enough? Is this a sensible use of engineering time? And how does the community view this – do they see it as something useful and positive, or not? Those are more philosophical considerations.

In practice, it doesn't matter that much whether it concerns creative applications, productivity software, or games: wherever there is room for optimization, we could apply binary optimization. That is ultimately our goal as well. At the same time, we realize that non-game workloads likely have a higher threshold, certainly given their reputation, both among users and in the media. That is why we are deliberately rolling out this technology cautiously and methodically, and participation remains opt-in.

We know how quickly the perception can arise of: 'Look, Intel turns on a feature and gets a higher benchmark score as a result.' We want to avoid that at all costs. We want to show that it is possible, and then work step by step towards a broader application. There is still enormous potential in this technology, but we are deliberately choosing a cautious approach so that the industry has time to understand what we are doing and it can develop gradually."

So, for example, you don't want to do Cinebench optimization, because people might see that as cheating?

Chynoweth: "It has to have a positive effect on the end user. Cinebench is a rendering benchmark, so let me take rendering as an example. We recently implemented Hwpgo together with Chaos Group, the developer of the popular renderer V-Ray. With optimizations similar to what we do with iBOT, we achieved an average performance gain of more than 15 percent. V-Ray customers, major film studios for example, benefited directly from that."

Maiorino: "That also shows that our engineers have built trust with partners like Chaos Group. Implementing a compiler update is no small feat; a lot can go wrong. Mike (Chynoweth, ed.) and his team have done an excellent job earning that trust and demonstrating that we want to unlock extra performance in close collaboration with our partners. That way, everyone benefits from that great performance gain."

You would think that heavy CPU workloads, such as rendering and video encoding, have already been optimized so extremely that there is little potential left. But you do see a future role for iBOT in those kinds of tasks, then?

Hallock: "If we take video encoders as an example, a large part of the optimization time in such projects is spent on making the encoder itself faster, such as during scene analysis and coding. In this regard, the focus lies primarily on the algorithmic optimization of the codec one intends to implement.

However, this does not necessarily mean that optimization has been specifically aimed at a particular CPU architecture. Developers want the encoder to perform well, but may not consider optimizations specifically targeted at a single architecture—and in open-source projects, they often do not feel a direct obligation to do so.

In such cases, therefore, there is certainly still room for improvement. A workload may already be intensively generically optimized, while there is still much to be gained from architecture-specific optimization."

Chynoweth: "It is precisely with codecs that we sometimes see our biggest performance gains, which makes this a particularly interesting domain for us."

In an earlier presentation, you said that iBOT is now starting with the Core Ultra 200 Plus CPUs, but will become a fixed pillar for performance improvements in future CPU generations from now on.

Hallock: "The first litmus test is actually the question: for which type of product is binary optimization useful? That will always be a product that is not bottlenecked by the GPU. For example, we do not apply it to Panther Lake 12 Xe, the configuration with a large integrated GPU. For an iGPU, it is very fast, but in many cases, it will be utilized to its maximum potential. In that case, there is little more to be gained from binary optimization; even if we make the CPU even faster, the GPU cannot suddenly produce more frames.

Panther Lake 4 Xe, with a less powerful iGPU, is specifically intended to be combined with a powerful discrete graphics card. Consequently, games in these systems will be bottlenecked by the GPU much less quickly, making it a more suitable scenario for iBOT. However, we do look at what makes sense per processor, which means the list of supported games can differ between, for example, Panther Lake for laptops and the Core Ultra 200 Plus CPUs for desktops.

Looking to the future, the rule of thumb is therefore: if the platform is designed to work with a dedicated graphics card, we will implement iBOT, in both laptops and desktops."

But such a graphics bottleneck only occurs in games. How does that apply to optimizations for pure CPU workloads?

Hallock: "That is correct; for pure CPU applications, any product can in principle benefit from binary optimization. But we are under no illusions; people buy these CPUs primarily for gaming, and that is why the ratio of games to other software in the support list is currently about 15 to 1."

You already mentioned that iBOT is not enabled by default; for now, it is opt-in. What makes you hesitant?

Hallock: "History is full of examples of 'turn on a feature and get more performance'. But that same history also shows that this did not always end well.

We understand very well that binary optimization might look like those earlier attempts at first glance. That is precisely why we felt it was essential to introduce this technology as conservatively as possible. We know that iBOT differs fundamentally from earlier attempts that may have looked superficially similar, but did something completely different in substance. Those earlier examples entailed risks for consumers and for public trust. That is why we are choosing to keep iBOT opt-in for the time being, so that people can get used to it gradually and fully understand what it does. We might enable it by default in the long run, but first we want to see how it is received. We felt that was the right, ethical approach.

You could, of course, say: this is great for gaming, so why isn't it just on by default? But we want to play it safe. In our opinion, that is better for ourselves, for the public, and for the industry as a whole."

Is that also due to the possibility that there are still side effects that you did not see in your tests, but which users might encounter? Or is it primarily a matter of perception and acceptance?

Hallock: "Especially that second point. Let me be clear: we don't collect telemetry, so we don't know who turns on iBOT. What we *do* do is pay very close attention to the sentiment: to your reporting, reactions on your site, comments on YouTube, Reddit, X, Bluesky, and so on. We monitor all of that closely to gauge what people think about it. Based on that, we can potentially adjust our choices or, conversely, confirm them. That remains to be seen.

Personally, I expect users will receive it well, by the way. After all, it's free extra performance. But at the same time, we understand that this is very different from how CPU optimization has been common practice over the past fifty years. Now we suddenly have an extra roadmap. We already had a hardware roadmap that leads to increasingly better gaming performance, but now we also have a software roadmap that improves gaming performance, at the same pace or perhaps even faster.

That is quite remarkable. There is nothing else like this. But when you introduce a major change, you can't change the world from one day to the next. That's not how it works. So we want to do this step by step."

Finally, what should our readers remember about iBOT?

Chynoweth: "I would say that iBOT is truly a game changer in a sense, because it is going to change how software and hardware interact. The software now runs more harmoniously with the hardware. And with APO, the hardware is in turn optimized for the software. Looking ahead, we are even already exploring additional capabilities within the CPU itself.

We are working directly with the CPU designers to see what information the chip can return to us in real time, on a nanosecond scale, and how we can use that not only to increase the average frame rate but also to address frame consistency and other issues affecting the user experience. We are going to focus on that even more aggressively in the future."

Maiorino: "From my side, I mainly want to emphasize that this is a real fps gain. These aren't fake frames, no weird AI things happening off-screen. These are real extra fps, and we know that really counts for gamers."

Hallock: "What I mainly want to convey to the readers of Tweakers is that we are not removing a single piece of work that the binary is supposed to execute. Everything that was in the source code is still happening. Nothing is being skipped. But we can achieve a lot of performance gain by ensuring that the code reaches the processor more compactly and efficiently.

iBOT is therefore a very pure form of performance optimization, because you keep everything as it was intended, but simply execute it better. That is what we are really proud of: that we can make a workload run faster, while showing respect for the original, by keeping all original requests intact."

I can imagine that it is sometimes very tempting to simply delete a piece of code if you see that something is being calculated unnecessarily or could easily have been skipped.

Hallock: "Yes, but it just stays in, because it was simply in the original. That is the right thing to do."

www.computerbase.de
