> Big dawg, those have DRAM requirements in 10s of gigabytes.

Kimi K2 is 1 trillion params.

Get real. Stop living under a rock. This guy still thinks local LLMs are 7B. 😅


Cool, but you know that this is off-topic.

This wank is in no way related to normal PCs with 16GB of DRAM.

Hence, I said Intel is missing the boat on unified memory with high capacity and high bandwidth.

> Cool, but you know that this is off-topic.

No, it isn't. Trace the replies back. You'll find that it is on topic.

> Hence, I said Intel is missing the boat on unified memory with high capacity and high bandwidth.

Really......

It was you who started by saying local LLMs = 7B models.

How expensive is a setup like that? Could you realistically get a server board + CPU + 2TB RAM and reach the same performance levels in the same budget? Would be interesting to see a comparison (since this is an Intel earnings thread, let's say you try it with Granite Rapids).

It entirely depends on RAM cost. You can get a 128-core GNR CPU for about $6K USD; the RAM is the only pain point.

Well, you're paying for RAM whether you buy the Mac Minis or the server board.

Well then, one maxed-out Ultra is $14,099 with 512GB RAM and 32TB storage.

> Really......

There exist models in the 64GB to 100GB range that machines like Strix Halo and M4 Max are great for. 1T is an example of what's possible locally now using consumer/prosumer hardware.

Like, do people choose to just be contrary because they have nothing else of value to add?

I am Microslop. I can develop a local LLM to do X/Y/Z.

I am picking my hardware target for mass adoption. Am I

1. going to pick a 7B/14B/etc. 8-bit model, or

2. going to pick a 1T model at any bit width?

Sigh.

> Well then, one maxed-out Ultra is $14,099 with 512GB RAM and 32TB storage.

Take out the 32TB SSD. That adds $5k to the final cost. Just use an external drive if you need more storage. Much cheaper.

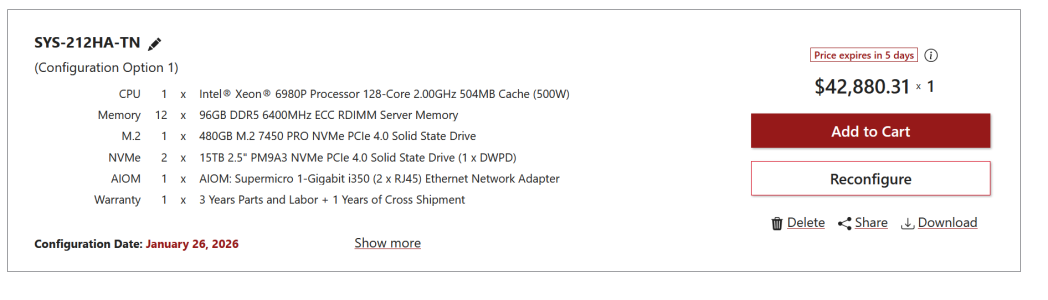

Here is a GNR server with a similar-ish configuration:


https://store.supermicro.com/us_en/configuration/view/?cid=1000429554&5554

FWIW, the RAM price was more than half of the server price.


> Take out the 32TB SSD. That adds $5k to the final cost. Just use an external drive if you need more storage. Much cheaper.

Uhh, the CPU can do that as well? Also, if you only want 512GB of RAM, then the cost comes way down with storage.

The most important thing here is the 512GB of unified memory.

So we're looking at $9.5k for a 512GB Mac Studio. You only need 1 Mac Studio to run Kimi K2 1T if you use the Q3 version.
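A rough way to sanity-check the Q3 claim is to multiply parameter count by bits per weight. The sketch below does only that, under loudly stated assumptions: weights dominate memory (KV cache, activations and runtime overhead are ignored), a "Q3" model is treated as exactly 3 bits per weight (real Q3/Q4 quantizations usually average somewhat more), and the `headroom` factor of 0.9 is a hypothetical safety margin, not a measured number.

```python
# Rough weight-memory estimate for running an LLM locally.
# Assumptions: weights dominate memory (KV cache, activations and runtime
# overhead are ignored) and a quantized model uses exactly `bits` bits per
# parameter. Real Q3/Q4 quantizations usually average a bit more than that.

def weight_gib(params: float, bits: float) -> float:
    """Approximate weight storage in GiB for `params` parameters at `bits` bits each."""
    return params * bits / 8 / 2**30


def fits(params: float, bits: float, mem_gib: float, headroom: float = 0.9) -> bool:
    """True if the weights fit within `headroom` (hypothetical safety margin) of `mem_gib`."""
    return weight_gib(params, bits) <= mem_gib * headroom


if __name__ == "__main__":
    cases = [
        ("7B @ 8-bit, 16 GiB PC", 7e9, 8, 16),
        ("14B @ 8-bit, 16 GiB PC", 14e9, 8, 16),
        ("1T @ 3-bit, 512 GiB Mac Studio", 1e12, 3, 512),
        ("1T @ 4-bit, 512 GiB Mac Studio", 1e12, 4, 512),
    ]
    for label, params, bits, mem in cases:
        # Print the estimated weight size and whether it fits under the headroom.
        print(f"{label}: ~{weight_gib(params, bits):.0f} GiB of weights, fits={fits(params, bits, mem)}")
```

Under these assumptions a 1T model at 3 bits needs roughly 349 GiB of weights, which leaves room in 512GB, while 4 bits (about 466 GiB) would not fit under the 0.9 headroom. A 7B or 14B model at 8 bits fits in a 16GB PC, at least on paper.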

> Uhh, the CPU can do that as well? Also, if you only want 512GB of RAM, then the cost comes way down with storage.

A CPU can’t have as many TFLOPs relative to die size.

AMX is purpose-built for AI; it's literally matmul for the CPU.
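To illustrate what "matmul for the CPU" means, here is a pure-Python sketch of the tile decomposition an AMX-style unit works on. Assumption: the tile shape is a 16×32 A tile times a 32×16 B tile accumulated into a 16×16 FP32 C tile, which is the maximum shape for the BF16 tile instruction (TDPBF16PS). This code does not call AMX, does no BF16 rounding, and says nothing about speed; it only shows the blocking pattern.

```python
# Illustrative tile decomposition in the spirit of Intel AMX BF16 tiles.
# Assumption: tile shape M=16, K=32, N=16. Pure Python, exact arithmetic:
# this demonstrates the blocking pattern only, not real AMX intrinsics.

TILE_M, TILE_K, TILE_N = 16, 32, 16


def tile_fma(c, a, b, i0, k0, j0, m, k, n):
    """One 'tile op': C[i0:i0+m, j0:j0+n] += A[i0:i0+m, k0:k0+k] @ B[k0:k0+k, j0:j0+n]."""
    for i in range(i0, i0 + m):
        for j in range(j0, j0 + n):
            acc = c[i][j]
            for kk in range(k0, k0 + k):
                acc += a[i][kk] * b[kk][j]
            c[i][j] = acc


def tiled_matmul(a, b):
    rows, inner, cols = len(a), len(b), len(b[0])
    if any(len(row) != inner for row in a):
        raise ValueError("inner dimensions do not match")
    c = [[0] * cols for _ in range(rows)]
    for i0 in range(0, rows, TILE_M):
        for j0 in range(0, cols, TILE_N):
            # Keep one C tile "resident" while streaming A and B tiles along K;
            # edge tiles are clipped with min() when sizes aren't multiples.
            for k0 in range(0, inner, TILE_K):
                tile_fma(c, a, b, i0, k0, j0,
                         min(TILE_M, rows - i0),
                         min(TILE_K, inner - k0),
                         min(TILE_N, cols - j0))
    return c


if __name__ == "__main__":
    print(tiled_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))
```

The point of the fixed tile shape is that the hardware keeps the C accumulator on-chip and performs a whole 16×32×16 block of multiply-adds per instruction, instead of one vector at a time.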

> Try running a large model like DeepSeek on Epyc or Xeon. It’s like watching paint dry.

Like I said, use a Xeon 6 with AMX.

> AMX is purpose-built for AI; it's literally matmul for the CPU.

A CPU will never have the combination of TFLOPs and memory bandwidth that can beat a GPU in inference efficiency.
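Whether TFLOPs or memory bandwidth matters more can be sketched with a simple roofline estimate for single-user decode. Assumptions: every active weight is read once per generated token, compute is about 2 FLOPs per active parameter per token, and KV-cache traffic, caches and batching are ignored. The 32B active-parameter figure for Kimi K2 is its published MoE active size; the 30 TFLOPs / 800 GB/s machine in the usage is hypothetical, not any specific product.

```python
# Back-of-envelope roofline for single-stream (batch size 1) LLM decode.
# Assumptions: every active weight is read from memory once per generated
# token, compute is ~2 FLOPs per active parameter per token, and KV-cache
# traffic, on-chip caches and batching are ignored. The hardware numbers
# are inputs supplied by the caller, not measurements.

def decode_roofline(active_params: float, bits: float,
                    peak_tflops: float, bandwidth_gbs: float) -> dict:
    bytes_per_token = active_params * bits / 8
    flops_per_token = 2 * active_params
    intensity = flops_per_token / bytes_per_token         # FLOPs per byte of weights read
    ridge = peak_tflops * 1e12 / (bandwidth_gbs * 1e9)    # intensity at which compute becomes the limit
    tok_s_memory = bandwidth_gbs * 1e9 / bytes_per_token  # ceiling from bandwidth alone
    tok_s_compute = peak_tflops * 1e12 / flops_per_token  # ceiling from compute alone
    return {
        "intensity": intensity,
        "ridge": ridge,
        "memory_bound": intensity < ridge,
        "tokens_per_s_ceiling": min(tok_s_memory, tok_s_compute),
    }


if __name__ == "__main__":
    # Kimi K2: ~32B active parameters per token (MoE), here at 3 bits.
    # 30 TFLOPs / 800 GB/s is a hypothetical machine.
    print(decode_roofline(32e9, 3, peak_tflops=30, bandwidth_gbs=800))
```

For that hypothetical machine the sketch reports an intensity of about 5.3 FLOPs/byte against a ridge of 37.5, so decode is memory-bound with a ceiling around 67 tokens/s. Batching multiplies the FLOPs done per weight byte read, which is where extra compute (AMX or a GPU) starts to pay off.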

Like I said, use a Xeon 6 with AMX:

Cost Effective Deployment of DeepSeek R1 with Intel® Xeon® 6 CPU on SGLang | LMSYS Org

The impressive performance of DeepSeek R1 marked a rise of giant Mixture of Experts (MoE) models in Large Language Models (LLM). However, its massive mode...

lmsys.org

Then we have this:

> Well then, one maxed-out Ultra is $14,099 with 512GB RAM and 32TB storage.

So the Mac solution in this case winds up costing $10k more. Not sure how the performance stacks up, but if what you really need is 2TB of RAM for your local LLM . . .

...So Intel is trapped. They underinvested for a decade and are now literally caught up by it. Capacity is finite and those limits have been reached; expansion is years away if started now, and the starts that were in progress were scaled back. Were they scaled back correctly? Possibly. This depends on your views about spending like a drunken sailor: had that been done five years ago, what would the payout be now? And how would a potential foundry customer view this?

So all of the pieces come down to the board and their lack of competence or worse. The multi-billion-dollar skeletons in the closet that were papered over, buried, and never even acknowledged publicly meant no one was ever held accountable. The rot continued and money wasn’t spent on things it should have been. Every FPGA propping up Ericsson et al for 5G base stations was a brick that wasn’t put in a new fab, and so on. As things stand now, Intel has a new CEO in the hot seat but the problem remains on high as the company suffers. Don’t look for real solutions any time soon, just more denials and lack of accountability. S|A

www.semiaccurate.com


To get this thread back on track, Charlie has a write-up of his thoughts:

Intel Is In A Serious Bind With Few Options

Last week’s Intel Q4/2025 analyst call was a disaster that has a single root cause but no solution.

www.semiaccurate.com

> Everybody has moved on, but not Charlie.

Pat's problem is he came too late. His plan, if they had started 3-5 years earlier, when everyone here could see the coming pain but Intel's financials still looked good, would have had a good shot at getting back foundry leadership. But all that money was already paid out in dividends. The problem is you're not going to catch up to TSMC by spending less.

Charlie still has a crush on Pat Gelsinger. Gelsinger can do no wrong in Charlie's eyes.

> Welp, after selling off their memory business in the early 2020s to SK Hynix, Intel is trying to get back into the memory business. Given their prior history of constantly missing the boat, I wonder how this will fare. Will the memory supply catch up by 2029? Will AI demand fall off by then?
>
> https://www.softbank.jp/en/corp/news/press/sbkk/2026/20260203_01/

? Intel hasn't been in DRAM for some time. Unless you count Optane as DRAM (it isn't), but that wasn't sold to SK Hynix.


It is another non-standard memory. Intel got on board with Rambus back in the 2000s but did not manufacture it themselves, and pushed XPoint in the 2010s, which they did manufacture. So I guess this is their failed custom non-DRAM memory for the 2020s. (Yeah, I know RDRAM was DRAM, but XPoint wasn't and neither is this.)

Saw today they are also getting back into GPUs. I suppose these announcements work well with Wall Street analysts, who mostly aren't very cognizant of the immense difficulty of pushing a new memory standard, or of how long it'll take to go from "we're back in the GPU business" to shipping GPUs versus how long the AI bubble has left.