Do you have a MacBook Adorable, or a different computer? (The real name is just the MacBook, but some people use nicknames like MacBook One.)

The Core m3-7Y32 can go all the way up to a 1.6 GHz base clock with cTDP up, but Apple settled on 1.2 GHz for the MacBook Adorable.

I am an ATP podcast fan, and Casey Liss recently got the 2017 MacBook Adorable, which he loves:

https://www.caseyliss.com/2017/6/25/macbook-adorable

Marco hated his 2015 MacBook Adorable, but then he hates everything:

https://marco.org/2015/05/19/mistake-one

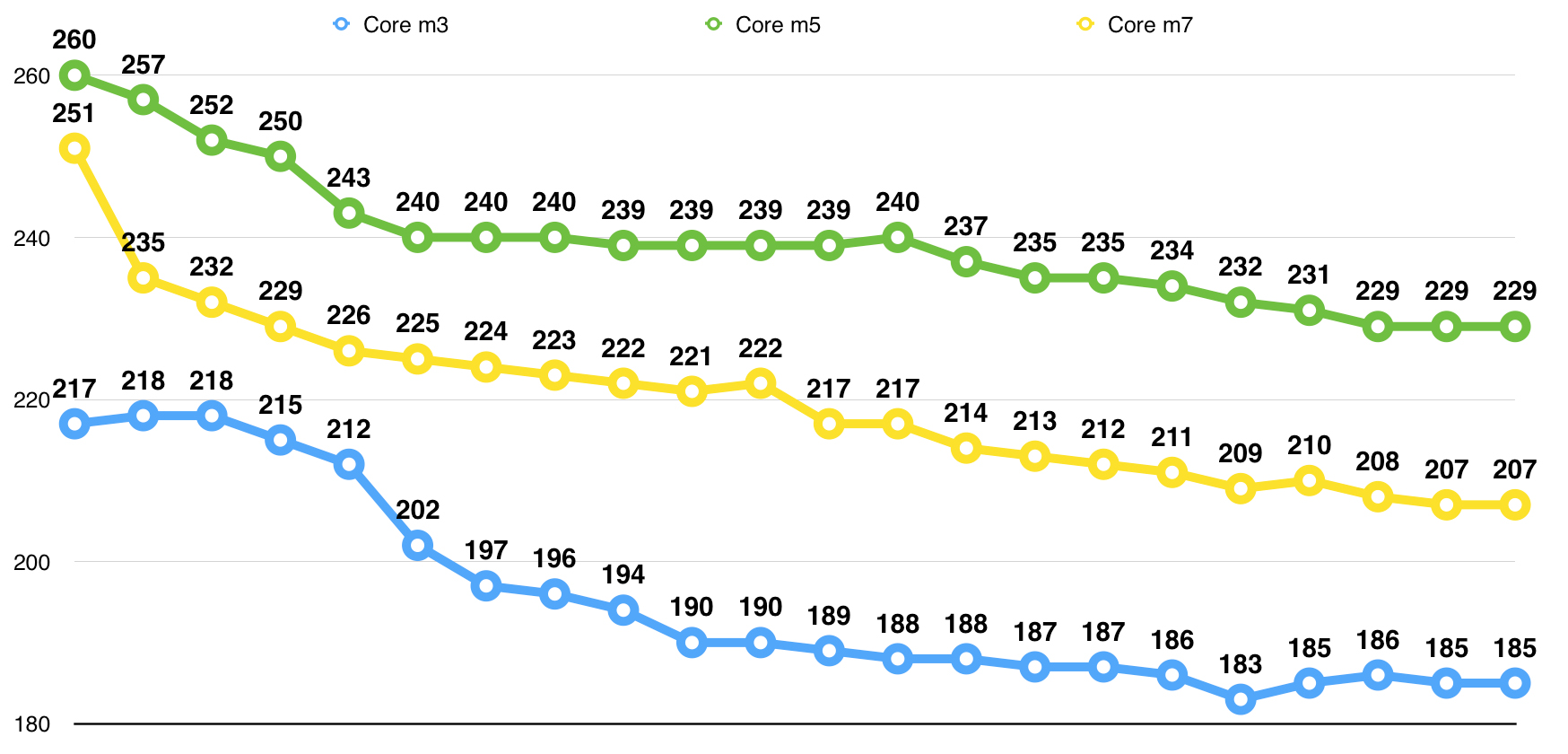

Honestly, though, I see very little reason to get the i5 or i7 when the Core m3-7Y32 is such a good processor. A 20% faster turbo is not worth $250 more for the i7 over the m3.

No nicknames.

I was all set to buy a Core i5 MacBook, until I learned that Intel added a SKU. I was aware of the 7Y30 but was not impressed with its specs. Intel later added the 7Y32, which not only drastically increased the Turbo Boost speed but also added full HDCP 2.2 support (as did a later stepping of the 7Y30).

When I found out about the new m3 and that Apple was using it for the base model MacBook, I decided to get the m3 instead of the i5. The main reason now IMO to get the i5 is to get a 512 GB SSD. You can't get that with the m3. (I don't need it though, so I got 256 GB. I keep my main data on a NAS, and my primary machine is an iMac with a 1 TB SSD. I put the extra money toward RAM, so my config is m3 / 256 GB / 16 GB.)

I kind of agree with both those bloggers. My favourite PowerBook form factor was the 12" PowerBook. After the MacBook Air came out I thought it was a big step in the right direction, but the font size was off, and the screen quality was shit. Plus it was slow as hell. So I waited, and wished for an 1152x720 12" MacBook Air. Then when the Retina MacBook Pros came out I changed my wish to a 2304x1440 12" MacBook Air.

Then Apple released the 2304x1440 12" MacBook in 2015. Yay!

Except I hated it. Again it was kinda slow, but more importantly, its butterfly keyboard sucked. Plus it only had one USB-C port. It was the laptop I had been waiting for for eons, but they crippled it in so many ways. So I waited until 2016 and nothing really changed, plus by then I figured I may as well wait for Kaby Lake's HEVC support.

Luckily I waited until 2017, because the m3 in 2017 is quite a noticeable boost in speed over the m3 in 2015, although I suspect it's a combination of SSD and CPU speed, since the SSD is way faster now too. Read speeds on that SSD are about 1.4 GB/s and write is over 1 GB/s. Nice. That's with a 256 GB drive BTW. Perhaps the 512 is faster, I'm not sure. But even more importantly, the new keyboard is a vast improvement. It's still not an ideal keyboard IMO, but it's gone from really annoying in the 2015 to moderately decent in the 2017. However, the keyboard that comes with the iMac in 2017 is much nicer. They're both butterfly keyboards, but the one for the iMac is more substantial with better key travel.

BTW, I was just playing around with the 120 Mbps jellyfish 10-bit 4K HEVC video from here:

http://jell.yfish.us/

The files are MKV so QuickTime can't understand them, but I remuxed the file to m4v. QuickTime in High Sierra 10.13 takes that file just fine, but playback on the Core m3 stutters a bit. I figured it may be the GPU, since the CPU usage always remained low, even with this 120 Mbps bit rate. The base clock speed of the GPU is 300 MHz in the m3, i5, and i7 alike, but the boost speeds are 900, 950, and 1050 MHz respectively. I thought perhaps it would play better on the i7, I dunno.
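For a rough sense of how much GPU headroom the pricier chips would buy, here is a quick back-of-the-envelope sketch in Python. It uses only the boost clocks quoted above; the `relative_gain` helper is just an illustrative name, and clock speed alone is of course only a crude proxy for real decode performance.

```python
# GPU boost clocks in MHz, as quoted in the post above.
boost_mhz = {"m3": 900, "i5": 950, "i7": 1050}

def relative_gain(chip, baseline="m3"):
    """Percentage increase in GPU boost clock over the baseline chip."""
    return (boost_mhz[chip] / boost_mhz[baseline] - 1) * 100

for chip in ("i5", "i7"):
    print(f"{chip}: {relative_gain(chip):.1f}% higher GPU boost clock than m3")
```

So on paper the i7's GPU tops out at roughly a sixth higher clock than the m3's, and the i5 is closer to a twentieth.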

However, the strange part is I then tried the 8-bit version of the file and got the exact same results. Then I took that original 120 Mbps 10-bit HEVC MKV and transcoded it in HandBrake using the 4K Roku preset, and ended up with a file that was just 18.5 Mbps and much, much smaller of course. However, that 18.5 Mbps file gave me the same stuttering in the same spots as the original 120 Mbps file, so perhaps it's not the GPU speed after all, and instead is some peculiarity with the way the file was encoded, and QuickTime simply doesn't like it.
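To put the two bit rates side by side, a small Python sketch of the arithmetic. It only uses the 120 Mbps and 18.5 Mbps figures from above; the function name is made up for illustration.

```python
def mbps_to_mb_per_s(mbps):
    """Convert megabits per second to megabytes per second (8 bits per byte)."""
    return mbps / 8

original_mbps = 120.0    # jellyfish 10-bit 4K HEVC source
transcoded_mbps = 18.5   # HandBrake 4K Roku preset output

# Fraction of the original bit rate that the transcode retains.
ratio = transcoded_mbps / original_mbps

print(f"original:   {mbps_to_mb_per_s(original_mbps):.2f} MB/s")
print(f"transcoded: {mbps_to_mb_per_s(transcoded_mbps):.2f} MB/s")
print(f"transcode is {ratio * 100:.1f}% of the original bit rate")
```

The transcode carries only about 15% of the original data rate, so if raw throughput were the problem I would expect the stutter to go away, which it doesn't.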