This puts the whole argument "a card that scores better at higher resolution tends to last longer" completely on its head.

Absolutely not. The whole point is to look at overall trends and treat outliers in context. What has been the overall trend in the last 20 years of GPUs? The card that performs better at higher resolutions lasts longer in probably 90% of previous GPU generations. Conversely, the card that performs better at 1080p but not as well at higher resolutions eventually loses most of its 1080p advantage as future games become more GPU demanding. There are of course exceptions to this rule, but on the whole the 960 is likely a far worse bet for keeping over 2-3 years than the R9 380/280X and especially the 290. Considering it's still possible to buy an R9 290 for $245 in the US, while most 960 4GB cards still sell for $200, it's shocking that people still keep buying the 960. Well, I guess it's shocking to me since I wrongly presumed that most mainstream GPU buyers would eventually wake up and start doing GPU research, but I guess I am wrong generation after generation. It also doesn't help when "professional" sites like TechReport or HardOCP recommend GTX950/960 cards over far superior options, fully ignoring VRAM bottlenecks, fully ignoring raw GPU horsepower that will come into play with future games, fully ignoring the wide range of performance in more than 7-8 games, etc. And guess who the mainstream gamer is going to listen to?

😛

Can GTX950/960/960 4GB owners get a refund check now? Nope. Back to upgrade land in 2016. I am sure NV is loving that.

Whoa! Could they be more biased if they tried?

What do you mean? I guess I am missing something? Looks like the older architectures (Fermi and VLIW-4) are getting hammered -- possibly some internal bottlenecks across the board -- pixel, shaders, textures, etc. R9 280X is 120% faster than the HD6950. Remember when people said HD7970 was a small upgrade from HD6970? :awe:

While the FPS are not directly comparable to Guru3D's testing, the overall standing seems somewhat similar.

960 < 770 < 380 < 280X < 780 < Titan < 970 < 780Ti < 290 < 980 < 390X < Fury < Titan X < 980Ti.

They don't have the flagship Fury X in their charts though.

I found this interesting:

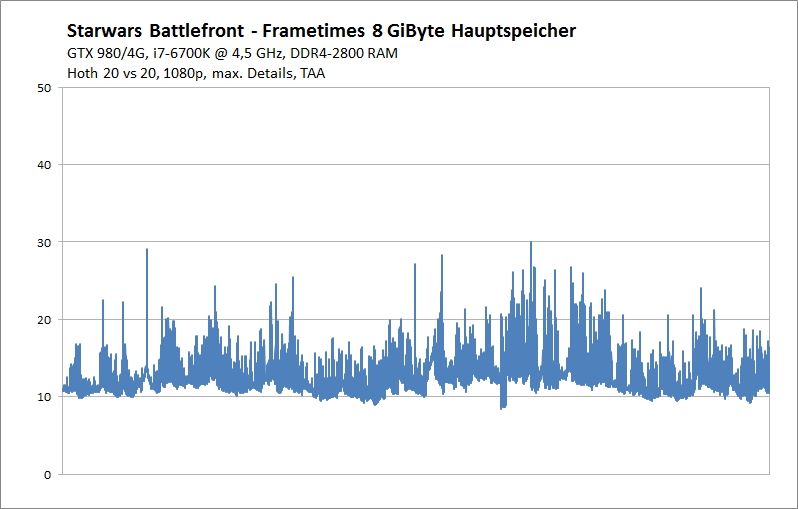

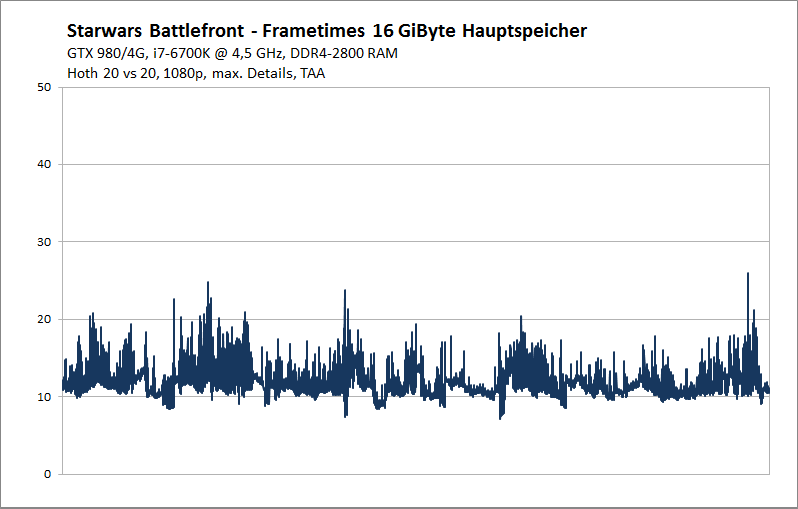

"The graphics load is comparable to the online battles, but the CPU load is lower. We make one exception for the memory measurements with 8 and 16 GiByte of main memory, which we recorded on the more demanding Hoth map in Walker Assault mode with 40 players. Note that the frametimes here cannot be reproduced 1:1. Nevertheless, the difference is clearly visible and at times can even be felt:

The frametimes with only 8 GiByte of RAM are significantly worse, and slowly clocked memory could make this worse still. The 16 GiByte of main memory that DICE lists as a recommendation certainly seems sensible for undiluted gaming enjoyment."

This is one of the few times, if not the first, that I have seen a real frame-time benefit from moving from 8GB to 16GB of RAM.

This game is still in beta though, so chances are we'll see 2-3 more AMD/NV drivers and game patches that should improve performance further on both vendors' cards.