Paratus

Lifer

Ha, I don't know. That's a good test of the theory!

Get Doom, test it and post results here 😀

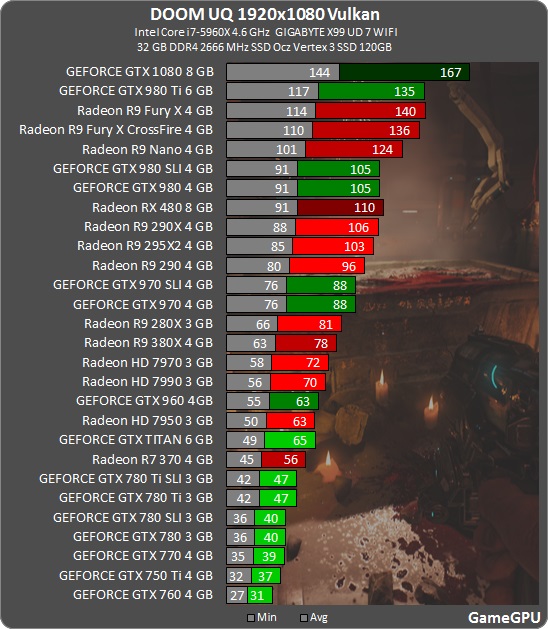

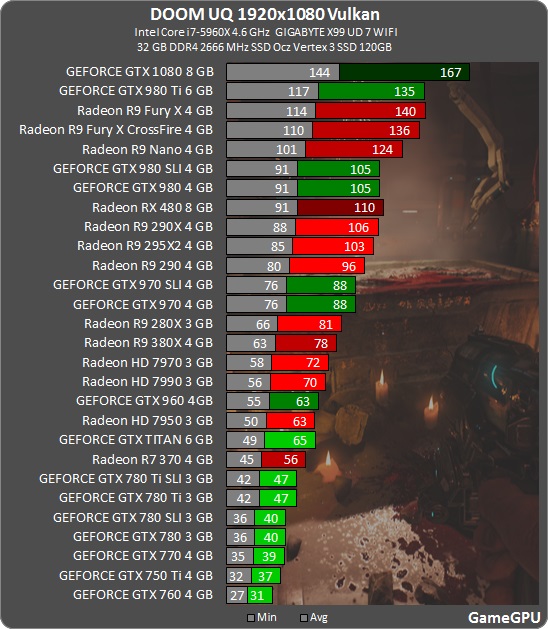

Faster than OG Titan😱😎

The Titan getting almost 20 fps more than a GTX 780 Ti? Buahahahah... there goes their credibility...

Could be partially due to RAM management in Vulkan on Nvidia...?

Can you run Doom on Vulkan under linux?

He died so that our framerates might be high and our temperatures cool.

Bit misleading, as the original FLOPS numbers are completely wrong.

The 1070 is rated at 6.4 TFLOPS, and that's without taking the extra boost headroom into account.

It could range anywhere from 6.4 to 7.2 TFLOPS (at 1.9 GHz).

Same story for the 970, 980, ...

The 980 Ti has 6 TFLOPS, which can range up to 6.7 TFLOPS (at 1.2 GHz).

The RX 480 number is also misleading, as it gives 5.8 TFLOPS max.

Edit: the 970 would push 3.9 TFLOPS (not 3.4), probably ranging up to 4.2 TFLOPS (at 1.25 GHz).
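For reference, peak FLOPS figures like these come from a simple formula: each shader core can retire one fused multiply-add (counted as 2 floating-point operations) per clock. A minimal Python sketch, assuming the published shader counts (1664 for the 970, 2816 for the 980 Ti, 1920 for the 1070, 2304 for the RX 480; these counts are not from the thread) and the clocks quoted above:

```python
# Theoretical peak FP32 throughput, assuming one FMA (= 2 FLOPs)
# per shader core per clock cycle.
def peak_tflops(shader_cores: int, clock_ghz: float) -> float:
    """Return theoretical peak FP32 TFLOPS for a core count and clock (GHz)."""
    return 2 * shader_cores * clock_ghz / 1000.0


# Published shader counts (assumption: not stated in the thread itself).
CARDS = {
    "GTX 970": 1664,
    "GTX 980 Ti": 2816,
    "GTX 1070": 1920,
    "RX 480": 2304,
}

if __name__ == "__main__":
    # Clocks taken from the posts above (RX 480 at its 1.266 GHz boost clock).
    for name, clock in [("GTX 970", 1.25), ("GTX 980 Ti", 1.2),
                        ("GTX 1070", 1.9), ("RX 480", 1.266)]:
        print(f"{name} @ {clock} GHz: {peak_tflops(CARDS[name], clock):.2f} TFLOPS")
```

These are ceilings, not measurements: real games rarely keep every core busy every cycle, which is why peak TFLOPS is only a rough basis for comparing cards.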

That still doesn't explain why a game like Doom is so compute-hungry that its performance scales linearly with TFLOPS.

A lot of console game engines have shifted graphical effects over to compute-based effects to make better use of the hardware they have. It started with Sony and the PS4, and now Microsoft and the Xbox are on board with games like Quantum Break and Forza, amongst many others. id is just following suit with its graphics engine.

The upshot is AMD based PC gaming is also getting the *massive* performance gains from these optimizations originally started for consoles.

As far as I know, everyone expected Nvidia to get these performance gains too, but when developers tried to use the async scheduler on Nvidia hardware, it didn't work. And that's where we are today.
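On the API side, "async compute" usually means submitting compute work to a separate queue so the driver can overlap it with graphics. In Vulkan, engines commonly look for a queue family whose flags include compute but not graphics. A minimal Python sketch of that selection logic, assuming the flag list stands in for the `queueFlags` values returned by `vkGetPhysicalDeviceQueueFamilyProperties` (the function name `find_async_compute_family` is hypothetical):

```python
from typing import List, Optional

# Bit values of VkQueueFlagBits from the Vulkan specification.
VK_QUEUE_GRAPHICS_BIT = 0x1
VK_QUEUE_COMPUTE_BIT = 0x2
VK_QUEUE_TRANSFER_BIT = 0x4


def find_async_compute_family(queue_family_flags: List[int]) -> Optional[int]:
    """Return the index of a compute-capable queue family that lacks
    graphics support (a typical 'async compute' candidate), or None."""
    for index, flags in enumerate(queue_family_flags):
        # `&` binds tighter than `and`/`not`, so each test checks one bit.
        if flags & VK_QUEUE_COMPUTE_BIT and not flags & VK_QUEUE_GRAPHICS_BIT:
            return index
    return None


if __name__ == "__main__":
    # Hypothetical device: one do-everything family, one compute+transfer
    # family, one transfer-only family.
    families = [VK_QUEUE_GRAPHICS_BIT | VK_QUEUE_COMPUTE_BIT | VK_QUEUE_TRANSFER_BIT,
                VK_QUEUE_COMPUTE_BIT | VK_QUEUE_TRANSFER_BIT,
                VK_QUEUE_TRANSFER_BIT]
    print(find_async_compute_family(families))
```

Note that finding such a queue only gives the driver the opportunity to overlap work; whether graphics and compute actually execute concurrently is up to the hardware and driver, which is exactly the point being argued here.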

Sure, but it's scaling linearly up to the Fury X's 8 TFLOPS (at least)... doesn't that look like a bit much for a game like this?
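One way to put numbers on "scaling linearly" is to divide each card's measured FPS by its peak TFLOPS: if FPS is proportional to TFLOPS, those ratios come out roughly equal. A minimal Python sketch, assuming you supply your own (TFLOPS, FPS) pairs per card (the benchmark values from the posted images are not reproduced here, and the function names are hypothetical):

```python
from __future__ import annotations

from statistics import mean, pstdev


def fps_per_tflop(results: dict[str, tuple[float, float]]) -> dict[str, float]:
    """Map each card name to its measured FPS divided by its peak TFLOPS."""
    return {card: fps / tflops for card, (tflops, fps) in results.items()}


def scaling_spread(results: dict[str, tuple[float, float]]) -> float:
    """Coefficient of variation of the FPS/TFLOPS ratios.

    Close to 0 means FPS is roughly proportional to TFLOPS;
    larger values mean other bottlenecks (bandwidth, CPU, driver) matter.
    """
    ratios = list(fps_per_tflop(results).values())
    return pstdev(ratios) / mean(ratios)
```

Usage: `scaling_spread({"card A": (tflops_a, fps_a), "card B": (tflops_b, fps_b)})` with your own measurements at the same settings and resolution. Differences in clocks during the run will skew the result, so it's worth logging the actual clocks too.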

Is Vulkan going to be the go-to API for programmers in the next generation of PC games? If so, I think I may hold off on a 1070 and see where the dust settles.

If you look at the major AAA games coming out in a few months and towards the end of the year, it's all DX12.

Vulkan is still rare. ATM it's only Valve, id Software and a smaller indie group.

DX12 has momentum and I am sure MS is sending its software engineers to studios to "help" them move to DX12. It maintains their ecosystem. Vulkan is a threat to MS.

That link also links to a list of DX12-supported games. The list looks much longer, but the number of games with currently useful DX12 support is still pretty low, too.

Though, for me, Civ VI and Doom and TW:Warhammer are games I'd play to death so I guess that is enough games for me.

Hooray for franchises that have been around since forever! (1991, 1993, 2000)

Since late March 2016 we started working daily with both AMD and NVIDIA. Both have been great partner companies, helping bring full DOOM and Vulkan driver support live to the community. There was a lot of work on all fronts but we are pleased with the results.

https://bethesda.net/#en/events/game/doom-vulkan-support-now-live/2016/07/11/156

FYI, since many people have been complaining that id worked only with AMD: you failed to read the patch announcement.

Nvidia also had id on stage to do the initial reveal of Vulkan support with the 1080 announcement.

You do understand that the point of compute is to take things that were done in the fixed-function pipeline and do them in compute shaders, because you want to do them differently from how the fixed-function hardware allows you to.