The GTX 670 wins not only over the HD 7970 but the HD 7970ghz edition. Why are you using this OC edition and blanketing all HD 7970's?

How about a factory GTX 670 OC model vs a Factory HD 7970 OC model and then compare performance, power efficiency, acoustics, thermals and price.

How is it 5 pages into this thread and you are still on this? I already addressed both of those points earlier in the thread, but maybe you missed it -- here.

It's been stated at least 3-4x in this thread the discussion is about GTX670 at $400 OR $420-440 1000-1100mhz for quiet after market versions of HD7970 which

may be worth spending the extra $ for at this resolution

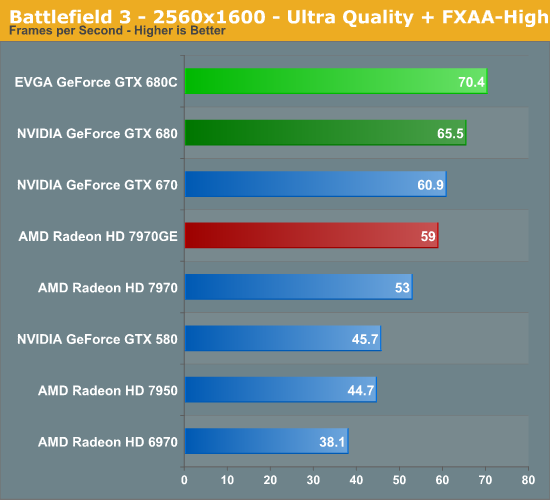

for the OP. Not one person in this thread has once said anything about a 925mhz HD7970. This isn't about HD7970 vs. GTX670, but what's the best card around $400 range is for 2560x1440/1600 and providing info around that to help the OP make a more informed decision and provide other gamers who haven't upgraded yet with more up-to-date information using the latest drivers.

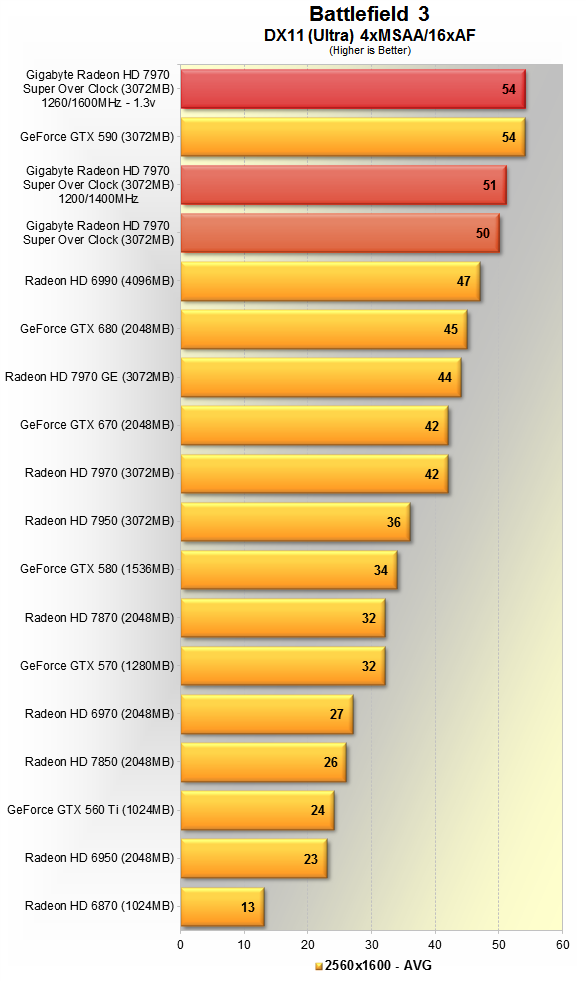

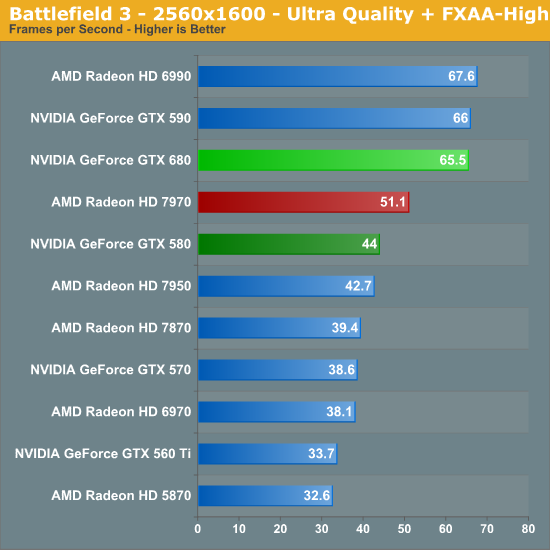

Also, in case you want to know, GTX670 doesn't even win against a stock 925mhz HD7970 at 2560x1440/1600:

Computerbase

TPU

So let's not start making stuff up now.

Well, well...

waiting to see what RussianSensation has to say about this

5 pages later and we still get a post such as this one below despite 5 professional reviews stating otherwise.

AMD didn't take the performance crown back.

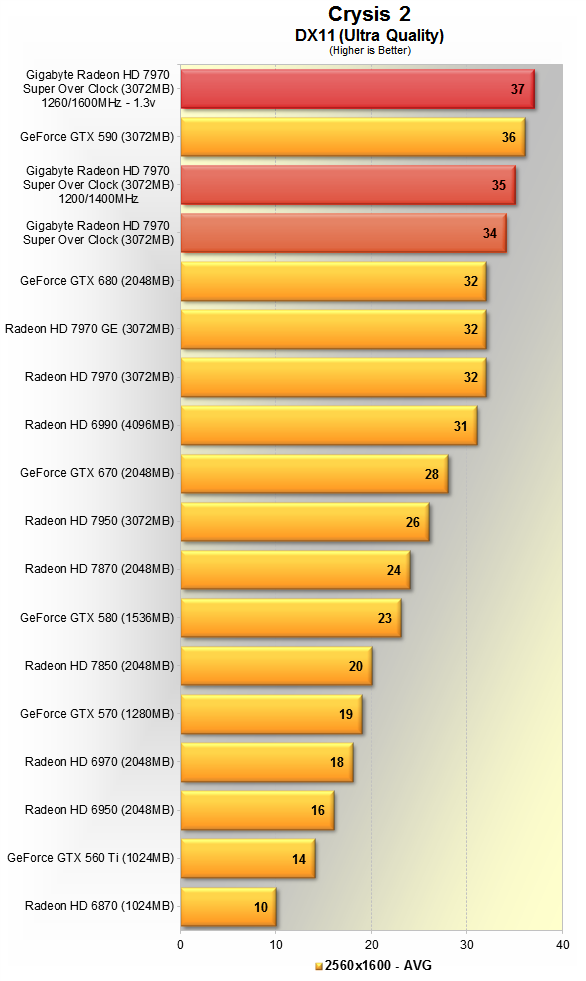

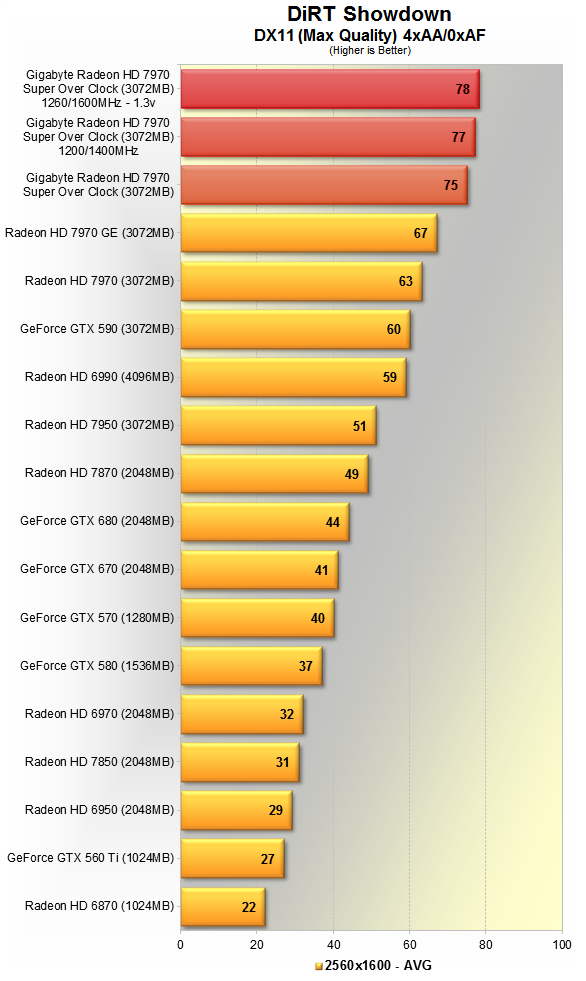

Can you please link 1 review that shows that HD7970 (esp. at 1000-1100mhz) isn't faster than GTX670 at 2560x1440/1600? Also, feel free to find a review anywhere where a GTX680 is recommended over HD7970 for higher resolutions (not talking about SLI drivers here). I provided information from at least 5 separate professional publications (TechPowerUp, Computerbase, HT4U, TechReport, TechSpot) and in each of those HD7970 1050mhz is the fastest GPU in the world.

Here is Review #6 from KitGuru:

"Until today, the incredible KFA2 GTX680 Limited OC Edition claimed the ultimate single GPU performance spot, however in the majority of the real world game testing, the Sapphire HD7970 6GB Toxic Edition managed to outperform the overclocked GTX680. The performance results are unquestionably impressive. In 7 out of 11 tests, The Sapphire HD7970 6GB Toxic Edition outperformed the KFA2 GTX680 Limited OC Edition."

Here is Review #7 from Xbitlabs, where a GTX680 @ 1290mhz couldn't even beat an HD7970 @ 1165mhz, which means GTX670 has no chance at all.

Here is Review #8 from bit-tech.net: "the 7970 3GB GHz Edition is the fastest single GPU card when compared to the GTX 680 2GB across all of our benchmarks"

This is a really big point, and just what I was thinking as I read RussianSensation's first couple of posts in this thread. He was referring to how others were posting benchmarks using outdated drivers, that they were irrelevant now. No, not really. In fact they pretty much drive home a simple point. BF3 was released October of last year and in public beta for quite a bit before that. It's taken how long now for AMD to be "on par"??? This isn't a one off occurrence, it happens over and over with AMD, and it's amplified further under multi-gpu situations.

BF3's performance was fixed right around the launch of HD7970 GE (June 22nd). GTX680 launched on March 22, 2012 (or almost 3 months after HD7970). So AMD fixed BF3 performance about 3 months after GTX680 launched. Until March 22nd, HD7970 (esp. overclocked) was faster than GTX580 in BF3. So if waiting 3 months for good BF3 performance is such a big deal for you, you would have been gaming on an HD7970 for those 3 months rather than waiting for GTX680, since HD7970 was faster than GTX580. If BF3 performance was such a big deal, how did you endure it on GTX500 series cards until March 22nd then?

Secondly, what about the games where AMD was faster from Day 1: Anno 2070, Bulletstorm, Serious Sam 3, Alan Wake, Metro 2033, Deus Ex? Those don't count for some reason? What about games where NV's performance was laughable due to their own driver issues -- you didn't mention Shogun 2, despite that being a modern game like BF3 is. Let's not pretend only AMD has driver issues with newer games.

Thirdly, this isn't about NV vs. AMD. I don't know why you guys started talking about how things were in March, etc. How does this apply to this particular thread and the recommendation to the OP? I provided information to the OP regarding current up-to-date drivers and performance in games. It's not about how GTX680 performed 3 months ago but what the best card is for 2560x1440/1600 today. Also, why is SLI even being brought into this? No one is saying anything about multi-GPUs or drivers for such setups. Please read the OP. The responses specifically addressed single-GPU gaming at 2560x1440/1600.

I figured this thread couldn't possibly be neutral, especially since with the latest drivers and price drops AMD has regained both the price/performance (HD7950) and performance (HD7970 GE) crowns. This is exactly why this thread is so useful: the perception of the cards still seems stuck in March 2012, but that's no longer the case. New buyers should be aware of more up-to-date information imo.