These raw frame-rate comparisons are akin to a VR experience where you stare at a static scene.

They don't measure the most important metric: motion-to-photon latency.

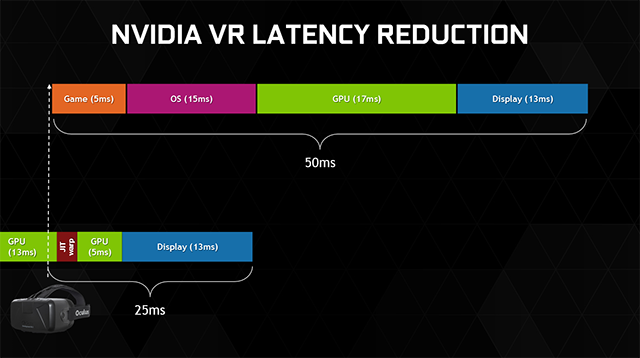

Tom's ran 3DMark's latency test (DX11) last year and the results were awful: every GPU across the field showed 35-50 ms of lag between moving your head and the scene visibly updating.

We still lack verifiable data and benchmarks for this latency using the LiquidVR and GameWorks VR APIs.

The verifiable data is that the thousands of people who own or have demoed VR aren't barfing all over themselves.

If games were running with 35-50 ms of latency on the headsets coming out this month and next, the impressions you'd be seeing would be less "VR is the future" and more "wait, when did I eat Cheetos?"

The SteamVR test can't measure motion-to-photon latency because there's no headset attached to the PC, nor is there a high-frame-rate camera pointed at the headset screen. It's not an API test, and that kind of thing doesn't need to be widely distributed anyway. It's a simple "is my computer powerful enough?" check. It's also probably useful for Valve as a way to gather statistics on the kinds of computers owned by people interested in VR.