marees

Platinum Member

2027 & 2nm

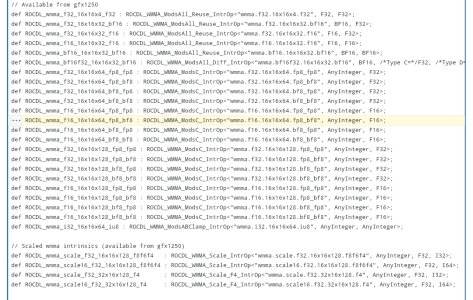

Well, that puts any remaining question about CDNA nomenclature pretty conclusively to bed...

Kinda weird to jizz all over enterprise products at the Consumer Electronics Show though.

We get it, we're not the priority - no need to rub it in.