No RT, yes US (upscaling).

The problem with RT is that it is a debatable tradeoff by every metric.

It's not just "framerate vs image quality", it's so much more than that.

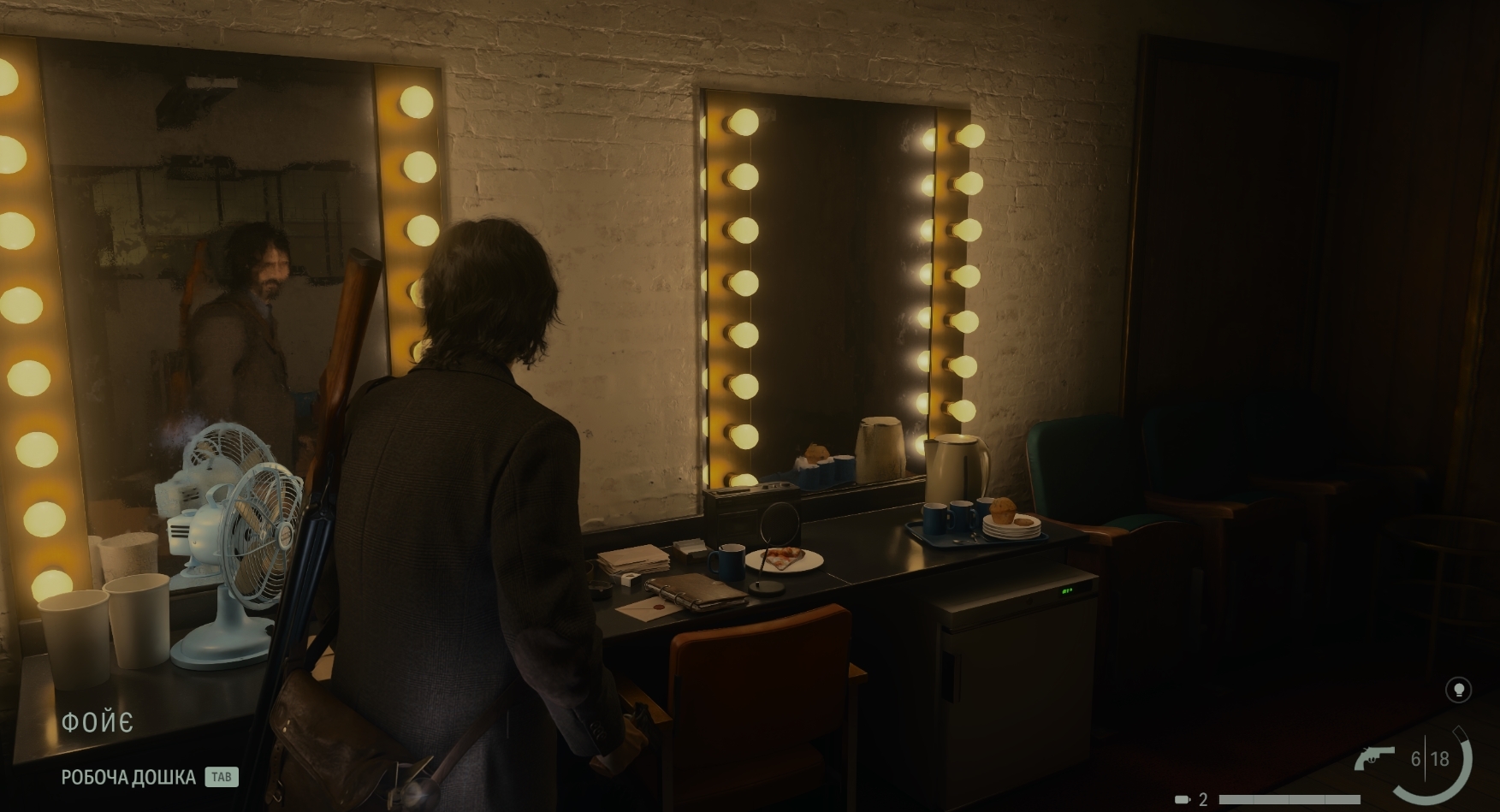

RT adds an extra layer of complexity to lighting and design that, in many cases, completely breaks the mood of a scene. If you're going to give me better shadows, realistic lighting, reflections, or ambient lighting effects, good. But if there are scenes, particularly in closed environments, where the artists painstakingly set a certain mood, etched out visual details, brought certain elements forward and toned others down, and then RT comes in and splats its own brand of "new lighting" that throws the whole feel of the place out the window, then I don't want it.

My problem with RT is that, short of PT (path tracing), which is still ridiculously expensive, I don't think it's a reliable lighting technique art-wise. I remember those CP2077 showcases: I was looking at the RT/no-RT comparisons and thinking "better with RT", "worse with RT", "better with RT", "worse with RT", image after image. It wasn't even 80% of the images that looked better; it was closer to 60% at best. A lot of indoor environments turned into smudgy black messes that I would never want to turn on.

As for PT, it solves a lot of my RT problems, but it basically demands 4x upscaling. A 4090, unequivocally a 4K card, has to upscale from a 1080p base to run it. That's... well, that's just ridiculous, plain and simple.
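For reference, the "4x" is a pixel-count ratio: 2160p has four times the pixels of 1080p, so a 1080p base means only a quarter of the final image is actually rendered. A quick Python sanity check, assuming standard 16:9 resolutions:

```python
# Standard 16:9 resolutions as (width, height); assumed, not from any game's settings
RES = {"1080p": (1920, 1080), "1440p": (2560, 1440), "2160p": (3840, 2160)}

def pixels(name: str) -> int:
    """Total pixel count for one of the resolutions above."""
    w, h = RES[name]
    return w * h

ratio = pixels("2160p") / pixels("1080p")
print(f"2160p has {ratio:.1f}x the pixels of 1080p")  # prints "2160p has 4.0x the pixels of 1080p"
```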

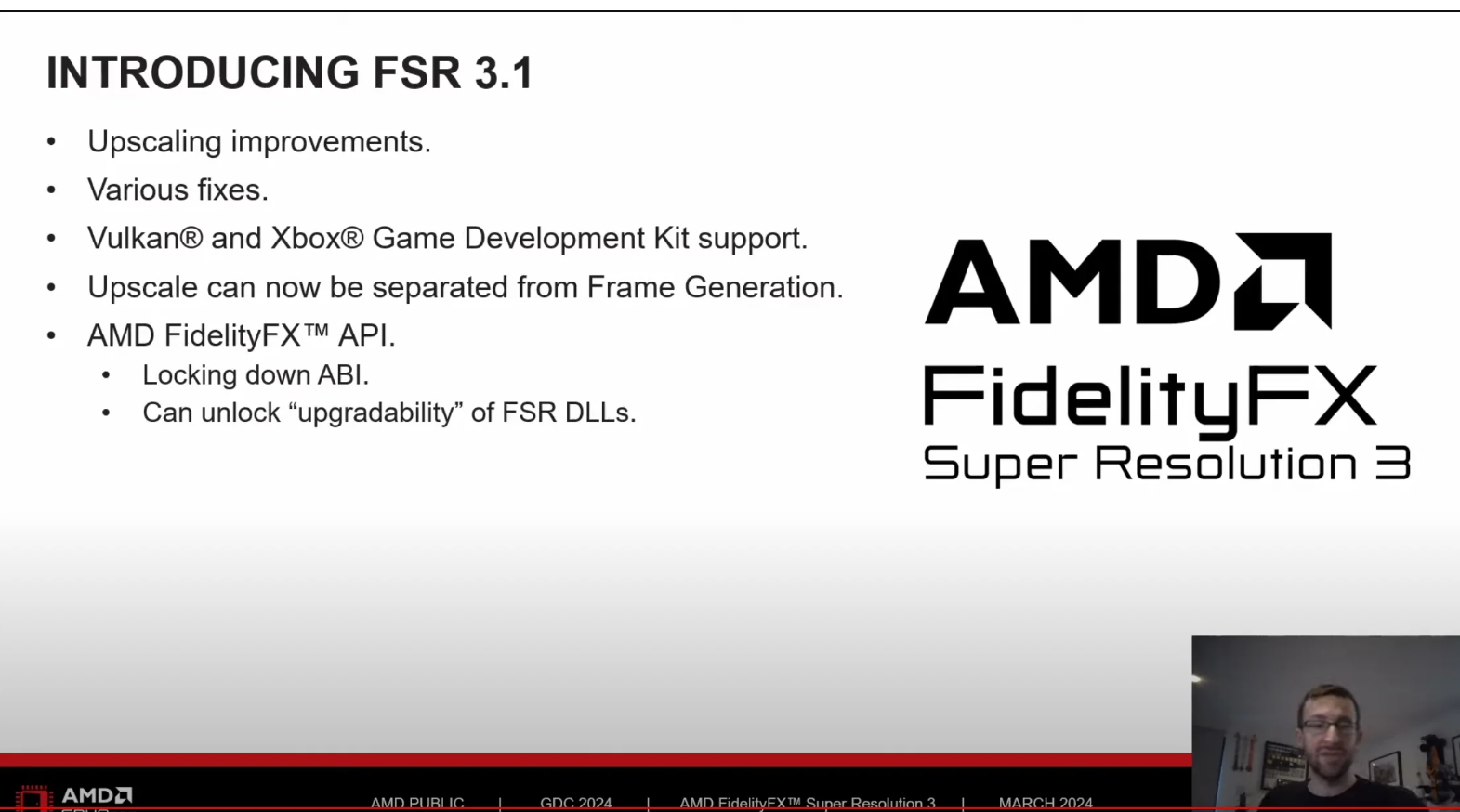

Upscaling, on the other hand, I used to think was just a crutch to make a weak GPU run better, but I've really warmed up to it now.

It's not just "my 1080p card can push 1440p"; it's a much broader "my card can now reliably run a bigger image" or "my card can now produce almost the same image at a much lower wattage cost". No upscaler is 100% perfect, and FSR is routinely noted as the worst of the bunch (and I bought AMD since I gave no fs about RT), but it hasn't stopped me from playing games at 4K when my 7900 XT was a little too weak for it. After the first hour or so of playing "FSR doctor" and finding all the little things that looked worse, I just... played. Without even noticing it anymore.

Professional bemoaners like Tim from HWUB can make their next 20 videos about how "FSR bad", but the intelligent question is "is FSR so bad that you'll notice it?". Of course he won't raise that question, since he'd lose one of his favorite dead horses to beat. But I did play with FSR on, and unless I was actively looking for problems, it was good enough for 99% of the game. That doesn't remove the need for improvement, of course: in my case, going from a 1600p base to 2160p, FSR is already fed a lot of data, and it's far worse from a 1080p base. FSR has shimmering issues, and it has all kinds of reasons to be improved.
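To put "fed a lot" into numbers: assuming the base and output resolutions share the same aspect ratio, the fraction of output pixels actually rendered is (base height / output height)². A rough Python sketch; `render_fraction` is a hypothetical helper for illustration, not anything from FSR itself:

```python
def render_fraction(base_height: int, output_height: int) -> float:
    """Fraction of output pixels actually rendered before upscaling.

    Assumes base and output have the same aspect ratio, so the pixel
    ratio is just the per-axis ratio squared.
    """
    return (base_height / output_height) ** 2

# Compare a few base resolutions upscaled to 2160p
for base in (1080, 1440, 1600):
    frac = render_fraction(base, 2160)
    print(f"{base}p -> 2160p: per-axis scale {2160 / base:.2f}x, "
          f"{frac:.0%} of output pixels rendered")
```

So a 1600p base gives the upscaler roughly 55% of the final pixels to work with, versus 25% from a 1080p base.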

But for all its issues, it's already good enough to use, and broadly speaking it's a net bonus. You want less power draw, more frames, or a higher resolution at a minimal cost in image quality? Upscaling is an excellent way to get one of the three. And I expect that, in time, every single game will just use it by default, because the IQ loss will be tiny and the general gains will be widely recognised.

RT, however, I expect to remain this Nvidia-backed scam, where you are sold on a "revolutionary lighting technique" that doesn't just eat frames like they're brownies, but also routinely swings between better-looking environments and plainly worse-looking ones. It's not "you pay in frames to get better lighting"; it's "you pay in frames to get different, uneven lighting, some amazing, some just plain worse". For RT to fully realise its potential, it can't be a tacked-on system on top of a game; we will need games that are designed around RT from the start.

And why don't we have those? Because Nvidia are scammers. Because RT on Turing was a fat joke. RT on Ampere was effectively unusable on cards below the 3080, due to a lack of raw compute or a tiny VRAM buffer (my condolences, 3070/Ti buyers).

The games industry doesn't run on "pay $50 more, get RT in the game". It runs on "everyone pays $70 and gets the game". Hence the question for a studio is not "do players want RT?" but "how many people can run RT, and will they still buy the game if we don't include it?". Pushing development time towards RT is one thing, but shipping a game that relies solely on RT is an entirely different affair. A radically different one, because as we've seen with some overly ambitious games (Immortals of Aveum, for example), gating your game behind an $800+ last-gen GPU is just plain stupid.

Nvidia sold the RT meme as "ready" with Turing. It was a lie.

It did it again with Ampere. Less of a lie, but still nowhere near viable for an industry that needs a large number of buyers, not a small class of high-end ones. RT is just not financially viable yet. Anyone saying otherwise either hugs their 3080 every night, crying that they love it, or has the Jensen brain parasite.

When the entire GPU stack, from top to bottom, is properly RT-capable, plus a year or two for people to upgrade, meaning anyone spending between $300 and $3000 will have an RT-capable GPU, then games will be designed with RT in mind from start to finish. And it will be an amazing thing then. But in my opinion we won't get there until 2028. Jensen will have sold snake oil for nearly ten years, while very slowly turning it into wine. The finest of FineWine; AMD should be proud.

P.S. I don't wanna sound like I HATE RT. I saw it in modded FF XIV and found it an absolute improvement. But it's a mod. And honestly, great as the improvement was, it wasn't enough to get me to pay the NV tax. I think RT can be great; I just don't think it's financially viable for studios or for gamers, and the only one really profiting from the fad is Nvidia.