Soulkeeper

Diamond Member

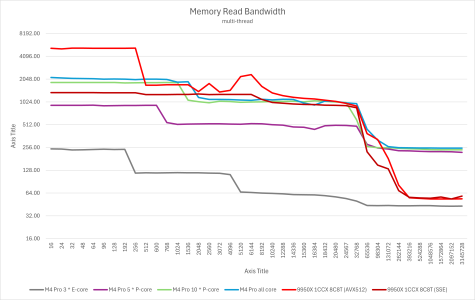

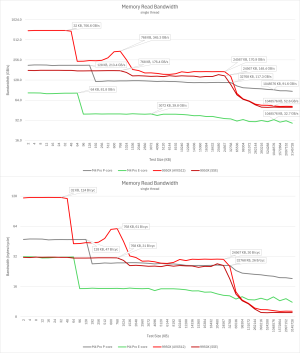

Hello, I was doing some reading on Zen 5 and Threadripper/EPYC and noticed that the CCD links are the limiting factor for maximum memory bandwidth.

E.g., 2 or 4 CCD setups can't fully saturate 8 channels of DDR5.
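To put rough numbers on that, here is a back-of-the-envelope sketch in Python. The DDR5 side is plain arithmetic (transfer rate times 8 bytes per 64-bit channel). The CCD side is an assumption on my part: one link per CCD reading about 32 bytes per fabric clock at a 2.0 GHz FCLK. Some parts reportedly wire two links per CCD, so treat `bytes_per_clk`, `fclk_ghz`, and `links_per_ccd` as placeholders to adjust, not as confirmed specs.

```python
import math

def dram_peak_gbs(channels, mt_per_s, bytes_per_transfer=8):
    """Theoretical peak DRAM bandwidth in GB/s (64-bit channel = 8 bytes)."""
    return channels * mt_per_s * bytes_per_transfer / 1000

def ccd_read_peak_gbs(ccds, fclk_ghz=2.0, bytes_per_clk=32, links_per_ccd=1):
    """Aggregate CCD-to-IO-die read bandwidth in GB/s.

    fclk_ghz, bytes_per_clk and links_per_ccd are ASSUMED values,
    not confirmed figures for any specific SKU.
    """
    return ccds * links_per_ccd * fclk_ghz * bytes_per_clk

def min_ccds_to_saturate(channels, mt_per_s, fclk_ghz=2.0,
                         bytes_per_clk=32, links_per_ccd=1):
    """Smallest CCD count whose combined link bandwidth covers the DRAM peak."""
    per_ccd = ccd_read_peak_gbs(1, fclk_ghz, bytes_per_clk, links_per_ccd)
    return math.ceil(dram_peak_gbs(channels, mt_per_s) / per_ccd)

if __name__ == "__main__":
    dram = dram_peak_gbs(channels=8, mt_per_s=6400)
    print(f"8ch DDR5-6400 peak: {dram:.1f} GB/s")
    for ccds in (2, 4, 8, 12):
        link = ccd_read_peak_gbs(ccds)
        verdict = "can cover" if link >= dram else "cannot cover"
        print(f"{ccds:2d} CCDs: {link:6.1f} GB/s link read peak, {verdict} DRAM peak")
    print("Min CCDs under these assumptions:", min_ccds_to_saturate(8, 6400))
```

These are theoretical peaks only; real sustained bandwidth is lower on both sides, so the crossover point on actual hardware may differ.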

Is the same true on the Intel side?

Is there any setup besides 8 or 12 CCDs that can fully utilize many channels of DDR5? (Saturating them would also require a highly threaded workload.)

I know the trend over the past 25+ years has been CPU speed greatly outpacing memory bandwidth, with less bandwidth per core/thread as time goes by (and larger caches to help hide it).

I'm just interested in your thoughts on this and if anyone has been following this in detail lately.
