Here is David Schor's take on V-Cache:

AMD 3D Stacks SRAM Bumplessly – WikiChip Fuse

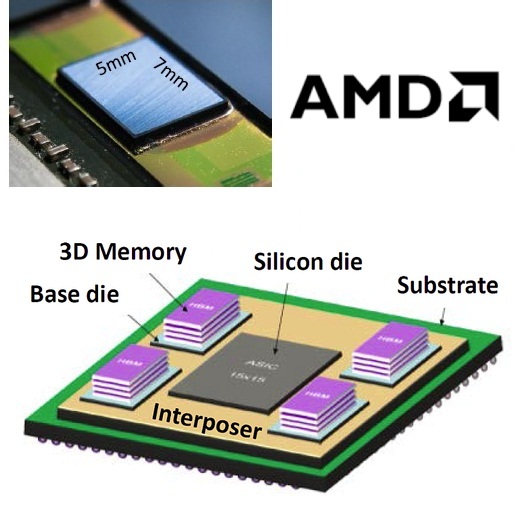

View attachment 45426

I think it is pretty obvious by now that the CCD solution with optional V-Cache will remain a key feature of Zen 4. It is nicely flexible and low risk. The big question in my view is whether they will finally move away from the slow and power-hungry interconnect implemented in the organic substrate to a faster, wider and more power-efficient chiplet interconnect on silicon interposer and/or over embedded silicon bridges. The ugly mock-ups from the "leaked" sources indicate that they will not. I think and hope they will. Despite the positive feedback to my own mock-ups based on silicon interposers and bridges, I find it hard to gauge the general consensus here. Do you think the current interconnect in the package can be extended to Zen 4 — with higher bandwidth demands and more chiplets complicating the routing further — or is AMD bound to move to a more efficient interconnect on silicon?

Is there anything from AMD indicating that they actually plan on using HBM on Epyc die? With the amount of SRAM cache, I don't know if the an HBM L4 cache would actually be that useful. Epyc is going to have a lot of DDR5 channels and a lot of SRAM cache.

At the moment, I am kind of thinking that initial Zen 4 may still be similar to Zen 3, with just pci-express 5 level interconnect speeds. The large interposers have scaling issues. How do you efficiently and cost effectively make intermediate products? Zen 3 goes from 8 to 64 cores, although the 8 core is still 8 cpu die. You probably don't want to use a giant interposer for a smaller number of cpu die, so do they use multiple interposer sizes?

I had the idea that they may split up the IO die into multiple active interposers, but this may be unlikely. It makes some sense since it would be very modular. The current IO die is around 435 square millimeters; I don't know what that will shrink to on a TSMC process, but splitting it into multiple active interposers may make sense. Some parts will get bigger if they use more memory channels and upgrade to pci express 5. The internal pathways will need to be wider and/or higher clock to handle the extra bandwidth requirements. It will not scale as well with the IO, so it may still be rather large if it is a single chip rather than some kind of stacked solution.

A possibly much lower risk option would be to have close to the same layout except with the cpu to IO die connections replaced by local silicon interconnects. That would make the common case very cheap. The version with low cpu chip count (4 or 6, depending on layout) would have very short embedded silicon bridges. For routing under another cpu chiplet, they would need either a really long silicon bridge or they would need to kind of daisy chain them. I could see them using rows of 3 chiplets with tiny embedded silicon bridges between each. The silicon bridges could probably be all the same and very small. The number of hops probably isn't that important. The connection would be very wide and they wouldn't need to be serialized. They might be able to set up the cpu chiplet to pass through multiple connections such that they aren't shared.

Any of these may contain stacked components, like stacked L3 caches or stacked IO die:

1. Same as Zen 3, except double the link speed to pci-express 5 levels and done.

2. Giant interposer(s) under everything as in mock-ups. Expensive and may be hard to scale to different number of chiplets.

3. Tiny, modular interposers with IO and other chiplets stacked. Possibly expensive. Still need to connect interposers together, so may be unlikely; why use an interposer rather than local silicon interconnect?

4. Same type of layout as Zen 3, except use local silicon interconnect to connect cpu chiplets to IO die. No actual interposers. Possible daisy chain cpu chiplets with local silicon interconnect. May be shared link or pass through. The bandwidth could be ridiculously high, so even it it were shared, it might not be an issue.

Any other ideas? There are a lot of possibilities with stacking, so there may be a surprise, but we have some idea of what connection technology TSMC has available.

I am kind of leaning towards #4. It would help a lot with power consumption and bandwidth without introducing any scaling issues. It is obviously still very modular and the cost would scale. You could make the common 4 cpu chiplet version with 4 cpu chiplets, 4 LSI die, and the IO die. You could scale all of the way up to 8, 12 or more cpu chiplets with the cost scaling with it. I don't know if the local silicon interconnect can be used across multiple products the way the cache die probably can. It could be a standardized, wide infinity fabric protocol chip that might get used to connect other infinity fabric chiplets, like gpus and fpga devices. It is also possible that the initial version is just #1 and extra 3D stuff comes later. Perhaps they have a multi-layer IO die to take advantage of different processes, but leave the interconnect mostly the same.