- Oct 16, 2019

- 2,629

- 5,938

- 146

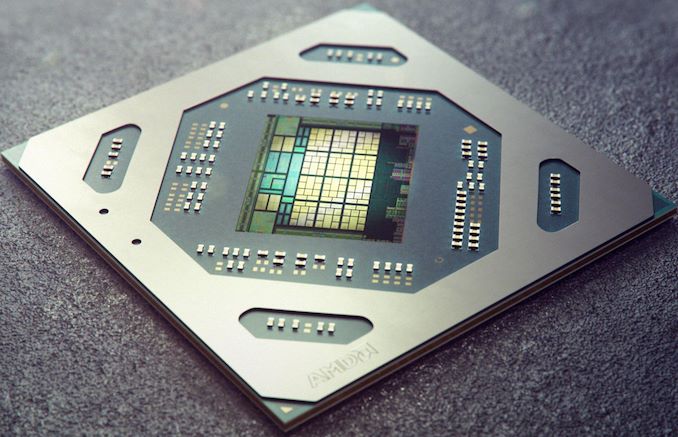

All die sizes are within 5mm^2. The poster here has been right on some things in the past afaik, and to his credit was the first to saying 505mm^2 for Navi21, which other people have backed up. Even still though, take the following with a pich of salt.

Navi21 - 505mm^2

Navi22 - 340mm^2

Navi23 - 240mm^2

Source is the following post: https://www.ptt.cc/bbs/PC_Shopping/M.1588075782.A.C1E.html

Navi21 - 505mm^2

Navi22 - 340mm^2

Navi23 - 240mm^2

Source is the following post: https://www.ptt.cc/bbs/PC_Shopping/M.1588075782.A.C1E.html