Discussion NV Re-Enter ARM PC market in Q2-2026!

DrMrLordX

Lifer

> What were nv, mediatek, & Microsoft doing in those 90 days ?

Excellent question. Sounds like something bad was discovered during ES/QS stage, maybe forcing them to respin? Either that or their entire production cycle is broken and inefficient. Or both!

poke01

Diamond Member

> It apparently taped out last December
>
> But Charlie's leak published only this April
>
> What were nv, mediatek, & Microsoft doing in those 90 days ?

I mean in 3 years replace Mediatek with Intel too.

This is why you need to own your hardware stack like AMD and Apple do. Intel owns it now too.

Intel replacing its iGPU IP with Nvidia will for sure see delayed products.

adroc_thurston

Diamond Member

> Intel replacing its iGPU IP with Nvidia will for sure see delayed products.

No?

poke01

Diamond Member

Hm. What’s wrong with N1X then? Is it Mediatek fault?

adroc_thurston

Diamond Member

poke01

Diamond Member

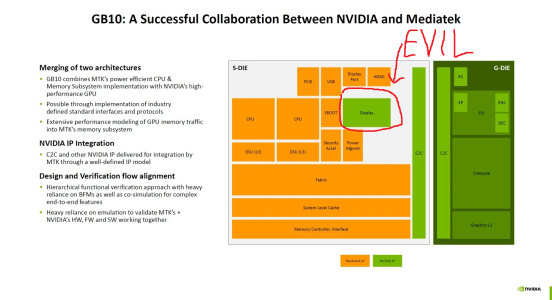

I love how you have this picture saved.

Tup3x

Golden Member

> What's the problem with the display block? Does it suck compared to MediaTek IP?

If I'd have to guess, they have issues with integrating that NVIDIA ip and they have bugs that make the thing unstable.

Or everything is just guesswork and there are no hardware issues at all. I wouldn't be surprised if the real issue is Microsoft or that the drivers just aren't ready yet.

adroc_thurston

Diamond Member

> Does it suck compared to MediaTek IP?

It just flat out sucks.

NV display cores are just kinda really bad.

> If I'd have to guess, they have issues with integrating that NVIDIA ip and they have bugs that make the thing unstable.

Oh no it sucks all-around.

MTK has nothing to do with it.

igor_kavinski

Lifer

> NV display cores are just kinda really bad.

I remember from the old days that Radeon had the best 2D engine, followed by Intel and then Nvidia. Still true?

adroc_thurston

Diamond Member

> I remember from the old days that Radeon had the best 2D engine, followed by Intel and then Nvidia. Still true?

Apple's a very strong contender to AMD now (better for the most part, really).

Rest, yeah. Nothing ever happens.

NVIDIA DGX Spark Arrives for World’s AI Developers

NVIDIA today announced it will start shipping NVIDIA DGX Spark™, the world’s smallest AI supercomputer.

Starting Wednesday, Oct. 15, DGX Spark can be ordered on NVIDIA.com. Partner systems will be available from Acer, ASUS, Dell Technologies, GIGABYTE, HP, Lenovo, MSI as well as Micro Center stores in the U.S., and from NVIDIA channel partners worldwide.

Saylick

Diamond Member

If the rumors are true, this will effectively be a paper launch since the number of units sent to retailers will be small.

Paper launch? It is in people's hands now https://videocardz.com/newz/nvidia-...ng-as-jensen-delivers-first-unit-to-elon-musk ;P

poke01

Diamond Member

DoA btw

video with tests

> DoA btw

Really Why ?

So the bit about controlled benchmarks from selected reviewers was true. I guess everybody got the same reviewer guide. Unless I missed it, nobody ever talks about the CPU part of this thing: how it compares in CPU-only inference, how fast it can compile new libs, what the power draw is, and whether it works outside the two Linux distros they officially mention.

First power measurements I have seen and also some interesting bits https://www.servethehome.com/nvidia-dgx-spark-review-the-gb10-machine-is-so-freaking-cool/

There are a few clear challenges working with the GB10. Somewhat surprisingly, video output is one of those areas that you think any NVIDIA product would nail. The Spark has been challenging to say the least. The LG OLEDs we have that are 1440p, display a garbled mess out of the HDMI port if set to 1440p output in the OS. Likewise, ultra widescreen monitors were a no-go.

At idle, when we did our power measurements last week, this system was idling in the 40-45W range. Just loading the CPU, we could get 120-130W. Adding the GPU, and other components we could get to just under 200W, but we did not get to 240W. Something to keep in mind is that QSFP56 optics can use a decent amount of power.

Also, in many of the AI inference workloads with LLMs we were using 60-90W and the system was very quiet. There is a fan running, but if you are 1-1.5m away it is very difficult to hear and it never hit 40dba when we were not stress testing the system.

LightningZ71

Platinum Member

I believe that the term used was "Evil"

now we are learning why...

The Hardcard

Senior member

> Really Why ?

It won’t be competitive. Both AMD and Nvidia missed the target with 256-bit memory. For LLMs, bandwidth is as important as compute, and the Mac Studios have a 512-bit bus with up to 128 GB of RAM and a 1024-bit bus with up to 512 GB of RAM.

The huge knock on Macs is the compute is way too low, but the Apple10 GPU architecture resolves that with the neural accelerators (tensor cores). Comparable compute with double to quadruple the bandwidth.

Apple’s MLX framework now has a functioning CUDA backend, so model algorithms run as-is on Nvidia hardware. Most people, if not everyone, who want a DGX Spark for locally prototyping and fine-tuning a planned datacenter deployment can do better with the upcoming Mac Studios as well.
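To put rough numbers on the bus-width argument in the post above, here is a back-of-the-envelope sketch in Python. It assumes every bus runs at the same 8533 MT/s memory speed and that a dense model streams all of its weights once per generated token; both the transfer rate and the 70 GB model size are illustrative assumptions, not figures from the thread, and shipping products differ in memory speed.

```python
def peak_bandwidth_gbs(bus_width_bits: int, transfer_rate_mts: float) -> float:
    """Theoretical peak bandwidth in GB/s: bytes per transfer times transfers per second."""
    bytes_per_transfer = bus_width_bits / 8
    return bytes_per_transfer * transfer_rate_mts * 1e6 / 1e9


def decode_tokens_per_s_ceiling(bandwidth_gbs: float, model_size_gb: float) -> float:
    """Upper bound on single-stream decode speed if all weights are read once per token."""
    return bandwidth_gbs / model_size_gb


ASSUMED_RATE_MTS = 8533  # assumption: identical memory speed on every bus
MODEL_SIZE_GB = 70       # assumption: hypothetical dense model with ~70 GB of weights

for width in (256, 512, 1024):
    bw = peak_bandwidth_gbs(width, ASSUMED_RATE_MTS)
    ceiling = decode_tokens_per_s_ceiling(bw, MODEL_SIZE_GB)
    print(f"{width:>4}-bit bus: {bw:7.1f} GB/s peak, at most {ceiling:5.1f} tokens/s")
```

Under these assumptions the ceiling scales linearly with bus width, which is the "double to quadruple the bandwidth" point: compute only helps once the weights arrive fast enough. Real decode throughput lands below these ceilings.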
