-

We’re currently investigating an issue related to the forum theme and styling that is impacting page layout and visual formatting. The problem has been identified, and we are actively working on a resolution. There is no impact to user data or functionality, this is strictly a front-end display issue. We’ll post an update once the fix has been deployed. Thanks for your patience while we get this sorted.

You are using an out of date browser. It may not display this or other websites correctly.

You should upgrade or use an alternative browser.

You should upgrade or use an alternative browser.

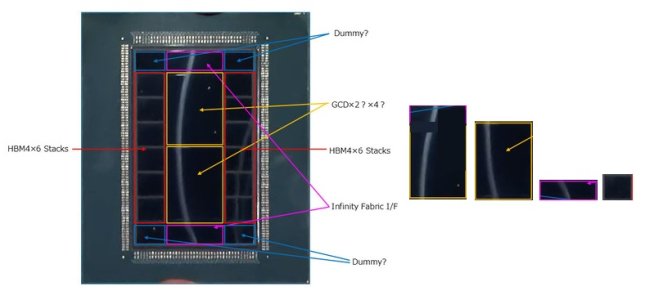

Discussion MI455X Estimation of structure etc

- Thread starter 1250


1250

Member

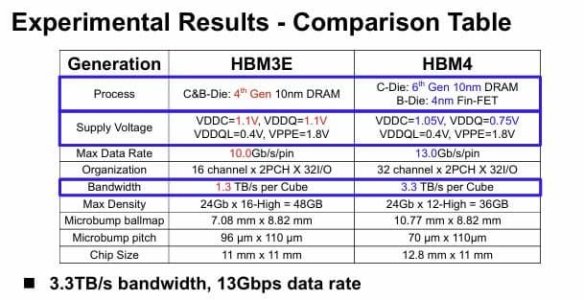

Base die process update (presumed)

Legend: standard HBM (S-HBM), custom HBM (C-HBM); H = SK hynix, S = Samsung, M = Micron

S-HBM4 (also 4E)

H: 12nm; S: SF4X; M: DRAM (N12)

C-HBM4 (AMD only)

S: SF4X; H, S: 12nm? (a different base die on the same process)

C-HBM4E

H: N3P; S: SF2; M: N3P

P.S. That means AMD has to make three versions of the base die, spending hundreds of millions of dollars (the memory guys' claim).

Base die process update (presumed)

standard hbm(S-hbm) custom hbm(C-hbm) H(skH) S, M(micron)

S-hbm4(also 4E)

H 12nm S sf4x M dram(tsmc?)

C-hbm4(only amd?)

S sf4x

C-hbm4E

H n3p S sf2 M n3p

Are you saying that AMD may offer 2 different base dies - Standard and Custom?

BTW, who is making the custom base die?

1250

Member

The memory guys (Samsung, SK hynix).

Here, it's basically C-HBM4.

The memory guys are basically not interested in AMD (or they are under a strong NDA).

The consensus among the official custom HBM teams (probably) is that AMD isn't using C-HBM4 due to cost issues and AMD's lack of capacity.

But this time, my side has a consultant on the scene (maybe for Google, Broadcom, AMD, etc.; he knows the LPDDR capacity).

So the memory guy with the strongest argument says that the supply shortage has led to a switch to S-HBM (his claim: the LPDDR controller is inside the GPU, and the LPDDR is connected to the IOD or MID, just like on a graphics card).

P.S. He's probably a planning person and has access to the MI4xx plan.

renticular

Junior Member

This custom base die stuff only makes sense if you have some type of standardization regarding the interface between the DRAM stacks and the base die.

I would propose the following:

If the memory guys do not want to change their DRAM array structure etc., one solution could be an intermediate interface die placed between the DRAM stack and the base die. This intermediate interface die would act as an RDL (redistribution layer).

That would also be a solution for vendor-specific base dies (AMD might design its base die differently from Nvidia). But the better solution would be for the custom base die's interface to the DRAM stack to be truly standardized.

The memory guys might still need the RDL die, but it would be the same for all custom base die versions from any chip design company.

1250

Member

That's why I know JEDEC exists.

Joe Macri (Board of Directors) is the AMD vice president known for creating HBM.

But the problem here is that memory guys claim JEDEC only sets the physical specifications, and S-HBM is no different from custom.

I've been reading some of the opinions exchanged on Twitter, so it's probably easier to understand.

There are differences of opinion.

AMD is not exactly going to be sharing proprietary knowledge with Samsung (or SK hynix) about custom base dies it hasn't yet sent to fab, so it stands to reason that an off-the-shelf base die engineer isn't going to know what AMD's capabilities are yet.

I'm not even sure there is any reason such an engineer would have access to that information even if the Samsung fab had the masks on site. Competent corporate security would demand proper separation of information for the fabs to keep their semiconductor customers' trust and avoid ruinous lawsuits.

1250

Member

The person making the strongest claims right now is (probably) a planning person. He is confident that he has the latest and greatest information. He knows what HBM speeds companies want, etc. He also seems to have access to the MI455X planning. There is also a consultant who works with Google, AMD, etc. He has hinted that he has developed a custom HBM, but I think it could be a PoC.

P.S. The memory guys' mainstream opinion: the first C-HBM is Nvidia's C-HBM4E.

adroc_thurston

Diamond Member

Once again, this is wrong.

MI400s have kustom HBM with LPDDR shoreline stashed in each base die.

1250

Member

Thanks.

I don't know what information they have access to, and they don't even seem to know that they're under an NDA. They're convinced that 4E isn't a hostile custom HBM, but they claim they have no information about 5 (parallel to them). I've tried suggesting solutions, but they're completely useless. They're brainwashed by development costs and such. In their view, AMD is a small corner-store company with insufficient capabilities. They think JEDEC is useless and that they're just making their own custom products.

adroc_thurston

Diamond Member

"AMD is a small, hole-in-the-wall company with insufficient capabilities."

this is very very very funny (AMD R&D opex was 600m higher YoY last Q).

1250

Member

The conversation on X above is stereotypical: they talk as if the development cost is 600m.

If it cost that much, I'd rather use S-HBM. But nah.

BTW, what do you think about the unit price? They believe that Nvidia is buying it for $700, and Lisa is buying it cheap (450) with a lower clock speed. I wonder if the unit price will go up if they use a custom base die.

1250

Member

Is C-HBM worth it?

I'm worried (just an AMD fanboy).

adroc_thurston

Diamond Member

"They believe that Nvidia is buying it for $700, and Lisa is buying it for cheap(450) with a lower clock speed"

meh.

"C-hbm Is it worth it?"

Yeah, it's more shoreline for various nefarious purposes.

1250

Member

"custom AMD Instinct GPU based on the MI450 architecture"

What does this mean? Tell me, sir.

adroc_thurston

Diamond Member

Means MI455X with a different flexIO split.

1250

Member

"Do we know how LPDDR5X MI450 will have?"

Capacity? 32x24 or 576.

MrMPFR

Senior member

Yes, sorry for the word salad.

Hmm. That's only a ~30% bump vs HBM4 capacity. Would've expected higher LPDDR5X capacity.

1250

Member

Nah, sorry, I think it's 768GB. Below 1TB (48, 64).

MrMPFR

Senior member

That sounds more reasonable. So ~1/2 of Vera CPU LPDDR5X.

"The conversation of x above is a stereotype, they talk as if the development cost is 600m. If it costs that much, I'd rather use s-hbm"

Based on adroc's reply, I'd assume that this is the total change in AMD's R&D expenditure from 2024 Q4 to 2025 Q4 across all silicon µarchs in various compute types (CPU, GPU, FPGA, DPU, etc.), hardware IO (consumer and enterprise/server, including motherboard chipsets) and software R&D (ROCm/HIP, graphics drivers, CPU compilers, GPUOpen academic work/collabs, etc.), not spending on one specific memory interface type like HBM.

It took a large amount of engineering work over multiple campuses to run a company like AMD even back when they had scaled down in the mid-Bulldozer days, but these days the workload must be huge 😅
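For anyone who wants to sanity-check the LPDDR5X capacity figures traded earlier in the thread (576, 32x24, "~30% bump", "~1/2 of Vera"), here is a rough Python sketch. The HBM4 capacity per GPU (432 GB) and the Vera CPU LPDDR5X capacity (1.5 TB) are not stated anywhere in this thread; they are assumptions plugged in for illustration, and the helper name `bump_over_hbm` is made up.

```python
# Rough sanity check of the LPDDR5X capacity figures quoted in the thread.
# ASSUMPTIONS (not stated in the thread): 432 GB of HBM4 per GPU and
# 1.5 TB (1536 GB) of LPDDR5X per Vera CPU.
HBM4_GB = 432
VERA_LPDDR5X_GB = 1536


def bump_over_hbm(lpddr_gb: int, hbm_gb: int = HBM4_GB) -> float:
    """Fractional capacity increase of the LPDDR pool over the HBM pool."""
    return lpddr_gb / hbm_gb - 1.0


# The two candidate capacities mentioned in the thread.
candidates = {"576 GB": 576, "32 x 24 GB": 32 * 24}

for label, gb in candidates.items():
    print(
        f"{label}: {gb} GB total, "
        f"{bump_over_hbm(gb):+.0%} vs HBM4, "
        f"{gb / VERA_LPDDR5X_GB:.2f}x Vera LPDDR5X"
    )
```

Under those assumed baselines, 576 GB works out to roughly a one-third increase over the HBM4 pool, while 32 x 24 = 768 GB is exactly half of the assumed Vera figure, which lines up with the two replies above.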
