Charlie/Jim are more like the National Enquirer.

Well put. But the question remains: which tech site would be the TMZ equivalent? VideoCardz?

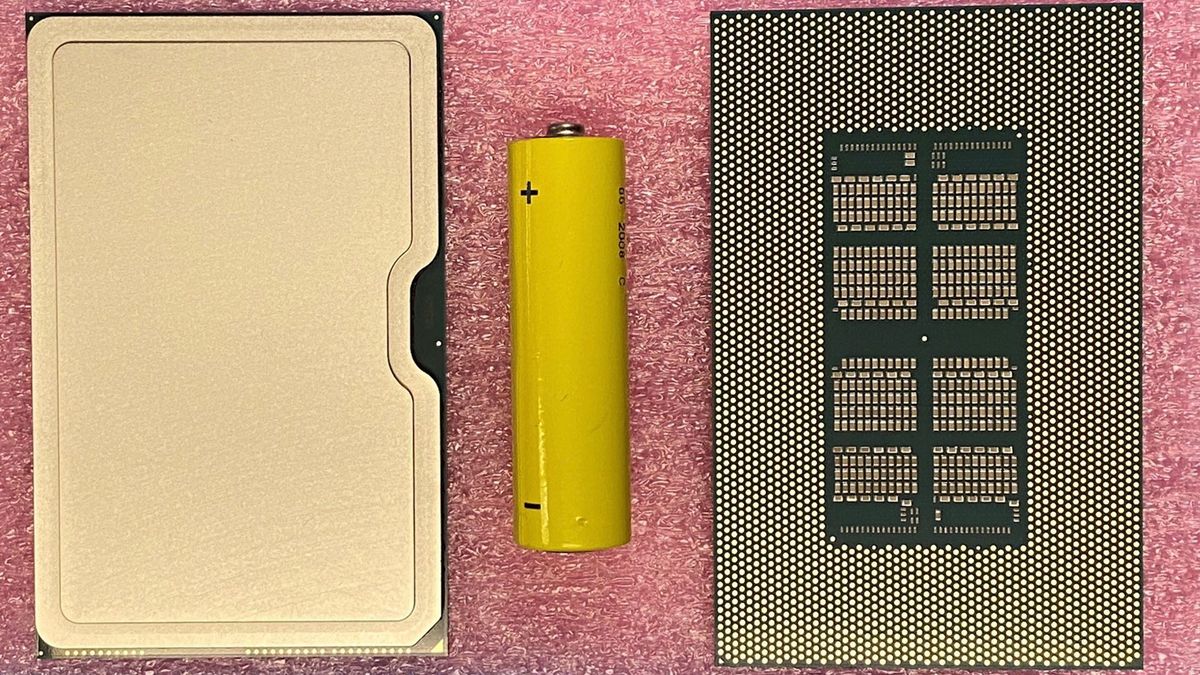

Another tease: the BFP (big fabulous package):

Do you realize that it's a socketed chip? Yes, the HPC version might come in sockets!

Though it's not relevant to graphics, especially on client.

Huh, interesting! Should certainly make it easier for server vendors to build custom chassis for it.

What makes you say that? The gold "this way up" tab on the card?

Even BGAs have an orientation indication, so it's not impossible that they'd use an arrow on a BGA.

Yeah, and somebody mentioned that. There's no reason to develop a BGA socket like that.

Earlier tweets also showed the picture of the second package upside down. It was LGA.

This avoids some performance regressions on Gen12 platforms caused by SIMD32 fragment shaders reported in titles like Dota2, TF2, Xonotic, and GFXBench5 Car Chase and Aztec Ruins.

This doesn't bode well for Intel's graphics line...

Why? It might save their dGPU effort entirely. They'll actually have a working node, unlike the CPU design teams.

Why keep sinking resources into competing in a market which isn't your area of expertise?

Firstly, I don't think Intel can really compete just on the strength of their design. They're up against AMD and NVIDIA, who have been refining their discrete GPUs for decades and have deep experience, connections with game developers, and highly optimized drivers. If Intel had a process advantage, that could cancel out their difficulties... but not on the same node.

The GPGPU products can stick around but they need to be on a competitive node. Now it could be on the chopping block if they lose Aurora.

Intel are talking about Tiger Lake (and its Xe GPU) this week. Looking forward to hearing about the architecture!

Moore's Law Is Dead put out his 2-year breakdown on graphics chips. Some bits on multi-chip GPUs and Intel's infighting.

Some Intel GPU info: https://videocardz.com/newz/intel-to-unveil-xe-hpg-gaming-architecture-with-hardware-ray-tracing

-Xe-HPG for gamers in 2021, using GDDR6 and external foundries, and also has hardware ray tracing

-Xe SG1, which is DG1 but for servers

-HP/HPC uses multi-tiles and is on Intel's 10nm process

What's the battery for? Scale?

Ponte Vecchio on 10nm? That would be a big step back.

Oh, giant-head-pencil-neck guy who makes up weird crap out of thin air. Or well, not thin air; the directions fans are turned... lol